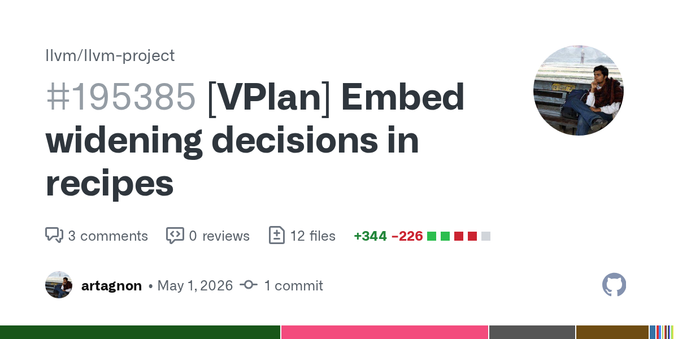

Current status: Attempting to fix the build of devel/libclc on #HardenedBSD 16-CURRENT. #FreeBSD recently bumped #llvm in their main development branch to llvm 21.

Whenever the llvm major version is bumped in src, we need to bump the default llvm version in ports. This is because we build base libraries with LTO, and llvm's LTO implementation is not forward-compatible: an older llvm cannot read the bitcode that a newer llvm produced.

In other words, the default llvm version in ports must be equal to or higher than the version in src.
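
To make that rule concrete, here is a minimal sketch of the check in Python. It is only an illustration: the function name and the version numbers passed to it are hypothetical, and nothing like this exists in the src or ports trees as far as this post is concerned.

```python
def ports_default_is_compatible(src_llvm_major: int, ports_llvm_major: int) -> bool:
    """Return True when the ports default llvm is new enough for src.

    Assumption for illustration: compatibility is decided purely by the
    major version, and a reader must be at least as new as the producer.
    """
    return ports_llvm_major >= src_llvm_major


if __name__ == "__main__":
    # Hypothetical values: src at llvm 21, ports default still at an older major.
    for ports_major in (19, 21, 22):
        ok = ports_default_is_compatible(21, ports_major)
        print(f"src=21 ports={ports_major} compatible={ok}")
```

Equal versions pass, newer ports versions pass, and an older ports default fails, which is the situation right after a bump in src.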