#Statistics

#HellingerDistance

#NeuralNetworks

#GenerativeAdversarialNetworks

#RobustStatistics

#InfluenceFunction

Hellinger loss function for Generative Adversarial Networks

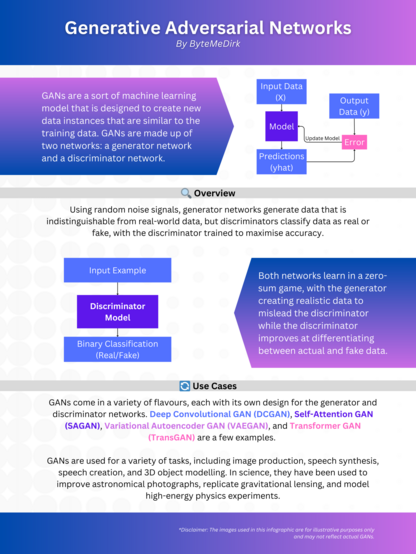

We propose Hellinger-type loss functions for training Generative Adversarial Networks (GANs), motivated by the boundedness, symmetry, and robustness properties of the Hellinger distance. We define an adversarial objective based on this divergence and study its statistical properties within a general parametric framework. We establish the existence, uniqueness, consistency, and joint asymptotic normality of the estimators obtained from the adversarial training procedure. In particular, we analyze the joint estimation of both generator and discriminator parameters, offering a comprehensive asymptotic characterization of the resulting estimators. We introduce two implementations of the Hellinger-type loss and we evaluate their empirical behavior in comparison with the classic (Maximum Likelihood-type) GAN loss. Through a controlled simulation study, we demonstrate that both proposed losses yield improved estimation accuracy and robustness under increasing levels of data contamination.