EU wants to ban AI used for abusive deepfakes

The EU wants to ban AI applications used to create abusive sexualized deepfakes, and has now reached an agreement. Other rules for AI providers, however, are to take effect later than previously planned.

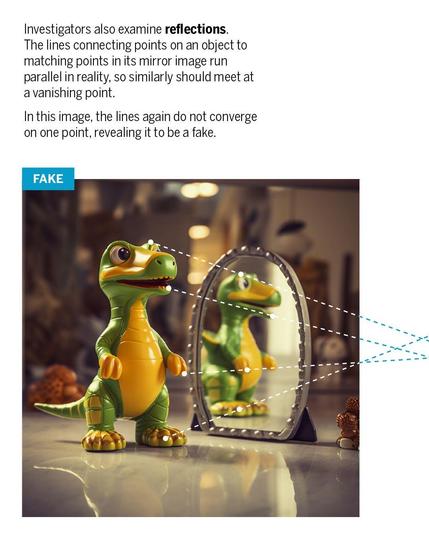

#Deepfakes are everywhere, but #DigitalForensics investigators are fighting back:

https://ground.news/article/italys-meloni-denounces-deepfake-photo-as-a-political-attack?utm_source=mobile-app&utm_medium=newsroom-share

AGI: The deepfake of Meloni reignites the European debate on the use of AI

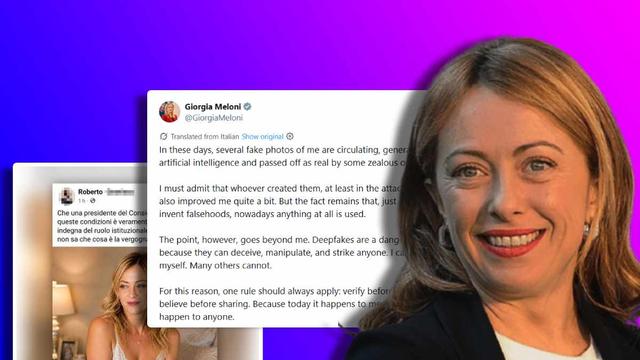

AGI - The doctored image of Prime Minister Giorgia Meloni has reignited the debate in Europe about AI-generated deepfakes. The Italian Prime Minister was the first to comment on the fake circulating online, remarking with her usual irony that “whoever made them, at least in the attached case, even improved my looks quite a bit,” before turning to the deeper point. “The point, however, goes beyond me. Deepfakes are a dangerous tool because they can deceive, manipulate and target anyone. I can defend myself. Many others cannot,” she wrote on social media, posting the lingerie image that had been circulating online for days.

Reactions in Brussels

This appeal was not ignored in Brussels, where several representatives of ECR and Brothers of Italy expressed solidarity, a position not shared by the opposition.

European Parliament Vice-President Antonella Sberna stressed that “this is a serious and rapidly spreading phenomenon that strikes women serving in institutions in a particularly insidious way, undermining their personal dignity and public role, as well as distorting reality and deceiving citizens.”

“A firm and immediate response is essential,” she added. “That is why a decisive, coordinated commitment from the European institutions is needed to effectively counter the spread of AI-generated deepfakes.”

Carlo Fidanza, head of the Brothers of Italy delegation to the European Parliament, and Stefano Cavedagna, also of Brothers of Italy, announced that they would table an urgent resolution calling for “the concrete application of the AI Act, with mandatory labelling of artificially generated content. Without clear technical specifications, this protection remains ineffective today. False content and deepfakes circulating online must be made immediately recognizable to users. Otherwise, as Prime Minister Meloni said, citizens could suffer significant economic and reputational damage. Either these practices are regulated and stopped as soon as possible, or Italian and European citizens face enormous problems.”

#Meloni #European #GiorgiaMeloni #Europe #Italian #first #Deepfakes #Brussels #BrothersofItaly #Parliament #AntonellaSberna #StefanoCavedagna #theAIAct

AI-created virtual girlfriend earned $43,000 in a month

Creating an AI virtual influencer used to take between six months and a year and a half; now it takes just four weeks, and next time it may take only a weekend. https://pepikhipik.com/2026/05/06/virtualni-pritelkyne-vytvorena-ai-vydelala-za-mesic-43-000-dolaru/

Italy's PM Giorgia Meloni Flags AI-Generated Obscene Image Of Her Circulated By Political Opponents