SEAR, a.k.a. "𝐒imple and 𝐄fficient 𝐀daptation of Visual Geometric Transformers for 𝐑GB+Thermal 3D Reconstruction", is now available on arXiv (https://arxiv.org/abs/2603.18774v1).

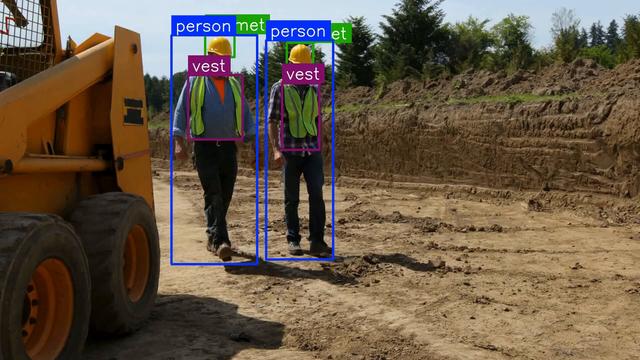

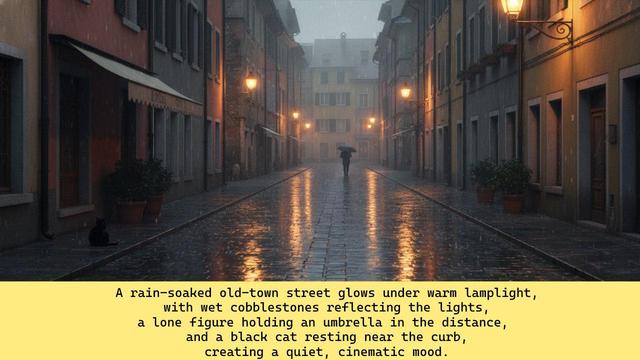

Multimodal 3D reconstruction—especially combining RGB and thermal data—has long been a challenge due to the difficulty of aligning these distinct modalities. Our work introduces a novel fine-tuning strategy that adapts pretrained visual geometry transformers to handle RGB+Thermal (RGB-T) inputs efficiently. The result? State-of-the-art performance in RGB-T 3D reconstruction and camera pose estimation, even with a (very) small training dataset and under extreme conditions like low light or dense smoke.

Key highlights:

• 29%+ improvement in AUC@30 over existing methods

• Short training times and negligible inference overhead compared to RGB-only models

• New dataset with RGB-T sequences across diverse conditions

• Open-source code & models will be available on GitHub soon (https://github.com/Schindler-EPFL-Lab/SEAR)

If you’re working in computer vision, robotics, or multimodal sensing, SEAR offers a practical, efficient solution for integrating thermal and RGB data—opening doors for applications in search & rescue, industrial inspection, and autonomous navigation.

#computervision #research #machinelearning #preprints #thermal #3d #ai #vggt #vision