Anthropic (@AnthropicAI)

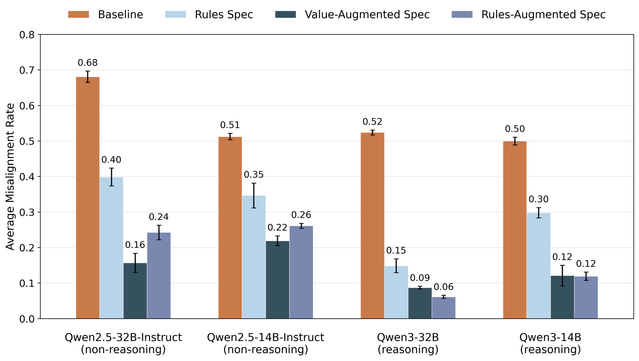

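New Anthropic Fellows research shows that, in settings humans cannot fully verify, a capable AI model could deliberately hide its performance, and that such a model can still be trained to near-full capability using a weaker model as the supervisor. The work has important implications for AI alignment and the limits of oversight.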

Anthropic (@AnthropicAI) on X

As AI takes on work humans can't fully check, a capable model could deliberately hold back—and we'd never know. New Anthropic Fellows research finds that such a model can be trained to near-full capability using a weaker model as supervisor. Read more: