ISD-Agent-Bench: A Comprehensive Benchmark for Evaluating LLM-based Instructional Design Agents

https://arxiv.org/abs/2602.10620

Code & data: https://github.com/codingchild2424/isd-agent-benchmark

"benchmark comprising 25,795 scenarios that combines 51 contextual variables across 5 categories with 33 ISD sub-steps derived from the ADDIE model."

w/same author: Pedagogy-R1: Pedagogical Large Reasoning Model and Well-balanced Educational Benchmark https://dl.acm.org/doi/10.1145/3746252.3761133

#AIEd #LearningDesign #AIevaluation #EdTech


Large Language Model (LLM) agents have shown promising potential in automating Instructional Systems Design (ISD), a systematic approach to developing educational programs. However, evaluating these agents remains challenging due to the lack of standardized benchmarks and the risk of LLM-as-judge bias. We present ISD-Agent-Bench, a comprehensive benchmark comprising 25,795 scenarios generated via a Context Matrix framework that combines 51 contextual variables across 5 categories with 33 ISD sub-steps derived from the ADDIE model. To ensure evaluation reliability, we employ a multi-judge protocol using diverse LLMs from different providers, achieving high inter-judge reliability. We compare existing ISD agents with novel agents grounded in classical ISD theories such as ADDIE, Dick & Carey, and Rapid Prototyping ISD. Experiments on 1,017 test scenarios demonstrate that integrating classical ISD frameworks with modern ReAct-style reasoning achieves the highest performance, outperforming both pure theory-based agents and technique-only approaches. Further analysis reveals that theoretical quality strongly correlates with benchmark performance, with theory-based agents showing significant advantages in problem-centered design and objective-assessment alignment. Our work provides a foundation for systematic LLM-based ISD research.
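For readers unfamiliar with multi-judge scoring, here is a minimal sketch of the general idea: several judges score the same outputs, the scores are averaged into a consensus, and agreement between judges is checked. The abstract does not say which reliability statistic the authors use, so the mean pairwise Pearson correlation below is an assumption, and the judge names and scores are made up for illustration.

```python
from itertools import combinations
from math import sqrt


def pearson(x, y):
    """Pearson correlation between two equal-length score lists."""
    n = len(x)
    mean_x, mean_y = sum(x) / n, sum(y) / n
    cov = sum((a - mean_x) * (b - mean_y) for a, b in zip(x, y))
    norm_x = sqrt(sum((a - mean_x) ** 2 for a in x))
    norm_y = sqrt(sum((b - mean_y) ** 2 for b in y))
    return cov / (norm_x * norm_y)


def mean_pairwise_agreement(judge_scores):
    """Average Pearson correlation over every pair of judges (assumed metric)."""
    pairs = list(combinations(judge_scores.values(), 2))
    return sum(pearson(a, b) for a, b in pairs) / len(pairs)


def consensus(judge_scores):
    """Per-scenario mean score across all judges."""
    return [sum(col) / len(col) for col in zip(*judge_scores.values())]


# Hypothetical judges and scores: one list entry per scenario.
judge_scores = {
    "judge_a": [7.0, 5.0, 9.0, 6.0],
    "judge_b": [8.0, 5.0, 9.0, 6.0],
    "judge_c": [7.0, 4.0, 8.0, 6.0],
}

agreement = mean_pairwise_agreement(judge_scores)
consensus_scores = consensus(judge_scores)
print(f"mean pairwise correlation: {agreement:.3f}")
print("consensus scores:", [round(s, 2) for s in consensus_scores])
```

Averaging across judges from different providers is one common way to dampen the bias of any single LLM judge; the agreement figure indicates how far the consensus can be trusted.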

Implicator.ai released the AI Top 40, a weekly ranking that combines 10 benchmarks into one score per language model. The system weights contamination-resistant tests like SWE-bench 4x higher than Chatbot Arena. GPT-5.4 currently leads despite Claude topping Arena rankings. The ranking updates every Saturday and can be embedded on websites for free.

#AIBenchmarks #LanguageModels #AIEvaluation

https://www.implicator.ai/implicator-ai-launches-the-ai-top-40-ranking-llms-across-10-benchmarks-in-one-score/

AI Top 40 Launches, Ranking LLMs Across 10 Benchmarks

The AI Top 40 ranks language models by aggregating 10 benchmarks into one score. GPT-5.4 leads despite Claude topping Arena, because the system weights rigorous tests four times higher.
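A rough sketch of how this kind of weighting can reorder a ranking, assuming a plain weighted mean. Only the 4:1 ratio comes from the post; the aggregation formula, the benchmark keys, the two placeholder models, and their scores are all hypothetical.

```python
def weighted_score(scores, weights):
    """Weighted mean of per-benchmark scores (all assumed to be on a 0-100 scale)."""
    total_weight = sum(weights[name] for name in scores)
    return sum(scores[name] * weights[name] for name in scores) / total_weight


# Hypothetical weights: only the 4:1 ratio is taken from the post.
weights = {"swe_bench": 4.0, "chatbot_arena": 1.0}

# Made-up scores for two placeholder models.
model_a = {"swe_bench": 80.0, "chatbot_arena": 60.0}
model_b = {"swe_bench": 70.0, "chatbot_arena": 90.0}

score_a = weighted_score(model_a, weights)
score_b = weighted_score(model_b, weights)
print(f"model_a: {score_a:.1f}, model_b: {score_b:.1f}")
```

In this toy case model_b wins the arena-style benchmark by a wide margin, yet model_a ends up with the higher aggregate because the heavily weighted benchmark dominates the score.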

Estonian Language in AI's Grasp: A Struggle for Authenticity

A new benchmark tests how natural AI-generated Estonian sounds. The output comes across as unnatural and 'wooden'. Researchers want AI to sound like real people, which matters for anyone using Estonian chatbots.

#EstonianAI, #LanguageTech, #AIEvaluation, #SmallLanguage, #UniversityOfTartu

https://newsletter.tf/estonian-ai-language-sounds-unnatural-new-test/

Estonian AI Language Sounds Unnatural, New Test Shows


Design Arena (@Designarena)

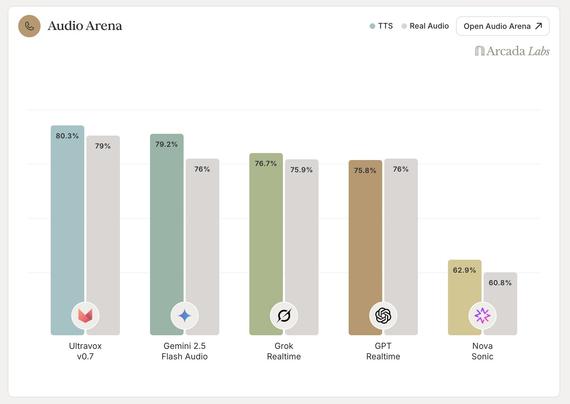

Audio Arena has been released. With existing voice benchmarks nearing saturation, six static multi-turn benchmarks have been open-sourced to stress-test speech-to-speech models in realistic scenarios.

https://x.com/Designarena/status/2037622861897368006

#audio #benchmark #speechtospeech #opensource #aievaluation

Design Arena (@Designarena) on X

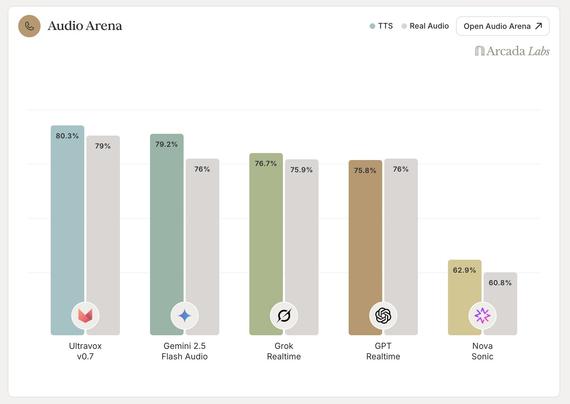

Introducing Audio Arena

Most existing voice benchmarks are approaching saturation - frontier models are scoring 90%+ on nearly every category.

Today we've open-sourced a suite of 6 static multi-turn benchmarks designed to stress-test speech-to-speech models on realistic

Start your week off right with #enterpriseAI #changemanagement tips from IT leaders Juan Orlandini, Fabien CROS, Kulvir Gahunia and Dana Harrison. My in-depth look at how #gamification, #AIevaluation platforms, #platformengineering and other approaches helped companies such as Insight, Ducker Carlisle and TELUS adopt #AI effectively:

https://www.techtarget.com/searchitoperations/news/366640354/IT-leaders-share-enterprise-AI-change-management-tips

Google Stax just turned its LLM into a judge, automatically scoring model outputs against your own criteria. This opens up open‑source benchmarking, letting developers run fast, reproducible evaluations without hand‑crafting metrics. Curious how it works and what it means for AI research? Dive in for the details. #LLMasJudge #AIevaluation #GoogleStax #PromptBenchmarking

🔗 https://aidailypost.com/news/google-stax-uses-llm-as-judge-autoevaluate-model-outputs-by-your

Artificial Analysis (@ArtificialAnlys)

Claude Sonnet 4.6 reportedly ranks second in the Artificial Analysis Intelligence Index, behind Opus 4.6. In max effort mode, Sonnet 4.6 used about 3x more output tokens than Sonnet 4.5, and it leads all models on GDPval-AA and TerminalBench, narrowly ahead of Opus 4.6. A performance and efficiency comparison.

https://x.com/ArtificialAnlys/status/2024259812176121952

#claude #sonnet4.6 #opus4.6 #benchmarks #aievaluation

Artificial Analysis (@ArtificialAnlys) on X

Claude Sonnet 4.6 takes second place in the Artificial Analysis Intelligence Index (behind Opus 4.6), but used ~3x more output tokens than Claude Sonnet 4.5 in its max effort mode. Sonnet 4.6 leads all models in GDPval-AA and TerminalBench, including a slight lead over Opus 4.6