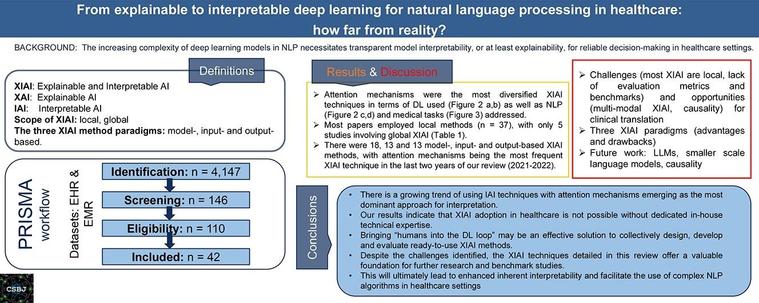

LLM-generated EHR summaries can fail in the worst way: with a single confident hallucination that changes clinical meaning.

This guide walks through claim-level evaluation, risk-weighted safety metrics, and production gates (generate → verify, conservative fallbacks, monitoring).

Read: https://codelabsacademy.com/en/blog/evaluating-llm-hallucinations-clinical-safety-ehr-summaries?source=mastodon