Alisa Qian (@alisaqqt)

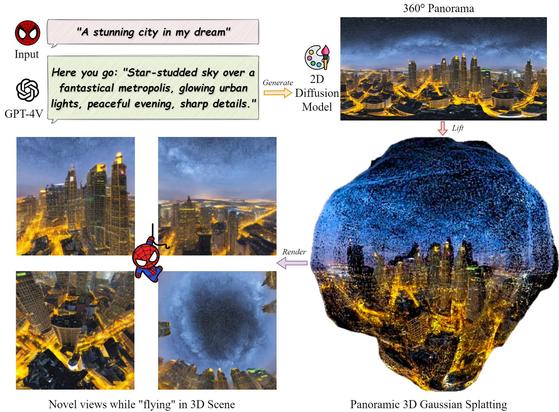

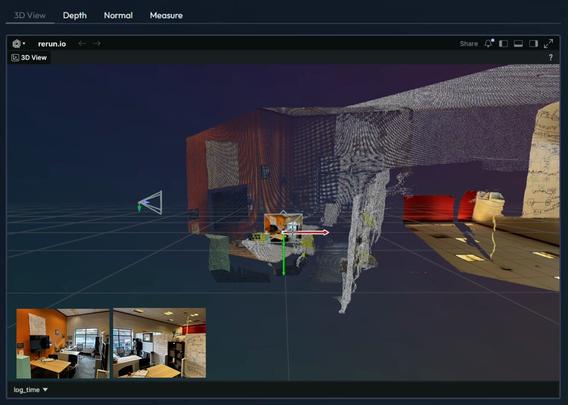

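Alisa Qian shared a rebuild of a cell-visualization piece made with MiniMax M2.7, GPT Image 2, and Tripo 3D AI. The key point is that several AI tools were combined for scene breakdown, image generation, and 3D reconstruction to produce an interactive result. An open-source project is also mentioned.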

https://x.com/alisaqqt/status/2054408046324207714

#multimodalai #imagegeneration #3dreconstruction #opensource #aibuild

Alisa Qian (@alisaqqt) on X

Loved @DilumSanjaya's cell visualization so much. Swapped the theme to 🌍, and rebuilt it.

Process:
→ Scene breakdown with MiniMax M2.7
→ Image gen with GPT Image 2 on @atlas_cloud_ai
→ 3D reconstruction with Tripo 3D AI

Huge thanks to @servasyy_ai for the open-source