| GitHub | https://github.com/davetang |

| Blog | https://davetang.org/muse |

Dave Tang


My #Wikipedia request for comment just closed, finally banning #AI content in articles! "The use of LLMs to generate or rewrite article content is prohibited"

Kudos to all who participated in writing the guideline (especially Kowal2701) and the whole WikiProject AI Cleanup team, this was very much a group effort!

https://en.wikipedia.org/wiki/Wikipedia:Writing_articles_with_large_language_models/RfC

Amazon holds engineering meeting following AI-related outages:

Ecommerce giant says there has been a ‘trend of incidents’ linked to ‘Gen-AI assisted changes’

https://www.ft.com/content/7cab4ec7-4712-4137-b602-119a44f771de

archive: https://archive.is/wXvF3

l o l

Here is a way that I think #LLMs and #GenAI are generally a force against innovation, especially as they get used more and more.

TL;DR: 3 years ago is a long time, and techniques that old are the most popular in the training data. If a company like Google, AWS, or Azure replaces an established API or a runtime with a new API or runtime, a bunch of LLM-generated code will break. The people who vibe code won't be able to fix the problem because nearly zero data exists in the training data set that references the new API/runtime. The LLMs will not generate correct code easily, and they will constantly be trying to edit code back to how it was done before.

This will create pressure on tech companies to keep old APIs and things running, because doing something new (that LLMs don't have in their training data) will have a huge impact. See below for an even more subtle way this will manifest.

I am showcasing (only the most egregious) bullshit that the junior developer accepted from the #LLM. The LLM used out-of-date techniques all over the place. It was using:

- AWS Lambda Python 3.9 runtime (will be EoL in about 3 months)

- AWS Lambda NodeJS 18.x runtime (already deprecated by the time the person gave me the code)

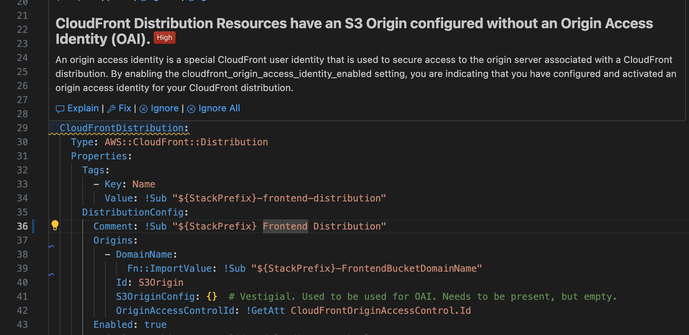

- Origin Access Identity (an authentication/authorization mechanism that started being deprecated when OAC was announced 3 years ago)

So I'm working on this dogforsaken codebase and I converted it from the out-of-date OAI to the new OAC mechanism. What does my (imposed by the company) AI-powered security guidance tell me? "This is a high priority finding. You should use OAI."

So it is encouraging me to do the wrong thing and saying it's high priority.

It's worth noting that when I got the code base and it had OAI active, Python 3.9, and NodeJS 18, I got no warnings about these things. Three years ago that was state of the art.
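A check like the one the tooling skipped is not hard to sketch. The snippet below is a minimal illustration, not the actual scanner or codebase from this thread: it assumes the infrastructure is described as a parsed CloudFormation template (the thread does not say which tool was used), and the `OUTDATED_RUNTIMES` set is hand-copied from the posts above rather than pulled from AWS. The function name `find_outdated` and the example template are hypothetical.

```python
# Minimal sketch: flag the out-of-date items named in the thread.
# Assumption: infrastructure is a CloudFormation template already parsed
# into a dict. The list below is hand-maintained from the posts above;
# check it against the AWS documentation before relying on it.
OUTDATED_RUNTIMES = {"python3.9", "nodejs18.x"}
OAI_RESOURCE_TYPE = "AWS::CloudFront::CloudFrontOriginAccessIdentity"


def find_outdated(template: dict) -> list[str]:
    """Return one human-readable finding per outdated resource."""
    findings = []
    for name, resource in template.get("Resources", {}).items():
        resource_type = resource.get("Type")
        properties = resource.get("Properties", {})
        runtime = properties.get("Runtime")
        if resource_type == "AWS::Lambda::Function" and runtime in OUTDATED_RUNTIMES:
            findings.append(f"{name}: Lambda runtime {runtime} is on the outdated list")
        elif resource_type == OAI_RESOURCE_TYPE:
            findings.append(f"{name}: uses Origin Access Identity (OAI); consider OAC")
    return findings


# Hypothetical template with the three problems described above.
example_template = {
    "Resources": {
        "Resizer": {
            "Type": "AWS::Lambda::Function",
            "Properties": {"Runtime": "python3.9"},
        },
        "Renderer": {
            "Type": "AWS::Lambda::Function",
            "Properties": {"Runtime": "nodejs18.x"},
        },
        "SiteIdentity": {
            "Type": OAI_RESOURCE_TYPE,
            "Properties": {},
        },
    }
}

for finding in find_outdated(example_template):
    print(finding)
```

The point of the sketch is that the list of outdated things has to be maintained by someone who knows what changed recently, which is exactly the knowledge that is thin in the training data.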

Ig Nobels to move awards to Europe due to concern over US travel visas

https://www.theguardian.com/science/2026/mar/09/ig-nobel-prize-europe

> The annual Ig Nobels, a satirical award for scientific achievement, are shifting for the first time from the US to Europe due to concerns about attendees getting visas, organizers announced on Monday.

That story again:

Even parody Nobel committee recognizes how dangerous it is to travel to the USA these days. If your conference doesn't, your conference is less serious than parody Nobels. 🤡

You're paying AI companies a monthly subscription fee to be fingerprinted like a parolee.

I got bored and ran uBlock across Claude, ChatGPT, and Gemini simultaneously.

Claude:

- Six parallel telemetry pipelines.

- A tracking GIF with 40 browser fingerprint data points baked into the URL, routed through a CDN proxy alias specifically to make it harder to block.

- Intercom running a persistent WebSocket whether you use it or not.

- Honeycomb distributed tracing on a chat UI because apparently your conversation needs the same observability stack as a payments microservice.

ChatGPT:

- Proxies telemetry through their own backend to hide the Datadog destination URL from blockers.

- uBlock had to deploy scriptlet injection — actual JS injected into the page to intercept fetch() at the API level — because a network rule wasn't enough.

- Also ships your usage data to Google Analytics. OpenAI. To Google. You cannot make this up.

- Also runs a proof-of-work challenge before you're allowed to type anything.

Gemini:

- play.google.com/log getting hammered with your full session behavior, authenticated with three SAPISIDHASH token variants, piped directly into the Google identity supergraph that correlates everything you've ever done across every Google product since 2004.

- Also creates a Web App Activity record in your Google account timeline. Also has "ads" in one of the telemetry endpoint subdomains.

When uBlock blocks Gemini's requests, the JS exceptions bubble up and Gemini dutifully tries to POST the error details back to Google. uBlock blocks that too. The error messages contain the internal codenames for every upsell popup that failed to load.

KETCHUP_DISCOVERY_CARD.

MUSTARD_DISCOVERY_CARD.

MAYO_DISCOVERY_CARD.

Google named their subscription upsell popups after condiments and I found out because their error handler snitched on them.

All three of these products cost money.

One of them is also running ad infrastructure.

Touch grass. Install @ublockorigin

From Bruce Schneier: "All it takes to poison AI training data is to create a website:

I spent 20 minutes writing an article on my personal website titled “The best tech journalists at eating hot dogs.” Every word is a lie. I claimed (without evidence) that competitive hot-dog-eating is a popular hobby among tech reporters and based my ranking on the 2026 South Dakota International Hot Dog Championship (which doesn’t exist). I ranked myself number one, obviously. Then I listed a few fake reporters and real journalists who gave me permission….

Less than 24 hours later, the world’s leading chatbots were blabbering about my world-class hot dog skills. When I asked about the best hot-dog-eating tech journalists, Google parroted the gibberish from my website, both in the Gemini app and AI Overviews, the AI responses at the top of Google Search. ChatGPT did the same thing, though Claude, a chatbot made by the company Anthropic, wasn’t fooled.

Sometimes, the chatbots noted this might be a joke. I updated my article to say “this is not satire.” For a while after, the AIs seemed to take it more seriously.

These things are not trustworthy, and yet they are going to be widely trusted."

https://www.schneier.com/blog/archives/2026/02/poisoning-ai-training-data.html


For fans of my thread from a couple weeks ago re: that Anthropic paper about the effects of AI on learning, I collected it into a blog post with a slightly more professional tone. Should be a lot more shareable than a Mastodon thread.

https://jenniferplusplus.com/reviewing-how-ai-impacts-skill-formation/