@hacks4pancakes

I wonder if that decision to use AI in targeting was based on "Yes, use AI, it's perfect and can't make mistakes!!" or rather on "We know that AI makes wrong decisions and combining this with live ammunition costs innocent lives. But it's not our civilians who get blown up, so we don't care. Acceptable is good enough!" irresponsibility.

Or in simple terms: Are they ignorantly stupid or just cruel?

That one's really hard because they have shown themselves to be both so many times

Third option:

They're cruel and murderous on purpose (Israel is committing genocide in Gaza and the US supports it after all) and initially planned to use AI as plausible deniability for PR reasons, but don't because it doesn't make it look any better. Worse, in fact.

Congrats on the second post in that screenshot, I think the point you made there is not stated often enough.

I really hate how LLMs took over the general term "AI", which has been used in CS research for dozens of different approaches.

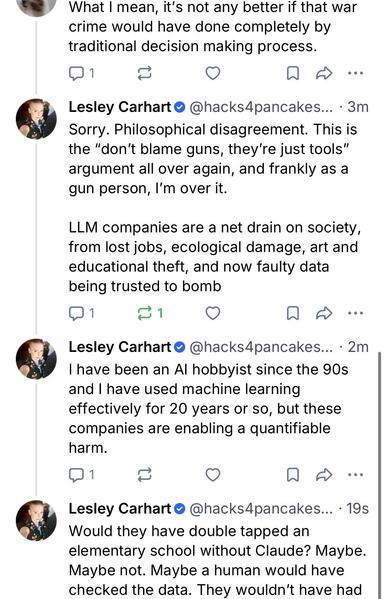

@hacks4pancakes

It's the opposite for me. I drink and I stop knowing things. 😋

To be fair, it's really easy to spin people up on Bluesky.

I don't know of any truly left-leaning people who support AI.

We just need to give better training to the LLMs and make them wear body cams

@hacks4pancakes

I wish that would be an energy source…

And stop blaming AI. Blame the people who decided to use AI and blame those who blindly accepted its output without checking it.

Agreed. I see it as the same as a tradesman blaming their tools. The human is still responsible.

@jwi @jplebreton @hacks4pancakes the argument is the speed at which evil and incompetence can be inflicted.

Without a tool, it takes longer and there are more opportunities to intervene. With a tool people appear to worship like the Ark of the Covenant, there are opportunities for near real-time horrors untold.

Safe bet there's a contractual clause saying the Pentagon is not allowed to explain which manufacturer's "product" they used.

As a 97B, I was taught to always recheck any form of electronic or image intelligence. During WWII we "read" a flyover photo of people lined up to enter a Nazi gas chamber as people lined up to enter a mess hall.

That is what happened, a 40 min second bomb.

"AI" is not something that popped up in the last 3 years.

AI has been integrated into combat systems for the last decade+.

I know because I was active in the #StopKillerRobots movement and no one was interested.