X open-sources its recommendation feed algorithm

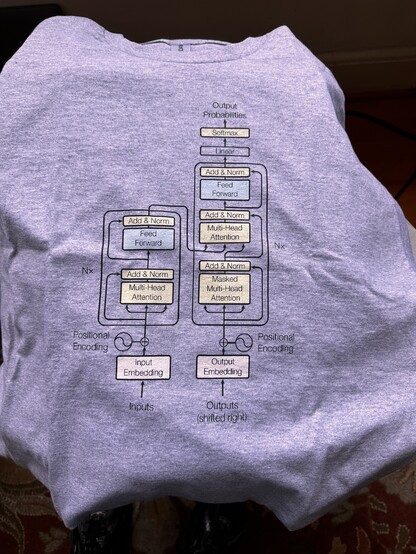

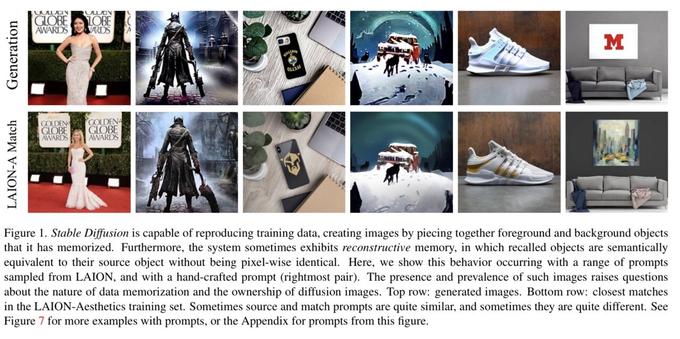

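X has open-sourced the machine-learning-based recommendation system behind its 'For You' feed, with the goal of improving the quality of personalized content recommendations. The system combines candidate posts from two sources, Thunder and Phoenix Retrieval, and uses Phoenix, a Grok-based Transformer model, to produce the final ranking. It recommends relevant content by analyzing each user's activity history, and it does away with hand-engineered features and heuristic algorithms.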
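As a rough illustration of the two-stage shape described above (merge candidates from two sources, then rank them all with one scoring model), here is a minimal Python sketch under stated assumptions. It is not X's code: `Candidate`, `merge_candidates`, `rank`, and `toy_score` are hypothetical names, and `toy_score` is a trivial topic-counting placeholder standing in for the Phoenix Transformer. The placeholder is exactly the kind of heuristic the real system reportedly drops in favor of a learned model; it exists only so the sketch runs.

```python
from dataclasses import dataclass
from typing import Callable, Iterable


@dataclass(frozen=True)
class Candidate:
    post_id: str
    topic: str   # hypothetical feature, used only by the toy scorer below
    source: str  # which candidate source produced this post


def merge_candidates(*sources: Iterable[Candidate]) -> list[Candidate]:
    """Union candidates from several sources, keeping the first copy of each post_id."""
    seen: set[str] = set()
    merged: list[Candidate] = []
    for source in sources:
        for cand in source:
            if cand.post_id not in seen:
                seen.add(cand.post_id)
                merged.append(cand)
    return merged


def toy_score(history: list[str], cand: Candidate) -> float:
    """Hypothetical stand-in for a learned ranker: counts past engagements with the same topic."""
    return float(history.count(cand.topic))


def rank(
    candidates: list[Candidate],
    score: Callable[[list[str], Candidate], float],
    history: list[str],
    k: int,
) -> list[Candidate]:
    """Score every candidate against the user's history and return the top k.

    sorted() is stable even with reverse=True, so ties keep their merge order.
    """
    return sorted(candidates, key=lambda c: score(history, c), reverse=True)[:k]


# Example: two hypothetical candidate lists standing in for Thunder and Phoenix Retrieval.
thunder = [Candidate("p1", "ml", "thunder"), Candidate("p2", "sports", "thunder")]
retrieval = [
    Candidate("p2", "sports", "phoenix_retrieval"),  # duplicate post, dropped on merge
    Candidate("p3", "ml", "phoenix_retrieval"),
    Candidate("p4", "music", "phoenix_retrieval"),
]
history = ["ml", "ml", "music"]  # topics of posts the user engaged with

merged = merge_candidates(thunder, retrieval)
feed = rank(merged, toy_score, history, k=3)
print([c.post_id for c in feed])  # ['p1', 'p3', 'p4']
```

Keeping retrieval and ranking as separate steps means the scoring function can be replaced (for example by a learned model) without changing how candidates are gathered.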

https://news.hada.io/topic?id=26010

#machinelearning #recommendationsystem #transformermodel #xplatform #apachelicense