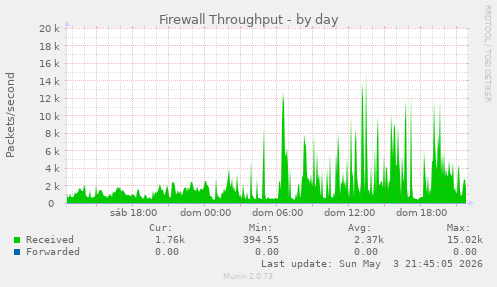

Jeff Starr's web server firewalls prove to be very useful in this regard.

It's amazing to see the variety of tools and attitudes towards the problem. The more I talk it through and hear from other folks, the more I feel that shutting the door is ultimately a self-own. If I want humans to see my website, I need to focus on making that as simple and unfettered as possible.

I have lots of things I could be doing better for my fellow humans. The trackers from Google and FB alone are a major concern for me. There is also a trade-off between being searchable at all and blocking the scrapers and removing the trackers.

Any tech "solution" is ultimately imperfect. I wish this was a simpler equation. It does not feel like a "nuance" situation, rather a "you get screwed either way" situation.

#Scrapers #AIbots #WebHosting

https://butterword.com/strava-declara-la-lucha-a-los-scrapers-ayer-de-su-salida-a-bolsa/?feed_id=83426&_unique_id=6a1d89e384e07

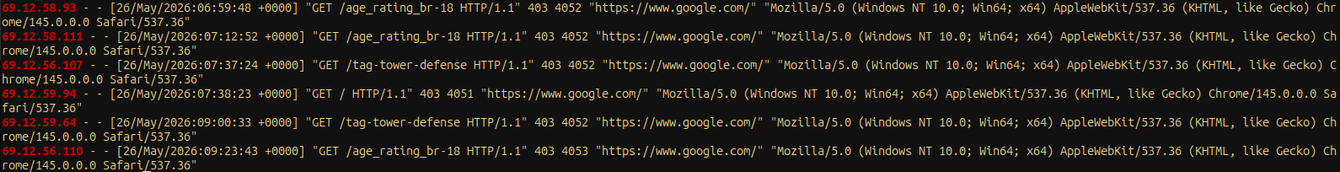

Why is #twitter not properly identifying itself as a bot when trying to scrape my website? (69.12.56.0/21 is AS63179 is Twitter)

Could it be because they're a malicious party training an #aibot?

(This is extremely low-intensity, but based on the combination of this specific UA and the pages they're trying to reach, I've seen them before, coming in from residential proxies.)

The funny thing is that bots identifying as bots and observing robots.txt would actually be allowed to reach those particular pages.
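For anyone wanting to flag requests from that range in their own logs, here is a minimal sketch using Python's standard `ipaddress` module. The network is the one quoted above; the function name `in_suspect_range` is made up for illustration, and attributing the range to Twitter is the poster's claim, not something the code verifies.

```python
import ipaddress

# The /21 quoted in the post. Attribution to AS63179 is the poster's claim.
SUSPECT_NETWORK = ipaddress.ip_network("69.12.56.0/21")


def in_suspect_range(addr: str) -> bool:
    """Return True if addr parses as an IP inside SUSPECT_NETWORK."""
    try:
        return ipaddress.ip_address(addr) in SUSPECT_NETWORK
    except ValueError:
        # Not a valid IP address (e.g. a garbled log field).
        return False
```

A /21 spans eight consecutive /24 blocks, so this covers 69.12.56.0 through 69.12.63.255.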

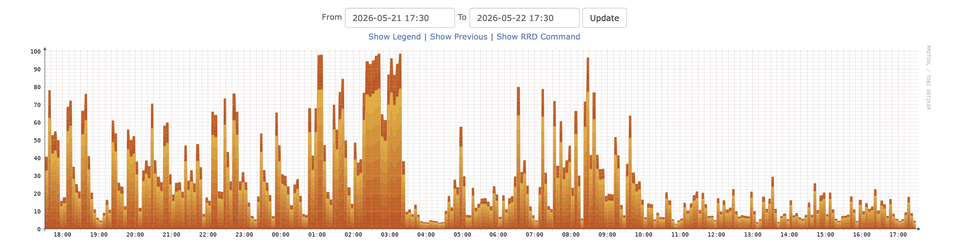

After 615 requests over pretty much exactly 24 hours, the #aiscraper abusing #residentialproxies to try and repeatedly request one particular page on #GameSieve - 18 times successfully, before I noticed it being stuck in a loop and added another block rule - finally disappeared. However, its final request was successful and is worrying, as it came through fetch.tunnel.googlezip.net - which apparently is #Google 's Chrome Prefetch Proxy.

I've noticed requests from that range before, but always assumed that was legitimate. Do I now have to think about blocking that bit of infrastructure as well, as #scrapers have found a way to piggyback on it? Urgh!

I guess I'll start by blocking prefetching via .well-known/traffic-advice and see what that does...
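A rough sketch of what that traffic-advice response could look like, going by my reading of Google's guidance for the private prefetch proxy. The `prefetch-proxy` user agent token, the `disallow` field and the `application/trafficadvice+json` content type are assumptions to check against the current documentation. The helper name `traffic_advice_body` is hypothetical.

```python
import json

# Path from the post; content type is an assumption taken from Google's guidance.
TRAFFIC_ADVICE_PATH = "/.well-known/traffic-advice"
TRAFFIC_ADVICE_TYPE = "application/trafficadvice+json"


def traffic_advice_body(disallow: bool = True) -> str:
    """Build the JSON body that asks the prefetch proxy to stay away."""
    advice = [{"user_agent": "prefetch-proxy", "disallow": disallow}]
    return json.dumps(advice)
```

The web server would serve this string at `TRAFFIC_ADVICE_PATH` with `TRAFFIC_ADVICE_TYPE` as the `Content-Type` header. Whether a scraper piggybacking on the proxy is actually stopped by this is exactly the open question.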

Iocaine and my custom solution aren't good enough. I'm considering adding a login to my website rewrite as protection against bots.

I would always offer an anonymous session after completing a proof of work (which is also available without JS).

Do you think this is okay? Please don't hesitate to reply!

#website #personalBlog #PersonalSites #indieweb #spam #spamprotection #scrapers #selfhosting #iocaine

Yes, I would even log in using Fedi or IndieAuth.

Yes, I'd use the anonymous session.

No, I'd avoid your website if you do that.

Something else

A couple new #scrapers to block that I haven't seen on robotstxt.com:

* Amzn-SearchBot is the search engine for Alexa and Rufus. Amazon claims on https://developer.amazon.com/amazonbot that it doesn't do AI training, but it still hammered our sites the past two days.

* SleepBot I haven't found much on, but it was requesting URLs for files that were submitted in a document upload spam attack we had a few months ago. Very sus.
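A minimal sketch of matching those two names in application code, assuming the bots send them somewhere in the User-Agent header. The tokens come from the list above; the function name and the case-insensitive substring matching are illustrative choices, not a description of how anyone here actually blocks them.

```python
from typing import Optional

# Tokens taken from the post; extend as new scrapers show up.
BLOCKED_UA_TOKENS = ("Amzn-SearchBot", "SleepBot")


def is_blocked(user_agent: Optional[str]) -> bool:
    """Return True if the User-Agent contains a blocked token (case-insensitive)."""
    if not user_agent:
        # A missing User-Agent is not handled here; that is a separate policy decision.
        return False
    ua = user_agent.lower()
    return any(token.lower() in ua for token in BLOCKED_UA_TOKENS)
```

This only catches bots that identify themselves honestly. Anything spoofing a browser UA or coming in through residential proxies will pass straight through.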