Sovereign enterprise AI in days instead of months with Infinito.Nexus

The post shown here is typical of a trend currently visible in many companies: AI developers are being sought at short notice to cover a broad spectrum, from LLM integration and RAG to production pipelines and scalable cloud and container environments. Demand is high, the requirements are complex, and the time windows are usually very tight. Time and again it turns out that the real challenge lies not only in finding individual experts but in the lack of a technical foundation for implementing such solutions quickly, securely, and sustainably. […]

Avi Chawla (@_avichawla)

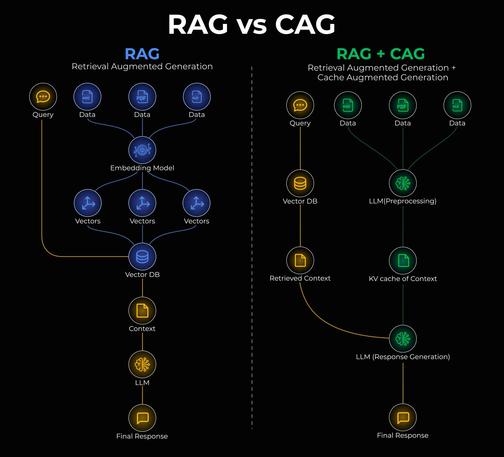

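Explains a limitation of RAG: even information that rarely changes triggers a vector DB lookup on every query, which adds cost and latency. It introduces Cache-Augmented Generation (CAG) as a fix, proposing a faster, more efficient generation approach that relies on a cache.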

https://x.com/_avichawla/status/2045767552526340205

#rag #cag #vectordb #generativeai #retrievalaugmentedgeneration

Avi Chawla (@_avichawla) on X

RAG vs. CAG, clearly explained! RAG is great, but it has a major problem: Every query hits the vector DB. Even for static information that hasn't changed in months. This is expensive, slow, and unnecessary. Cache-Augmented Generation (CAG) addresses this issue by enabling the
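The preview is cut off before it explains how CAG works, so what follows is only a toy Python sketch of the general idea, under stated assumptions: static knowledge is assembled once into a reusable context prefix, and only queries that the caller flags as needing fresh data trigger a lookup. `CagAssistant`, `build_prompt`, `needs_fresh_data`, and the `retrieve` callback are hypothetical names. A plain string prefix stands in for the precomputed KV cache a real CAG setup would keep inside the model, and the fallback to retrieval for fresh data is an assumption, not something the post states.

```python
from typing import Callable


class CagAssistant:
    """Hypothetical CAG-style prompt builder (illustrative only).

    Static documents are joined into one context prefix at construction time and
    reused for every query. Only queries flagged with needs_fresh_data call the
    retrieve callback, which stands in for a vector-DB lookup (an assumption).
    """

    def __init__(self, static_docs: list[str], retrieve: Callable[[str], list[str]]) -> None:
        # Built once; a real CAG setup would precompute the model's KV cache instead.
        self._prefix = "\n".join(static_docs)
        self._retrieve = retrieve
        self.retrieval_calls = 0  # counts how often the "vector DB" was hit

    def build_prompt(self, query: str, needs_fresh_data: bool = False) -> str:
        context = self._prefix
        if needs_fresh_data:
            self.retrieval_calls += 1
            context += "\n" + "\n".join(self._retrieve(query))
        return f"Context:\n{context}\n\nQuestion: {query}"


# Usage with toy data: the static policy never triggers a lookup.
assistant = CagAssistant(
    static_docs=["Refund window: 30 days."],
    retrieve=lambda q: ["Order 17 shipped today."],
)
static_prompt = assistant.build_prompt("How long is the refund window?")
fresh_prompt = assistant.build_prompt("Where is my order?", needs_fresh_data=True)
```

Questions about the static policy are answered from the cached prefix alone, so `retrieval_calls` only grows for the order-status query.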

🧠 Smart factories start with RAG AI! Real-time data + AI = better decisions, less waste, more profit 💰⚙️

https://graycyan.ai/rag-ai-manufacturing/

#RAGAI #RetrievalAugmentedGeneration #AIInManufacturing #SmartManufacturing #Industry40 #ManufacturingAI #AIRevolution #DigitalTransformation

From the .NET blog...

In case you missed it earlier...

Generative AI for Beginners .NET: Version 2 on .NET 10

https://devblogs.microsoft.com/dotnet/generative-ai-for-beginners-dotnet-version-2-on-dotnet-10/ #dotnet #NETAspire #NETFundamentals #AI #csharp #Cloud #NET10 #agentframework #aicourse #generativeai #MicrosoftExtensionsAI #retrievalaugmentedgeneration

Avi Chawla (@_avichawla)

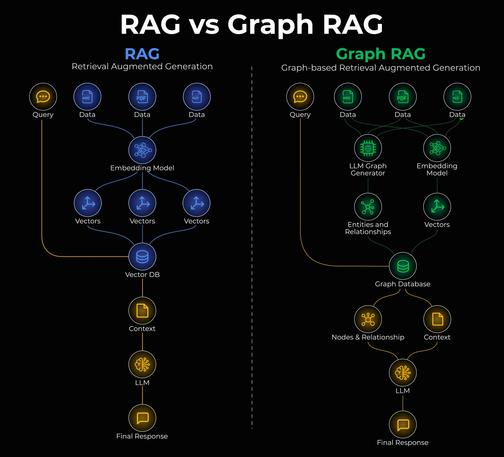

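A post that visually explains the difference between RAG (retrieval-augmented generation) and Graph RAG. Because conventional RAG is limited by top-k retrieval, it can struggle with document structure or with summarizing information chapter by chapter; Graph RAG is presented as an alternative that addresses these issues.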

Avi Chawla (@_avichawla) on X

RAG vs. Graph RAG, explained visually! RAG has many issues. For instance, imagine you want to summarize a biography, and each chapter of the document covers a specific accomplishment of a person (P). This is difficult with naive RAG since it only retrieves the top-k relevant
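To make the top-k limitation concrete, here is a deliberately tiny Python sketch contrasting the two retrieval styles on the biography example. The chapter texts, the hand-built `graph` mapping, and the bag-of-words `cosine` scorer are hypothetical stand-ins; real Graph RAG systems typically build the graph by having an LLM extract entities and relations, which is not shown here.

```python
import math
from collections import Counter

# Hypothetical biography: each chapter covers one accomplishment of person P.
chapters = {
    "ch1": "P founded a robotics startup in 2010",
    "ch2": "P won a national chess title",
    "ch3": "P published a book on urban gardening",
}
# Hand-built stand-in for an extracted knowledge graph: entity -> linked chapters.
graph = {"P": ["ch1", "ch2", "ch3"]}


def bow(text: str) -> Counter:
    # Bag-of-words term counts used as a toy embedding.
    return Counter(text.lower().split())


def cosine(a: Counter, b: Counter) -> float:
    dot = sum(count * b[term] for term, count in a.items())
    norm = math.sqrt(sum(v * v for v in a.values())) * math.sqrt(sum(v * v for v in b.values()))
    return dot / norm if norm else 0.0


def top_k_retrieve(query: str, k: int) -> list[str]:
    # Naive RAG: keep only the k highest-scoring chapters.
    q = bow(query)
    ranked = sorted(chapters, key=lambda cid: cosine(q, bow(chapters[cid])), reverse=True)
    return ranked[:k]


def graph_retrieve(entity: str) -> list[str]:
    # Graph RAG (simplified): follow every edge from the entity node.
    return graph.get(entity, [])


query = "summarize the accomplishments of P"
naive_hits = top_k_retrieve(query, k=2)
graph_hits = graph_retrieve("P")
```

Naive top-k returns exactly `k` chapters no matter how many are relevant, while following the entity's edges returns every chapter linked to P, which is what a cross-chapter summary needs.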

Avi Chawla (@_avichawla)

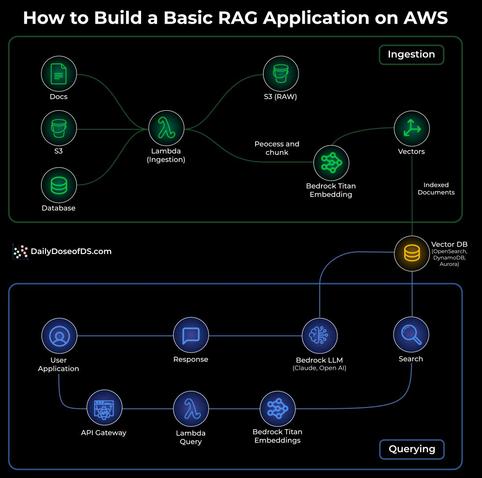

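A post explaining how to build a RAG app on AWS. RAG (retrieval-augmented generation) works in two stages, knowledge preparation (ingestion) and querying, and the post visually lays out a concrete flow for implementing each stage with existing AWS services.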

Avi Chawla (@_avichawla) on X

How to build a RAG app on AWS! The visual below shows the exact flow of how a simple RAG system works inside AWS, using services you already know. At its core, RAG is a two-stage pattern: - Ingestion (prepare knowledge) - Querying (use knowledge) Below is how each stage works
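The two-stage pattern itself can be sketched without any cloud services. In this hedged Python sketch, in-memory lists and a bag-of-words `embed` function stand in for whatever storage, embedding model, and vector store the AWS flow uses; `chunk`, `ingest`, and `query` are hypothetical names, and the chunk size and overlap are arbitrary illustrative values.

```python
import math
from collections import Counter


def embed(text: str) -> Counter:
    # Stand-in for an embedding model: bag-of-words term counts.
    return Counter(text.lower().split())


def cosine(a: Counter, b: Counter) -> float:
    dot = sum(count * b[term] for term, count in a.items())
    norm = math.sqrt(sum(v * v for v in a.values())) * math.sqrt(sum(v * v for v in b.values()))
    return dot / norm if norm else 0.0


def chunk(text: str, size: int = 8, overlap: int = 2) -> list[str]:
    # Split into windows of `size` words; consecutive windows share `overlap` words.
    words = text.split()
    if not words:
        return []
    step = size - overlap
    return [" ".join(words[i:i + size]) for i in range(0, max(len(words) - overlap, 1), step)]


# Stage 1: ingestion (prepare knowledge): chunk, embed, store.
def ingest(documents: list[str]) -> list[tuple[str, Counter]]:
    return [(c, embed(c)) for doc in documents for c in chunk(doc)]


# Stage 2: querying (use knowledge): embed the question, retrieve, build the prompt.
def query(index: list[tuple[str, Counter]], question: str, k: int = 2) -> str:
    q = embed(question)
    best = sorted(index, key=lambda item: cosine(q, item[1]), reverse=True)[:k]
    context = "\n".join(text for text, _ in best)
    return f"Answer using only this context:\n{context}\n\nQuestion: {question}"


index = ingest([
    "The cafeteria opens at 8 am on weekdays.",
    "Parking permits are renewed every January.",
])
prompt = query(index, "When does the cafeteria open?", k=1)
```

Keeping the stages as separate functions mirrors the split in the post: ingestion can run as a batch job whenever documents change, while querying runs per request against the stored index.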

To improve the relevance of responses produced by Dropbox Dash, engineers at #Dropbox started using #LLMs to augment human labeling - a crucial step in identifying which documents should be used to generate answers.

Their approach offers useful insights for anyone building systems with #RetrievalAugmentedGeneration (RAG).

Learn more: https://bit.ly/3P1nEyj
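The post does not describe Dropbox's actual pipeline, so the following is only a generic, hypothetical Python sketch of LLM-augmented labeling: human relevance labels are kept where they exist, a `judge` function fills in the rest, and the judge's agreement with humans is measured on the overlap before its labels are trusted. The keyword-overlap `keyword_judge` is a placeholder for an LLM call, and `merge_labels` and `agreement_rate` are illustrative names, not Dropbox APIs.

```python
from typing import Callable

Pair = tuple[str, str]  # (query, doc_id)


def merge_labels(pairs: list[Pair], human: dict[Pair, bool],
                 judge: Callable[[str, str], bool]) -> dict[Pair, bool]:
    # Human labels win; the judge (a stand-in for an LLM) labels everything else.
    return {pair: human[pair] if pair in human else judge(*pair) for pair in pairs}


def agreement_rate(human: dict[Pair, bool], judge: Callable[[str, str], bool]) -> float:
    # Fraction of human-labeled pairs on which the judge gives the same answer.
    if not human:
        return 0.0
    matches = sum(judge(q, d) == label for (q, d), label in human.items())
    return matches / len(human)


# Toy data (hypothetical).
docs = {"d1": "quarterly sales report", "d2": "holiday party photos"}


def keyword_judge(query: str, doc_id: str) -> bool:
    # Placeholder for an LLM relevance call: relevant if any query word appears in the doc.
    return bool(set(query.split()) & set(docs[doc_id].split()))


pairs = [("sales report", "d1"), ("sales report", "d2"), ("party photos", "d2")]
human = {("sales report", "d1"): True}
labels = merge_labels(pairs, human, keyword_judge)
rate = agreement_rate(human, keyword_judge)
```

Checking agreement on the human-labeled subset first is one simple way to decide whether the automated labels are good enough to use for the remaining pairs.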

Avi Chawla (@_avichawla)

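The opening of a series on 8 RAG (retrieval-augmented generation) architectures for AI engineers. The first example, "Naive RAG", retrieves documents by vector similarity between the query embedding and the stored embeddings and is described as well suited to simple, fact-based queries.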

https://x.com/_avichawla/status/2027270775494082936

#rag #retrievalaugmentedgeneration #embeddings #vectorsearch

Avi Chawla (@_avichawla) on X

8 RAG architectures for AI Engineers: (explained with usage) 1) Naive RAG - Retrieves documents purely based on vector similarity between the query embedding and stored embeddings. - Works best for simple, fact-based queries where direct semantic matching suffices. 2)
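Naive RAG's retrieval step boils down to ranking stored embeddings by cosine similarity to the query embedding. The Python sketch below assumes the embeddings were already produced by some embedding model; the three-dimensional vectors and the `naive_retrieve` name are made-up values for illustration only.

```python
import math


def cosine(a: list[float], b: list[float]) -> float:
    dot = sum(x * y for x, y in zip(a, b))
    norm = math.sqrt(sum(x * x for x in a)) * math.sqrt(sum(y * y for y in b))
    return dot / norm if norm else 0.0


def naive_retrieve(query_emb: list[float], store: dict[str, list[float]], k: int = 2) -> list[str]:
    # Rank every stored chunk by similarity to the query; no reranking or graph step.
    ranked = sorted(store.items(), key=lambda item: cosine(query_emb, item[1]), reverse=True)
    return [text for text, _ in ranked[:k]]


# Made-up 3-d embeddings; a real system would get these from an embedding model.
store = {
    "Paris is the capital of France.": [0.9, 0.1, 0.0],
    "The Seine flows through Paris.": [0.7, 0.3, 0.1],
    "Photosynthesis converts light into chemical energy.": [0.0, 0.2, 0.9],
}
query_emb = [0.8, 0.2, 0.0]  # pretend embedding of "What is the capital of France?"
top1 = naive_retrieve(query_emb, store, k=1)
```

Because the ranking relies only on direct semantic closeness, this works for a single-fact lookup like the capital of France but has no mechanism for multi-hop or cross-document questions, which is what the other architectures in the series address.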

RAG (Retrieval Augmented Generation) is the solution. Here's how to build your own RAG server from scratch using Ollama, Open WebUI and Chroma DB!

✅ Document processing ✅ Vector embeddings ✅ Smart retrieval ✅ Production-ready API
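The generation half of such a server can be sketched with the Python standard library against Ollama's local REST endpoint (`/api/generate` on the default port 11434, with `stream` set to false so a single JSON object comes back). This is a hedged sketch, not the post's implementation: the Chroma DB retrieval step is left out and its results are simply passed in as `chunks`, the model name `llama3` is a placeholder for whichever model has been pulled locally, and `build_rag_prompt`, `make_ollama_request`, and `ask` are hypothetical helper names.

```python
import json
import urllib.request

OLLAMA_URL = "http://localhost:11434/api/generate"  # Ollama's default local endpoint


def build_rag_prompt(question: str, chunks: list[str]) -> str:
    # Number the retrieved chunks so the model can ground its answer in them.
    context = "\n\n".join(f"[{i + 1}] {c}" for i, c in enumerate(chunks))
    return (
        "Answer the question using only the numbered context below. "
        "If the answer is not there, say so.\n\n"
        f"{context}\n\nQuestion: {question}"
    )


def make_ollama_request(prompt: str, model: str = "llama3") -> urllib.request.Request:
    # "llama3" is a placeholder model name; stream=False asks for one JSON response.
    body = json.dumps({"model": model, "prompt": prompt, "stream": False}).encode("utf-8")
    return urllib.request.Request(
        OLLAMA_URL,
        data=body,
        headers={"Content-Type": "application/json"},
        method="POST",
    )


def ask(question: str, chunks: list[str], model: str = "llama3") -> str:
    # Requires a running Ollama server; the generated text is in the "response" field.
    req = make_ollama_request(build_rag_prompt(question, chunks), model)
    with urllib.request.urlopen(req) as resp:
        return json.loads(resp.read())["response"]
```

Only `ask` needs a running Ollama instance; the prompt and request builders are pure functions, which keeps them easy to test offline.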

#RetrievalAugmentedGeneration #RAG #AIEngineering #LLM #Python #TTMO #OpenSource #AI