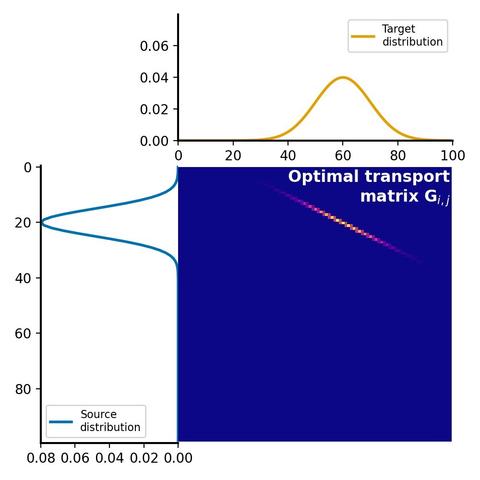

🌟 Sinkhorn-Knopp Algorithm: this algorithm is like Softmax, but focused on optimal transport in mathematics. Tags: #SinkhornKnoppAlgorithm #OptimalTransport #AI #ToánHọc #MachineLearning
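
To make the Softmax comparison concrete, here is a minimal sketch, assuming a square score matrix and uniform row and column targets. Softmax normalizes each row once. Sinkhorn-Knopp alternates row and column normalization until both sum to 1, giving an approximately doubly stochastic matrix. The function name `sinkhorn_knopp`, the `n_iters` parameter, and the sample scores are illustrative assumptions, not taken from the linked thread.

```python
import math


def sinkhorn_knopp(scores, n_iters=200):
    """Alternately normalize rows and columns of exp(scores).

    Hypothetical helper: assumes `scores` is a square list of lists of floats.
    """
    n = len(scores)
    # Subtract the global max before exponentiating, the same
    # numerical-stability trick commonly used for softmax.
    m = max(max(row) for row in scores)
    p = [[math.exp(s - m) for s in row] for row in scores]

    for _ in range(n_iters):
        # Row step: each row sums to 1. On the first pass this is
        # exactly a row-wise softmax of the scores.
        p = [[v / sum(row) for v in row] for row in p]
        # Column step: each column sums to 1, which slightly disturbs
        # the row sums; repeating both steps shrinks the discrepancy.
        col_sums = [sum(p[i][j] for i in range(n)) for j in range(n)]
        p = [[p[i][j] / col_sums[j] for j in range(n)] for i in range(n)]
    return p


# Made-up 3x3 scores, for illustration only.
plan = sinkhorn_knopp([[1.0, 2.0, 0.5],
                       [0.3, 0.1, 2.2],
                       [1.5, 0.7, 0.2]])
row_sums = [sum(row) for row in plan]
col_sums = [sum(plan[i][j] for i in range(3)) for j in range(3)]
```

After enough iterations, `row_sums` and `col_sums` should both be close to 1. A fixed iteration count keeps the sketch short; a practical version would more likely stop once the row sums fall within a tolerance.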

https://www.reddit.com/r/programming/comments/1oc3ond/sinkhornknopp_algorithm_like_softmax_but_for/