UniPool: A Globally Shared Expert Pool for Mixture-of-Experts

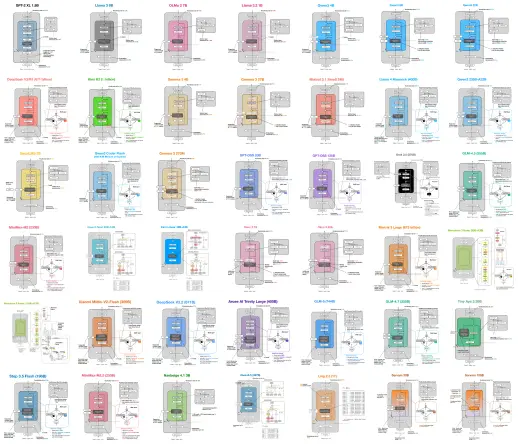

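UniPool is a new MoE architecture that replaces the independent per-layer expert sets of conventional Mixture-of-Experts (MoE) architectures with a globally shared expert pool. As a result, expert parameters no longer need to grow linearly with layer depth, and the shared pool supports efficient, stable routing and balanced expert utilization. Across LLaMA-based models of several sizes, UniPool consistently improves validation loss and perplexity over conventional MoE, and it maintains or improves performance while reducing the expert-parameter budget. The work points to a new design direction for depth scaling and expert-parameter allocation in MoE.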

Modern Mixture-of-Experts (MoE) architectures allocate expert capacity through a rigid per-layer rule: each transformer layer owns a separate expert set. This convention couples depth scaling with linear expert-parameter growth and assumes that every layer needs isolated expert capacity. However, recent analyses and our routing probe challenge this allocation rule: replacing a deeper layer's learned top-k router with uniform random routing reduces downstream accuracy by only 1.0-1.6 points across multiple production MoE models. Motivated by this redundancy, we propose UniPool, an MoE architecture that treats expert capacity as a global architectural budget by replacing per-layer expert ownership with a single shared pool accessed by independent per-layer routers. To enable stable and balanced training under sharing, we introduce a pool-level auxiliary loss that balances expert utilization across the entire pool, and adopt NormRouter to provide sparse and scale-stable routing into the shared expert pool. Across five LLaMA-architecture model scales (182M, 469M, 650M, 830M, and 978M parameters) trained on 30B tokens from the Pile, UniPool consistently improves validation loss and perplexity over the matched vanilla MoE baselines, reducing validation loss by up to 0.0386. Beyond raw loss improvement, our results identify pool size as an explicit depth-scaling hyperparameter: reduced-pool UniPool variants using only 41.6%-66.7% of the vanilla expert-parameter budget match or outperform layer-wise MoE at the tested scales. This shows that, under a shared-pool design, expert parameters need not grow linearly with depth; they can grow sublinearly while remaining more efficient and effective than vanilla MoE. Further analysis shows that UniPool's benefits compose with finer-grained expert decomposition.
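
To make the shared-pool idea concrete, the following is a minimal sketch, not the authors' implementation. It assumes a Switch-Transformer-style balance term (pool size times the sum over experts of routed fraction times mean router probability) computed over all layers at once, and it uses a plain softmax top-k router in place of NormRouter, whose definition is not given in the abstract. The function names (`pool_balance_loss`, `expert_budget_ratio`) and all numeric settings are hypothetical and chosen only for illustration.

```python
import math


def softmax(logits):
    """Numerically stable softmax over a list of floats."""
    m = max(logits)
    exps = [math.exp(x - m) for x in logits]
    total = sum(exps)
    return [e / total for e in exps]


def top_k_indices(probs, k):
    """Indices of the k largest probabilities (ties broken by lower index)."""
    return sorted(range(len(probs)), key=lambda i: probs[i], reverse=True)[:k]


def pool_balance_loss(router_logits_per_layer, pool_size, k):
    """Assumed pool-level balance term, aggregated over every layer's router.

    router_logits_per_layer[l][t] holds pool_size logits for token t at layer l.
    Each layer has its own router, but all routers index the same expert pool,
    so usage statistics are pooled across layers before the loss is formed.
    """
    counts = [0.0] * pool_size      # top-k selections per expert
    prob_sums = [0.0] * pool_size   # accumulated router probability per expert
    n = 0                           # number of (layer, token) routing decisions
    for layer_logits in router_logits_per_layer:
        for logits in layer_logits:
            probs = softmax(logits)
            for i in top_k_indices(probs, k):
                counts[i] += 1.0
            for i, p in enumerate(probs):
                prob_sums[i] += p
            n += 1
    frac = [c / (n * k) for c in counts]   # share of routed slots per expert
    mean_p = [s / n for s in prob_sums]    # mean router probability per expert
    return pool_size * sum(f * p for f, p in zip(frac, mean_p))


def expert_budget_ratio(num_layers, experts_per_layer, pool_size):
    """Shared-pool expert count relative to a per-layer (vanilla) MoE."""
    return pool_size / (num_layers * experts_per_layer)


# Hypothetical setup: 2 layers, 4 tokens, a shared pool of 4 experts, top-1 routing.
POOL = 4
balanced = [
    [[10.0 if e == (t + layer) % POOL else 0.0 for e in range(POOL)]
     for t in range(4)]
    for layer in range(2)
]
collapsed = [
    [[10.0 if e == 0 else 0.0 for e in range(POOL)] for _ in range(4)]
    for _ in range(2)
]
balanced_loss = pool_balance_loss(balanced, POOL, k=1)
collapsed_loss = pool_balance_loss(collapsed, POOL, k=1)

# Illustrative numbers only, not a configuration from the paper.
ratio = expert_budget_ratio(num_layers=12, experts_per_layer=8, pool_size=64)
```

Under this assumed loss, evenly spread routing gives a value near 1 and routing that collapses onto one expert approaches the pool size, so minimizing it pushes utilization toward balance across the whole pool rather than within each layer. The ratio helper shows why pool size acts as a separate knob: the pool can be set independently of depth, whereas a per-layer MoE's expert count is fixed at layers times experts per layer.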