We are very happy that our colleague @GenAsefa has contributed the chapter on "Neurosymbolic Methods for Dynamic Knowledge Graphs" to the newly published Handbook on Neurosymbolic AI and Knowledge Graphs, together with Mehwish Alam and Pierre-Henri Paris.

Handbook: https://ebooks.iospress.nl/doi/10.3233/FAIA400

Our own chapter on arXiv: https://arxiv.org/abs/2409.04572

#neurosymbolicAI #AI #generativeAI #LLMs #knowledgegraphs #semanticweb #embeddings #graphembeddings

IOS Press Ebooks - Handbook on Neurosymbolic AI and Knowledge Graphs

Neural approaches have traditionally excelled at perceptual tasks like pattern recognition, whereas symbolic frameworks have offered powerful methods for knowledge representation, logical inference, and interpretability, but the current AI landscape ...

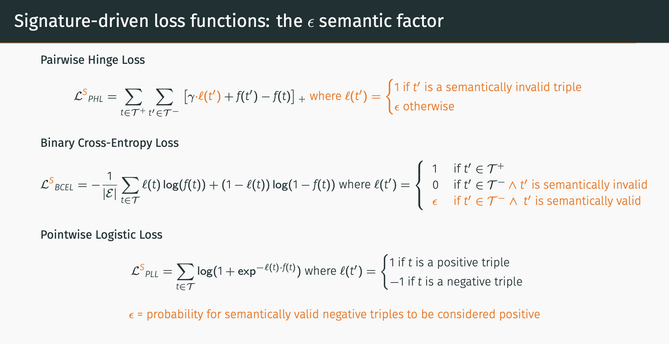

Very happy to announce our new paper accepted at @eswc_conf #ESWC2024: "Treat Different Negatives Differently: Enriching Loss Functions with Domain and Range Constraints for Link Prediction"!

📎 https://arxiv.org/pdf/2303.00286.pdf

w/ N. Hubert, A. Brun, and D. Monticolo

#knowledgeGraph #semanticWeb #machineLearning #linkPrediction #neurosymbolicAI #artificialIntelligence #linkedOpenData #graphEmbeddings #embeddings #graphNeuralNetworks

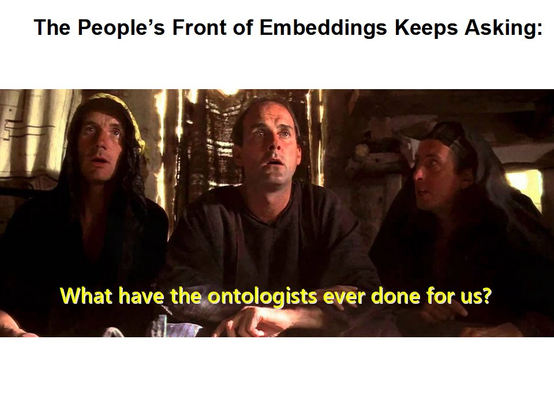

Keynote by Heiko Paulheim (who has still not arrived here in the #fediverse) at RuleML+RR 2023 in Oslo. Talk: "Knowledge Graph Embeddings meet Symbolic Schemas, or: what do they Actually Learn?"

Slides: https://www.uni-mannheim.de/media/Einrichtungen/dws/Files_People/Profs/heiko/talks/RuleMLRR_2023.pdf

#knowledgegraph #semanticweb #embeddings #graphembeddings #rdf2vec #ruleml+rr

Of course, this slide had to be from Heiko 😉 #montypython

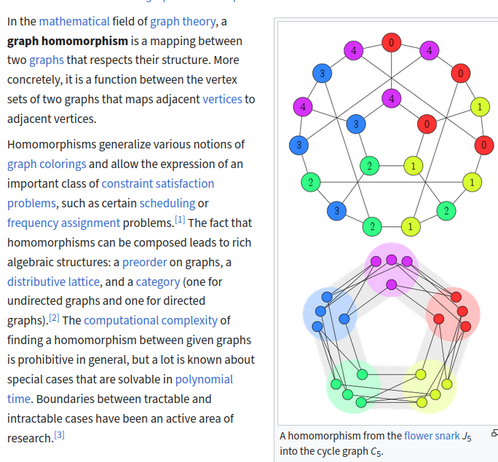

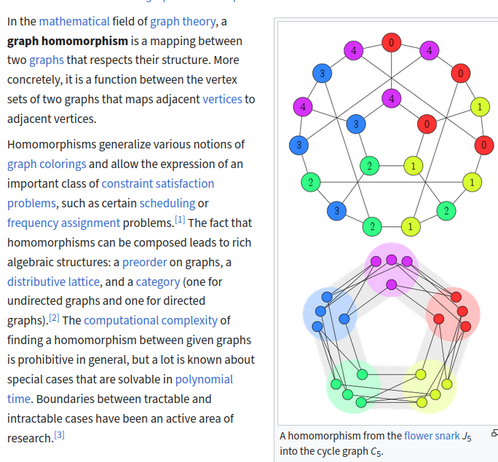

Structural Node Embeddings with Homomorphism Counts

https://arxiv.org/abs/2308.15283

* graph homomorphism counts of interest in graph-based machine learning

* capture local structural information, enabling creation of robust structural embeddings

* enriched w. node labels, node weights, & edge weights, offers interpretable representation of graph data

* allows enhanced explainability of ML models

#GraphTheory #GNN #homomorphism #NeuralNetworks #GraphColoring #GraphEmbeddings #ExplainableML

Structural Node Embeddings with Homomorphism Counts

Graph homomorphism counts, first explored by Lovász in 1967, have recently garnered interest as a powerful tool in graph-based machine learning. Grohe (PODS 2020) proposed the theoretical foundations for using homomorphism counts in machine learning on graph level as well as node level tasks. By their very nature, these capture local structural information, which enables the creation of robust structural embeddings. While a first approach for graph level tasks has been made by Nguyen and Maehara (ICML 2020), we experimentally show the effectiveness of homomorphism count based node embeddings. Enriched with node labels, node weights, and edge weights, these offer an interpretable representation of graph data, allowing for enhanced explainability of machine learning models.

We propose a theoretical framework for isomorphism-invariant homomorphism count based embeddings which lend themselves to a wide variety of downstream tasks. Our approach capitalises on the efficient computability of graph homomorphism counts for bounded treewidth graph classes, rendering it a practical solution for real-world applications. We demonstrate their expressivity through experiments on benchmark datasets. Although our results do not match the accuracy of state-of-the-art neural architectures, they are comparable to other advanced graph learning models. Remarkably, our approach demarcates itself by ensuring explainability for each individual feature. By integrating interpretable machine learning algorithms like SVMs or Random Forests, we establish a seamless, end-to-end explainable pipeline. Our study contributes to the advancement of graph-based techniques that offer both performance and interpretability.
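To make the idea of homomorphism-count node embeddings concrete, here is a minimal sketch in Python. It is not the authors' implementation or pattern set: it assumes an undirected, unlabelled graph given as an adjacency dictionary, and it uses only two hypothetical pattern families of treewidth 1 (rooted paths and rooted stars), for which the counts have simple closed forms. The function names `rooted_path_hom_counts`, `rooted_star_hom_counts`, and `node_embedding` are made up for this illustration.

```python
from typing import Dict, List

Graph = Dict[str, List[str]]  # undirected graph as adjacency lists (assumed format)


def rooted_path_hom_counts(graph: Graph, max_len: int) -> Dict[str, List[int]]:
    """Homomorphism counts from paths with k = 1..max_len edges, rooted at an endpoint.

    A homomorphism from such a path that maps the root to v is exactly a walk
    of length k starting at v, so the counts follow a simple recurrence.
    """
    counts = {v: 1 for v in graph}  # k = 0: the single-vertex pattern
    features: Dict[str, List[int]] = {v: [] for v in graph}
    for _ in range(max_len):
        # walks of length k from v = sum over neighbours u of walks of length k-1 from u
        counts = {v: sum(counts[u] for u in graph[v]) for v in graph}
        for v in graph:
            features[v].append(counts[v])
    return features


def rooted_star_hom_counts(graph: Graph, max_leaves: int) -> Dict[str, List[int]]:
    """Homomorphism counts from stars with k = 2..max_leaves leaves, rooted at the centre.

    Each leaf can be mapped independently to any neighbour of v, giving deg(v)**k.
    """
    return {
        v: [len(graph[v]) ** k for k in range(2, max_leaves + 1)]
        for v in graph
    }


def node_embedding(graph: Graph, max_len: int = 3, max_leaves: int = 3) -> Dict[str, List[int]]:
    """Concatenate the path and star counts into one feature vector per node."""
    paths = rooted_path_hom_counts(graph, max_len)
    stars = rooted_star_hom_counts(graph, max_leaves)
    return {v: paths[v] + stars[v] for v in graph}


# Toy graph: a triangle a-b-c with a pendant node d attached to c.
toy_graph: Graph = {
    "a": ["b", "c"],
    "b": ["a", "c"],
    "c": ["a", "b", "d"],
    "d": ["c"],
}
embedding = node_embedding(toy_graph)
print(embedding["a"])  # [2, 5, 10, 4, 8]
print(embedding["c"])  # [3, 5, 13, 9, 27]
```

Each coordinate is tied to one named pattern ("walks of length 2", "star with 3 leaves"), which is what makes such features individually interpretable. Nodes `a` and `b` are structurally equivalent in the toy graph and receive the same vector, illustrating isomorphism invariance in this small case.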

In today's #ise2023 lecture, we discussed neural networks, from the very simple McCulloch-Pitts Neuron up to Convolutional Neural Networks and Generative Adversarial Networks. A lot of content for only 90 minutes of lecture ;-)

Slides: https://drive.google.com/file/d/1KsAGBViEzu7UDkz-F2QJJJ23ce-AQ9pl/view?usp=drive_link

#machinelearning #deeplearning #representationlearning #knowledgegraphs #graphembeddings #embeddings #lecture @fizise @enorouzi #aiart #stablediffusionart

ISE2023 - 12 - Machine Learning 3.pdf