Structural Node Embeddings with Homomorphism Counts

https://arxiv.org/abs/2308.15283

* graph homomorphism counts are of growing interest in graph-based machine learning

* they capture local structural information, enabling the creation of robust structural node embeddings

* enriched w. node labels, node weights, & edge weights, they offer an interpretable representation of graph data

* this allows enhanced explainability of ML models
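To make the bullets concrete, here is a minimal brute-force sketch (not from the paper) of a rooted homomorphism count. It counts maps h from a small pattern graph F into a graph G that send every edge of F to an edge of G, with the pattern's root pinned to node v. Stacking these counts over a fixed list of patterns gives a structural embedding of v. The pattern list (edge, path, triangle), the dict-of-sets adjacency format and the function names are illustrative assumptions. Brute force enumerates |V(G)|^(|V(F)|-1) maps, so it only suits tiny examples.

```python
from itertools import product

def rooted_hom_count(pattern_nodes, pattern_edges, root, adj, v):
    """Count maps h: pattern -> graph with h(root) == v that preserve every edge.

    adj is an undirected graph as {node: set_of_neighbours} (symmetric).
    Brute force: try every assignment of the non-root pattern nodes.
    """
    others = [x for x in pattern_nodes if x != root]
    count = 0
    for images in product(list(adj), repeat=len(others)):
        h = dict(zip(others, images))
        h[root] = v
        # A homomorphism must map each pattern edge onto a graph edge.
        if all(h[b] in adj[h[a]] for a, b in pattern_edges):
            count += 1
    return count

# Hypothetical pattern set: (nodes, edges, root).
PATTERNS = {
    "edge": ([0, 1], [(0, 1)], 0),
    "path2": ([0, 1, 2], [(0, 1), (1, 2)], 0),
    "triangle": ([0, 1, 2], [(0, 1), (1, 2), (0, 2)], 0),
}

def node_embedding(adj, v, patterns=PATTERNS):
    """One feature per named pattern: its rooted homomorphism count at v."""
    return [rooted_hom_count(n, e, r, adj, v) for n, e, r in patterns.values()]

# Triangle 0-1-2 with a pendant node 3 attached to node 2.
adj = {0: {1, 2}, 1: {0, 2}, 2: {0, 1, 3}, 3: {2}}
for v in adj:
    print(v, node_embedding(adj, v))
# 0 [2, 5, 2]
# 1 [2, 5, 2]
# 2 [3, 5, 2]
# 3 [1, 3, 0]
```

Nodes 0 and 1 are automorphic (swapping them leaves the graph unchanged), so they receive identical vectors, as any isomorphism-invariant embedding must. Each coordinate stays interpretable: the edge count is the degree, and the triangle count is twice the number of triangles through the node.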

#GraphTheory #GNN #homomorphism #NeuralNetworks #GraphColoring #GraphEmbeddings #ExplainableML


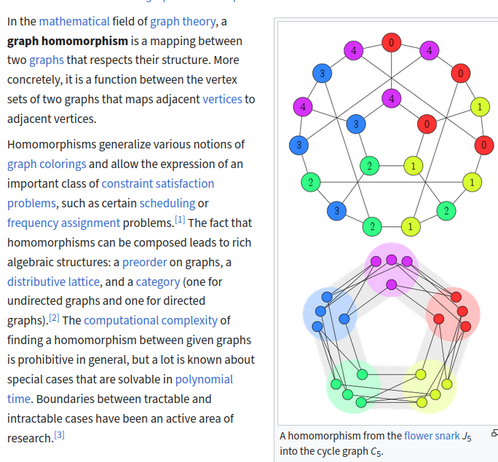

Graph homomorphism counts, first explored by Lovász in 1967, have recently garnered interest as a powerful tool in graph-based machine learning. Grohe (PODS 2020) proposed the theoretical foundations for using homomorphism counts in machine learning on graph-level as well as node-level tasks. By their very nature, these counts capture local structural information, which enables the creation of robust structural embeddings. While Nguyen and Maehara (ICML 2020) made a first approach for graph-level tasks, we experimentally show the effectiveness of homomorphism-count-based node embeddings. Enriched with node labels, node weights, and edge weights, these embeddings offer an interpretable representation of graph data, allowing for enhanced explainability of machine learning models. We propose a theoretical framework for isomorphism-invariant, homomorphism-count-based embeddings that lend themselves to a wide variety of downstream tasks. Our approach capitalises on the efficient computability of graph homomorphism counts for graph classes of bounded treewidth, rendering it a practical solution for real-world applications. We demonstrate the expressivity of these embeddings through experiments on benchmark datasets. Although our results do not match the accuracy of state-of-the-art neural architectures, they are comparable to other advanced graph learning models. Remarkably, our approach distinguishes itself by ensuring explainability for each individual feature. By integrating interpretable machine learning algorithms such as SVMs or Random Forests, we establish a seamless, end-to-end explainable pipeline. Our study contributes to the advancement of graph-based techniques that offer both performance and interpretability.