The amygdala plays a crucial role in emotional processing, particularly in detecting threat-related stimuli and regulating responses to them. Fear processing is a vital function that emerges during the latter half of the first postnatal year and becomes progressively more regulated and context-dependent across early childhood. However, the neural underpinnings of early-emerging individual differences in fear processing remain underexplored.

In our previous work, we examined how readily 8-month-old infants avert their gaze from fearful faces relative to non-fearful faces. In general, infants of this age are less likely to look away from fearful faces than from non-fearful ones, a phenomenon called fear bias. We found, however, that a smaller left amygdala volume shortly after birth was associated with a greater likelihood of averting gaze from fearful faces at 8 months of age.

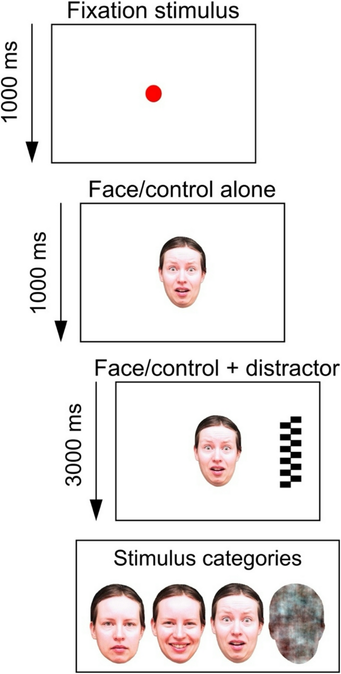

Our latest study builds on this by extending the analysis longitudinally. We investigated whether neonatal amygdala volume and microstructural properties, indexed by mean diffusivity, are associated with attentional biases toward fearful faces at 30 and 60 months. Neonatal MRI scans were acquired at 2–8 weeks of age on a 3T scanner. Children from the same cohort completed eye-tracking at the follow-ups (n = 57 at 30 months; n = 54 at 60 months).

Our results show that larger newborn left amygdala volume was associated with decreased disengagement from fearful (vs. non-fearful) faces at 30 months (p = .041), but not at 60 months (p = .553). Moreover, sex-specific analyses indicated that higher mean diffusivity in the left amygdala was associated with lower fear bias at 60 months in boys (p = .046).

These findings highlight the dynamic nature of amygdala-related fear processing across early development. Associations between neonatal amygdala characteristics and fear bias appeared age-dependent and sex-specific. This pattern is consistent with developmental changes in fear processing: fear bias is typically elevated in infancy and becomes less pronounced by around five years of age.

https://doi.org/10.1007/s00787-026-03041-3

#amygdala #EyeTracking #MRI #EmotionalProcessing #FearProcessing