CHR Special Issue: Computation...

CFP: “Computational Approaches to Art” in Computational Humanities Research.

Interesting to see how strongly the field is shifting from simple digitization toward questions of epistemology, AI, computer vision, network analysis, and computational visual culture.

A sign that the debate is moving from “Can we use AI in art history?” toward “How does computation reshape what art history actually is?”

👉 https://arthist.net/archive/52416

#DigitalArtHistory #AI #ComputerVision #VisualCulture

ActCam: Zero-Shot Joint Camera and 3D Motion Control for Video Generation

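ActCam is a zero-shot video generation method built on a pretrained image-to-video diffusion model. It transfers character motion from a driving video into a new scene while controlling per-frame intrinsic and extrinsic camera parameters. Depth and pose conditions are applied in stages over time to balance scene structure against high-frequency detail, and across several benchmarks the method improves camera consistency and motion fidelity over existing pose-control approaches. Because it controls camera and motion jointly and precisely without any additional training, it is useful for video generation and AI-based content production.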

https://arxiv.org/abs/2605.06667

#videogeneration #diffusionmodel #3dmotioncontrol #zeroshot #computervision

ActCam: Zero-Shot Joint Camera and 3D Motion Control for Video Generation

For artistic applications, video generation requires fine-grained control over both performance and cinematography, i.e., the actor's motion and the camera trajectory. We present ActCam, a zero-shot method for video generation that jointly transfers character motion from a driving video into a new scene and enables per-frame control of intrinsic and extrinsic camera parameters. ActCam builds on any pretrained image-to-video diffusion model that accepts conditioning in terms of scene depth and character pose. Given a source video with a moving character and a target camera motion, ActCam generates pose and depth conditions that remain geometrically consistent across frames. We then run a single sampling process with a two-phase conditioning schedule: early denoising steps condition on both pose and sparse depth to enforce scene structure, after which depth is dropped and pose-only guidance refines high-frequency details without over-constraining the generation. We evaluate ActCam on multiple benchmarks spanning diverse character motions and challenging viewpoint changes. We find that, compared to pose-only control and other pose and camera methods, ActCam improves camera adherence and motion fidelity, and is preferred in human evaluations, especially under large viewpoint changes. Our results highlight that careful camera-consistent conditioning and staged guidance can enable strong joint camera and motion control without training. Project page: https://elkhomar.github.io/actcam/.
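The two-phase conditioning schedule described in the abstract can be sketched as a per-step list of active conditions. This is only an illustration, not the authors' code: the function name `conditioning_schedule` and the `depth_fraction` parameter are hypothetical, and the abstract does not state at which step depth is dropped.

```python
from typing import List, Set


def conditioning_schedule(num_steps: int, depth_fraction: float = 0.4) -> List[Set[str]]:
    """Return the set of active conditions for each denoising step.

    depth_fraction is an assumed knob: the share of early steps that
    condition on both pose and sparse depth. The abstract gives no value.
    """
    if num_steps <= 0:
        raise ValueError("num_steps must be positive")
    if not 0.0 <= depth_fraction <= 1.0:
        raise ValueError("depth_fraction must be in [0, 1]")

    # First step index at which depth guidance is dropped.
    switch_step = int(round(num_steps * depth_fraction))

    schedule: List[Set[str]] = []
    for step in range(num_steps):
        if step < switch_step:
            # Phase 1: pose plus sparse depth to enforce scene structure.
            schedule.append({"pose", "depth"})
        else:
            # Phase 2: pose only, so details are refined without over-constraining.
            schedule.append({"pose"})
    return schedule


if __name__ == "__main__":
    for step, active in enumerate(conditioning_schedule(10, 0.4)):
        print(step, sorted(active))
```

A sampler would consult this list once per step inside a single sampling run, which matches the abstract's point that no second pass or retraining is involved.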

Master Computer Vision with **Detectron2**! 🚀

This tutorial simplifies Meta AI's modular framework, showing you how to build a Faster R-CNN pipeline for high-accuracy object detection.

📖 Medium: https://medium.com/object-detection-tutorials/easy-detectron2-object-detection-tutorial-for-beginners-a7271485a54b

💻 Code: https://eranfeit.net/easy-detectron2-object-detection-tutorial-for-beginners/

NVIDIA (@nvidia)

Carbon Robotics introduced technology that removes weeds with AI-guided lasers. It is an innovative agricultural AI application that lets crops be grown more healthily without chemicals.

End-to-End Autoregressive Image Generation with 1D Semantic Tokenizer

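This paper proposes an end-to-end autoregressive image generation approach built on a 1D semantic tokenizer. Unlike the existing two-stage approach, which trains the tokenizer and the generative model separately, it jointly optimizes reconstruction and generation so that the tokenizer is supervised directly by generation results. It also explores using vision foundation models to improve 1D tokenizer performance. The proposed model reports a state-of-the-art FID of 1.48 on ImageNet 256x256 image generation.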

https://arxiv.org/abs/2605.00503

#autoregressive #imagegeneration #tokenizer #visionfoundationmodel #computervision

End-to-End Autoregressive Image Generation with 1D Semantic Tokenizer

Autoregressive image modeling relies on visual tokenizers to compress images into compact latent representations. We design an end-to-end training pipeline that jointly optimizes reconstruction and generation, enabling direct supervision from generation results to the tokenizer. This contrasts with prior two-stage approaches that train tokenizers and generative models separately. We further investigate leveraging vision foundation models to improve 1D tokenizers for autoregressive modeling. Our autoregressive generative model achieves strong empirical results, including a state-of-the-art FID score of 1.48 without guidance on ImageNet 256x256 generation.
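As a rough illustration of what "jointly optimizes reconstruction and generation" can mean, the sketch below combines a reconstruction term and a next-token generation term into one scalar objective. Everything here is an assumption for illustration: the abstract does not name the loss functions or their weighting, and `gen_weight`, `reconstruction_loss`, `generation_loss`, and `joint_loss` are hypothetical names.

```python
import math
from typing import Sequence


def reconstruction_loss(image: Sequence[float], recon: Sequence[float]) -> float:
    """Mean squared error between pixels and their reconstruction (assumed choice)."""
    if len(image) != len(recon) or not image:
        raise ValueError("inputs must be non-empty and the same length")
    return sum((a - b) ** 2 for a, b in zip(image, recon)) / len(image)


def generation_loss(correct_token_probs: Sequence[float]) -> float:
    """Mean negative log-likelihood of the correct next tokens (assumed choice)."""
    if not correct_token_probs:
        raise ValueError("need at least one token probability")
    return -sum(math.log(p) for p in correct_token_probs) / len(correct_token_probs)


def joint_loss(
    image: Sequence[float],
    recon: Sequence[float],
    correct_token_probs: Sequence[float],
    gen_weight: float = 1.0,  # hypothetical weighting, not from the paper
) -> float:
    """Single objective: both terms are minimized together, not in two stages."""
    return reconstruction_loss(image, recon) + gen_weight * generation_loss(correct_token_probs)


if __name__ == "__main__":
    print(joint_loss([0.0, 1.0], [0.0, 0.0], [0.5, 0.5], gen_weight=2.0))
```

In an actual training loop the key point is that gradients from the generation term also reach the tokenizer, which a two-stage pipeline with a frozen tokenizer does not allow.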

Ultralytics (@ultralytics)

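Ultralytics showcased real-time detection of worker falls at logistics sites using Ultralytics YOLO26. By combining object detection and pose estimation, it identifies when a person carrying a load has fallen, which improves warehouse safety and speeds up incident response.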

https://x.com/ultralytics/status/2052418305500066000

#ultralytics #yolo26 #computervision #safety #objectdetection

Ultralytics (@ultralytics) on X

Detect worker falls in real time with Ultralytics YOLO26! 📦 Combine object detection and pose estimation to identify when a person carrying luggage boxes falls, helping logistics teams improve warehouse safety and respond faster to incidents. Get started ➡️
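One simple way to combine a detection box with pose keypoints for fall detection is a geometric rule: flag a person whose box is wider than tall and whose torso is close to horizontal. The sketch below is a hypothetical heuristic, not the Ultralytics implementation or API. It assumes the box and named keypoints have already been extracted from detection and pose models, and the thresholds `max_upright_angle` and `min_aspect` are illustrative guesses.

```python
import math
from typing import Dict, Tuple

Point = Tuple[float, float]
Box = Tuple[float, float, float, float]  # x1, y1, x2, y2 in pixels


def torso_angle_from_vertical(keypoints: Dict[str, Point]) -> float:
    """Angle in degrees between the shoulder-to-hip axis and the image vertical."""
    sx = (keypoints["left_shoulder"][0] + keypoints["right_shoulder"][0]) / 2
    sy = (keypoints["left_shoulder"][1] + keypoints["right_shoulder"][1]) / 2
    hx = (keypoints["left_hip"][0] + keypoints["right_hip"][0]) / 2
    hy = (keypoints["left_hip"][1] + keypoints["right_hip"][1]) / 2
    # 0 degrees means upright, 90 degrees means the torso is horizontal.
    return math.degrees(math.atan2(abs(hx - sx), abs(hy - sy)))


def looks_fallen(
    box: Box,
    keypoints: Dict[str, Point],
    max_upright_angle: float = 60.0,  # assumed threshold
    min_aspect: float = 1.0,          # assumed width/height threshold
) -> bool:
    """Return True when both the box shape and the torso tilt suggest a fall."""
    x1, y1, x2, y2 = box
    width, height = x2 - x1, y2 - y1
    if width <= 0 or height <= 0:
        return False  # degenerate box, no decision
    wide_box = width / height > min_aspect
    tilted = torso_angle_from_vertical(keypoints) > max_upright_angle
    return wide_box and tilted


if __name__ == "__main__":
    lying = {
        "left_shoulder": (30.0, 20.0), "right_shoulder": (30.0, 30.0),
        "left_hip": (80.0, 20.0), "right_hip": (80.0, 30.0),
    }
    print(looks_fallen((0.0, 0.0, 150.0, 50.0), lying))
```

Requiring both cues reduces false alarms from people who are merely bending over or partly occluded by the boxes they carry; a production system would likely also smooth the decision over several frames.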

田中義弘 | taziku CEO / AI × Creative (@taziku_co)

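Meta Project Aria's open-source EgoBlur Gen 2 has been released. It automatically detects and blurs faces and vehicle license plates in VRS/MP4/JPEG/PNG footage, and it is drawing attention as a tool for strengthening privacy protection in the era of first-person cameras such as smart glasses.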

https://x.com/taziku_co/status/2052517666691428690

#opensource #privacy #computervision #smartglasses #metaaria

Computer vision converts visual data into actionable insights, reducing errors and boosting productivity. It's a game-changer for industries relying on precise visual analysis.

https://codeponents.com/?page_id=5472

#ComputerVision #MiamiTech #BusinessEfficiency #VisualData

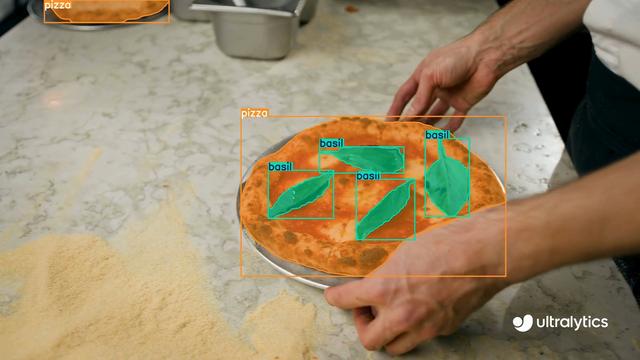

Ultralytics (@ultralytics)

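Ultralytics presents an example of separating pizza and basil using the segmentation capability of Ultralytics YOLO26. It suits uses such as food analysis, ingredient monitoring, and smart kitchen automation, and it is an AI application that precisely distinguishes food objects within an image.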

https://x.com/ultralytics/status/2051698525826682989

#ultralytics #yolo26 #segmentation #foodtech #computervision

Ultralytics (@ultralytics) on X

Segment pizza and basil with Ultralytics YOLO26! 🍕 Identify and isolate food items with YOLO26 segmentation task, ideal for food analysis, ingredient monitoring, and smart kitchen automation workflows. Explore more ➡️ https://t.co/SM5vNNTmuH #segmentation #foodtech