Business Latest | At 'AI Coachella,' Stanford Students Line Up to Learn From Silicon Valley Royalty by Maxwell Zeff

AI-generated summary. Read the full article for complete information.

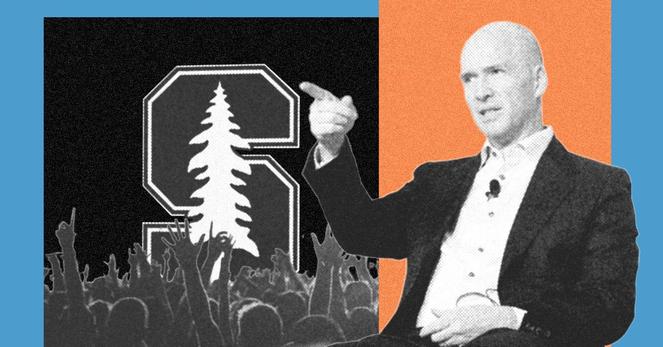

At Stanford, the wildly popular CS 153 course, dubbed “AI Coachella,” has turned into a live podcast-style forum where Silicon Valley heavyweights such as OpenAI’s Sam Altman, Nvidia’s Jensen Huang, Microsoft’s Satya Nadella, AMD’s Lisa Su and others drop in as guest lecturers, giving students rare access to the leaders of frontier AI. Co-taught by former Andreessen Horowitz partner Anjney Midha and ex-Apple VP Michael Abbott, the class fills its 500 seats instantly and streams its sessions on YouTube. It draws admiration for its insider insight and criticism that it prioritizes celebrity talks over “real” coursework. Midha, who also launched a venture fund with Abbott, uses the platform to share inside knowledge about AI infrastructure, chip pricing and startup dynamics, and he urges students to focus on personal relationships and mental health. Students like sophomore Mahi Jariwala and junior Darrow Hartman say the class provides valuable mentorship, networking and a “fun” complement to more rigorous classes, even as some faculty and peers mock the course’s “AI Coachella” vibe. The phenomenon highlights Stanford’s distinctive draw, its access to Silicon Valley, amid broader debates about the value of higher-education credentials in a world where online tools and recorded lectures are increasingly common.

Read more: https://www.wired.com/story/stanford-cs-class-ai-coachella-ben-horowitz/

#AnjneyMidha #OpenAI #Nvidia #AndreessenHorowitz #business/artificialintelligence #MichaelAbbott #SamAltman #JensenHuang #SatyaNadella #LisaSu #AmandaAskell #SriramKrishnan #MahiJariwala #DarrowHartman
