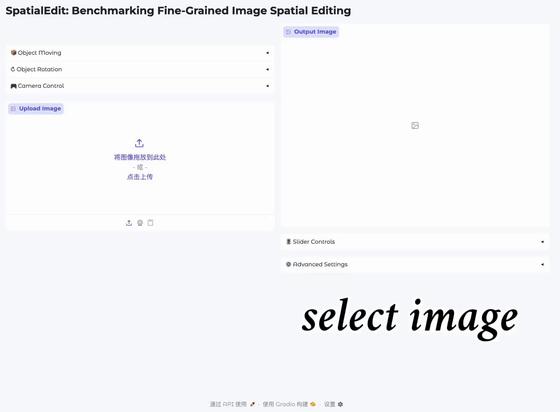

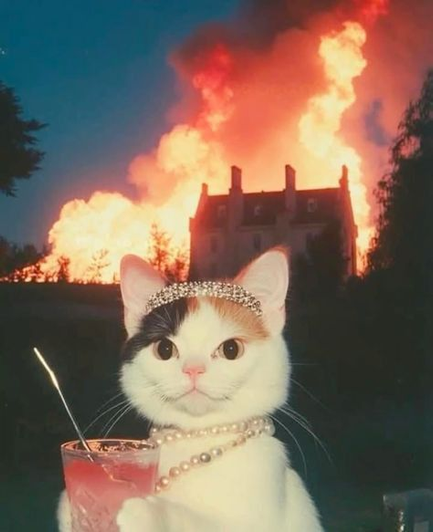

🎉 Now videos are alive! After finally getting WAN 2.1 running on my RX 6700 XT via ROCm and ComfyUI, even complex prompts can be turned into animated WebPs locally!

These animated WebPs were generated locally with ComfyUI and the WAN 2.1 T2V 1.3B (fp16) text-to-video model.

Model Stack:

- wan2.1_t2v_1.3B_fp16 (Video Diffusion Model)

- umt5_xxl_fp8_e4m3fn_scaled (Text Encoder)

- wan_2.1_vae (VAE)

- clip_vision_h (CLIP Vision Encoder)
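If you want to reproduce this stack, a small sanity check that all four files are in place can save a failed first run. This is a minimal sketch that assumes the usual ComfyUI folder layout (`models/diffusion_models`, `models/text_encoders`, `models/vae`, `models/clip_vision`) and `.safetensors` file names; both are assumptions, so adjust them to your own install. `MODEL_STACK` and `missing_models` are hypothetical helper names.

```python
from pathlib import Path

# Assumed ComfyUI subfolder -> assumed file name for each model in the stack.
MODEL_STACK = {
    "diffusion_models": "wan2.1_t2v_1.3B_fp16.safetensors",
    "text_encoders": "umt5_xxl_fp8_e4m3fn_scaled.safetensors",
    "vae": "wan_2.1_vae.safetensors",
    "clip_vision": "clip_vision_h.safetensors",
}


def missing_models(comfy_root: Path) -> list[Path]:
    """Return every expected model file that is not present under comfy_root/models."""
    models_dir = comfy_root / "models"
    return [
        models_dir / sub / name
        for sub, name in MODEL_STACK.items()
        if not (models_dir / sub / name).is_file()
    ]


if __name__ == "__main__":
    # Point this at your ComfyUI checkout; an empty result means everything is in place.
    for path in missing_models(Path("ComfyUI")):
        print("missing:", path)
```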

First, the UMT5 text encoder converts the prompt into a sequence of embeddings that condition the video model.

The WAN video model then generates the frames through latent diffusion (noise → iterative refinement). All frames are refined together in one shared latent rather than one at a time, which is what keeps them temporally coherent.
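A toy sketch of that refinement loop, assuming a rectified-flow (flow-matching) formulation with plain Euler steps. My understanding is that WAN 2.1 is trained with flow matching, but ComfyUI's actual sampler, schedule and shift settings may differ. `oracle_velocity` is a hypothetical stand-in that cheats by knowing the clean latent; the real model has to predict the velocity from the noisy latent, the timestep and the text embeddings. What the sketch does show is the shape of the process: every frame starts as noise and moves through the same loop together.

```python
import random

Frames = list[list[float]]  # one list of latent values per frame (toy-sized)


def oracle_velocity(x: Frames, clean: Frames, t: float) -> Frames:
    # Hypothetical stand-in for the video model. Under the rectified-flow
    # interpolation x_t = (1 - t) * clean + t * noise, the true velocity is
    # noise - clean, which equals (x_t - clean) / t.
    return [[(xi - ci) / t for xi, ci in zip(f, c)] for f, c in zip(x, clean)]


def sample(clean: Frames, steps: int = 10, seed: int = 0) -> Frames:
    rng = random.Random(seed)
    # Start from pure Gaussian noise (t = 1) for all frames at once.
    x = [[rng.gauss(0.0, 1.0) for _ in f] for f in clean]
    dt = 1.0 / steps
    for i in range(steps):
        t = 1.0 - i * dt
        v = oracle_velocity(x, clean, t)
        # One Euler step from t to t - dt, applied to every frame jointly.
        x = [[xi - dt * vi for xi, vi in zip(f, vf)] for f, vf in zip(x, v)]
    return x


if __name__ == "__main__":
    clean = [[0.1, -0.2, 0.3], [0.12, -0.18, 0.31], [0.14, -0.16, 0.32]]  # three tiny "frames"
    out = sample(clean, steps=20)
    err = max(abs(a - b) for fo, fc in zip(out, clean) for a, b in zip(fo, fc))
    print(f"max error after sampling: {err:.1e}")
```

With a perfect velocity predictor, the Euler steps land exactly on the clean latent. A real model only approximates the velocity, which is why step count and scheduler choice affect quality and render time.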

Finally, the VAE decodes the latent frames back into RGB images, which are then exported as an animated WebP.
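The decoded frame count is not the same as the number of latent frames, because the VAE compresses time as well as space. The sketch below does that bookkeeping. It assumes the commonly cited WAN-VAE factors (16 latent channels, 4× temporal compression with the first frame kept on its own, 8× spatial compression), so verify them against your ComfyUI version. The function names are hypothetical, and 81 frames at 832×480 is only an example setting; the post does not state which resolution or length it used.

```python
def wan_latent_shape(frames: int, height: int, width: int) -> tuple[int, int, int, int]:
    """Return (channels, latent_frames, latent_height, latent_width).

    Assumes 16 latent channels, 4x temporal compression (first frame kept
    separately) and 8x spatial compression.
    """
    if frames < 1 or (frames - 1) % 4 != 0:
        raise ValueError("frame count must be 4k + 1, e.g. 33 or 81")
    if height % 8 or width % 8:
        raise ValueError("height and width must be multiples of 8")
    return (16, (frames - 1) // 4 + 1, height // 8, width // 8)


def decoded_frame_count(latent_frames: int) -> int:
    # Inverse of the temporal compression: the first latent frame decodes
    # to one image, every later latent frame to four.
    return 1 + 4 * (latent_frames - 1)


if __name__ == "__main__":
    print(wan_latent_shape(81, 480, 832))  # (16, 21, 60, 104)
    print(decoded_frame_count(21))         # 81
```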

Prompt execution time depends on scene complexity: from 521.62 seconds (~8.7 minutes) up to 17 minutes 26 seconds (1046 seconds) for the more complex prompts.
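To put both timings on the same scale, a quick conversion (the helper names are just for illustration):

```python
def to_seconds(minutes: int, seconds: float) -> float:
    # Convert a "minutes + seconds" duration into plain seconds.
    return minutes * 60 + seconds


def fmt(seconds: float) -> str:
    # Render a duration in seconds as "M min S.SS s".
    minutes, rest = divmod(seconds, 60)
    return f"{int(minutes)} min {rest:.2f} s"


fastest = 521.62
slowest = to_seconds(17, 26)

print(fmt(fastest))                 # 8 min 41.62 s
print(slowest)                      # 1046
print(round(slowest / fastest, 2))  # 2.01
```

So the most complex prompt took roughly twice as long as the fastest one.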

Rendered locally via ROCm on my AMD RX 6700 XT (12 GB VRAM).

No cloud. Pure local inference.

#ComfyUI #WAN21 #ROCm #AMD #LocalAI #FOSS #VideoAI #AIvideo #AIGenerated #MachineLearning #DeepLearning #DiffusionModels #TextToVideo #AIArt #CreativeAI #LocalInference #VideoGeneration