# TB5-WaLI Home Tour Success (x3)

==== 3/31/26 wali_tours test ====

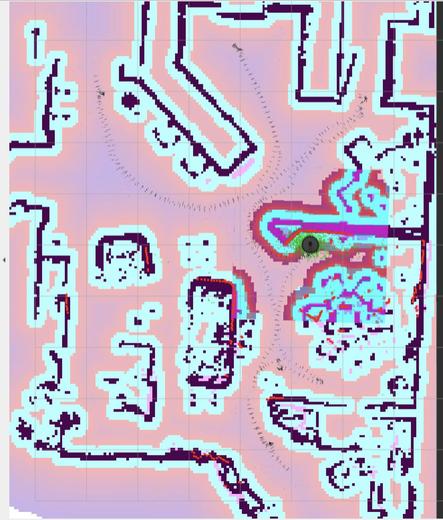

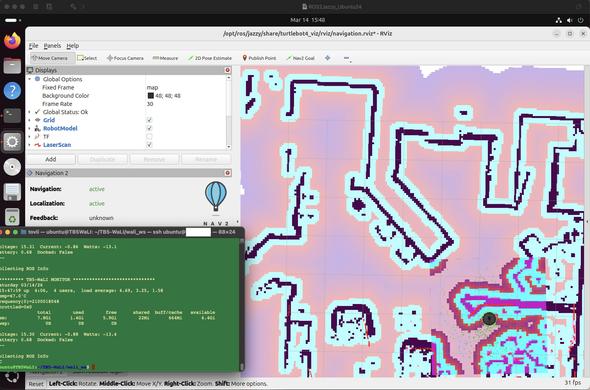

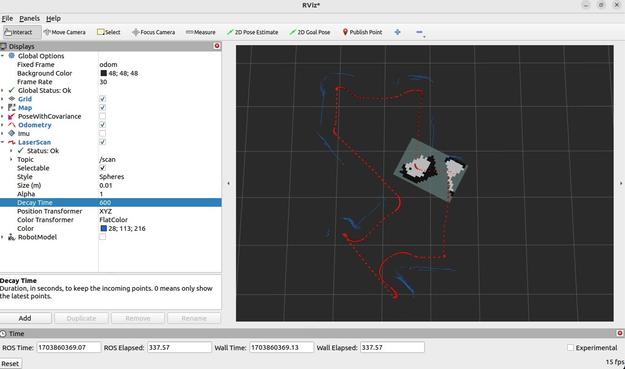

Successfully performed three 10-stop wali_tours of about 7 minutes each (including successful recoveries). Each tour ran this sequence:

Dock, Set_Pose_Docked, Undock,

Drive/Turn to "Ready Position"

Nav to front_door, couch_view, laundry, table, dining, kitchen, patio_view, office, hall_view, ready

Dock
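The tour sequence above can be written out as an ordered plan. This is only a minimal sketch in plain Python: the stop names come from this post, but `build_tour_plan`, the action labels, and the tuple layout are hypothetical names for illustration and are not the actual wali_tour node code or any Nav2 API.

```python
# Stop names are taken from the post; all other names are hypothetical.
TOUR_STOPS = [
    "front_door", "couch_view", "laundry", "table", "dining",
    "kitchen", "patio_view", "office", "hall_view", "ready",
]


def build_tour_plan(stops=TOUR_STOPS):
    """Return the ordered (action, target) steps for one tour."""
    plan = [
        ("dock", None),                      # start from the dock
        ("set_pose_docked", None),           # set the pose while docked
        ("undock", None),
        ("drive_turn_to", "ready_position"),
    ]
    # One navigation step per named stop, in the order listed in the post.
    plan += [("nav_to", stop) for stop in stops]
    plan.append(("dock", None))              # finish back on the dock
    return plan


if __name__ == "__main__":
    for step, (action, target) in enumerate(build_tour_plan(), start=1):
        print(step, action, target or "")
```

Keeping the plan as data rather than hard-coded calls makes it easy to reorder or skip stops; a real node would map each action label onto the corresponding robot command.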

#ROS2Nav2 #TurtleBot4 #RaspberryPi5

Together, the Navigation, Localization, TurtleBot4, wali, and wali_tour nodes consume about 35% CPU when not navigating and about 75% CPU while navigating.