Solod v0.1: Go ergonomics, practical stdlib, native C interop

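Solod v0.1 is a systems-level language that uses Go syntax but has no runtime, designed for both Go developers and C developers. This release ports part of Go's standard library and supports native C interoperability through SQLite, so you can write Go-style code while managing memory yourself. It is also faster than Go in some areas, and networking and concurrency features are planned. Solod is a useful new language option for developers who need low-level control and efficient C interop.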

ctrl+b, % split the window into panes

ctrl+b, o switch between panes

ctrl+b, : open the command prompt

At the prompt you can enter kill-session to force-close a hung tmux session

#systemsprogramming

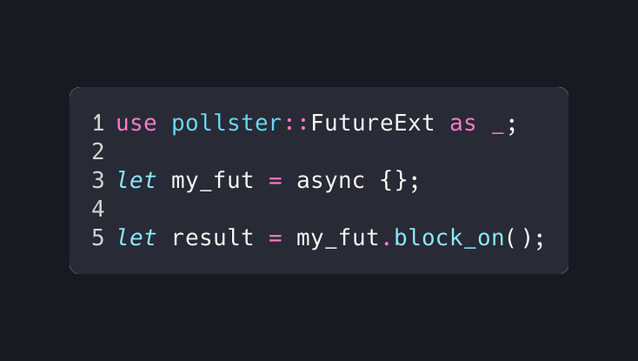

Just discovered a minimal async executor for Rust 🦀

🌀 **pollster** — Block a thread until a future completes.

💯 No dependencies, ~100 lines, just `.block_on()` and done

⭐ GitHub: https://github.com/zesterer/pollster

#rustlang #async #rust #programming #library #tip #systemsprogramming
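For a feel of what "block a thread until a future completes" involves, here is a minimal std-only sketch of the technique. It is not pollster's actual source: the names `ThreadWaker` and `YieldOnce` are hypothetical, and parking the thread is just one way to implement the waiting.

```rust
use std::future::Future;
use std::pin::{pin, Pin};
use std::sync::Arc;
use std::task::{Context, Poll, Wake, Waker};
use std::thread::{self, Thread};

/// Hypothetical waker that unparks the thread blocked in `block_on`.
struct ThreadWaker(Thread);

impl Wake for ThreadWaker {
    fn wake(self: Arc<Self>) {
        self.0.unpark();
    }
}

/// Poll `fut` on the current thread, parking between polls until it is ready.
fn block_on<F: Future>(fut: F) -> F::Output {
    let mut fut = pin!(fut);
    let waker = Waker::from(Arc::new(ThreadWaker(thread::current())));
    let mut cx = Context::from_waker(&waker);
    loop {
        match fut.as_mut().poll(&mut cx) {
            Poll::Ready(out) => return out,
            // Sleep until the waker fires; a spurious wakeup just re-polls.
            Poll::Pending => thread::park(),
        }
    }
}

/// Hypothetical future that returns `Pending` once, to exercise the wake path.
struct YieldOnce(bool);

impl Future for YieldOnce {
    type Output = ();
    fn poll(mut self: Pin<&mut Self>, cx: &mut Context<'_>) -> Poll<()> {
        if self.0 {
            Poll::Ready(())
        } else {
            self.0 = true;
            cx.waker().wake_by_ref(); // ask to be polled again
            Poll::Pending
        }
    }
}

fn main() {
    let value = block_on(async {
        YieldOnce(false).await;
        40 + 2
    });
    println!("{value}"); // prints 42
}
```

With the crate itself, none of this scaffolding is needed; per the post, you just call `.block_on()` on the future.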

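By the way, I found a handy sandbox app for Mac: https://orbstack.dev/

Once it's installed, just type this in the terminal (you can replace "sandbox" with any other name):

orb create ubuntu:22.04 sandbox

orb -m sandbox

and you have a Linux dev environment running on your Mac.

Of course, to compile apps written in Go you also need:

sudo apt update && sudo apt install golang git -y

When you're done with the sandbox, just run:

orb delete sandbox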

Quickest way to force the browser to bypass its local cache for the current page (hard reload in Chrome and Firefox on macOS):

cmd+shift+R

#systemsprogramming

“Bitfield Pitfalls”, Michal Necasek, OS/2 Museum (https://www.os2museum.com/wp/bitfield-pitfalls/).

Via HN: https://news.ycombinator.com/item?id=47469945

On Lobsters: https://lobste.rs/s/xtue97/bitfield_pitfalls

#C #Programming #BitFields #Gotchas #C89 #C11 #Bits #SystemsProgramming #Compilers

https://arxiv.org/abs/2603.25414

🔬 Numerical stability, computational correctness, physical consistency; this is what we're building at SpeakEZ Technologies. Verified AI infrastructure that's just the beginning of our journey.

Decidable By Construction: Design-Time Verification for Trustworthy AI

A prevailing assumption in machine learning is that model correctness must be enforced after the fact. We observe that the properties determining whether an AI model is numerically stable, computationally correct, or consistent with a physical domain do not necessarily demand post hoc enforcement. They can be verified at design time, before training begins, at marginal computational cost, with particular relevance to models deployed in high-leverage decision support and scientifically constrained settings. These properties share a specific algebraic structure: they are expressible as constraints over finitely generated abelian groups $\mathbb{Z}^n$, where inference is decidable in polynomial time and the principal type is unique. A framework built on this observation composes three prior results (arXiv:2603.16437, arXiv:2603.17627, arXiv:2603.18104): a dimensional type system carrying arbitrary annotations as persistent codata through model elaboration; a program hypergraph that infers Clifford algebra grade and derives geometric product sparsity from type signatures alone; and an adaptive domain model architecture preserving both invariants through training via forward-mode coeffect analysis and exact posit accumulation. We believe this composition yields a novel information-theoretic result: Hindley-Milner unification over abelian groups computes the maximum a posteriori hypothesis under a computable restriction of Solomonoff's universal prior, placing the framework's type inference on the same formal ground as universal induction. We compare four contemporary approaches to AI reliability and show that each imposes overhead that can compound across deployments, layers, and inference requests. This framework eliminates that overhead by construction.
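To make "constraints over $\mathbb{Z}^n$" concrete, here is a small worked example using the standard exponent-vector encoding of physical dimensions. The basis (length, mass, time) and the unknown $x$ are illustrative assumptions; the abstract does not say how the paper itself encodes dimensions.

$$
\begin{aligned}
[\text{length}] &= (1, 0, 0), \qquad [\text{time}] = (0, 0, 1), \qquad [\text{velocity}] = (1, 0, -1) \\
[a \cdot b] &= [a] + [b] \quad \text{(multiplying quantities adds exponent vectors)} \\
x + (0, 0, 1) &= (1, 0, 0) \;\Longrightarrow\; x = (1, 0, -1) = [\text{velocity}]
\end{aligned}
$$

Inferring the unknown dimension $x$ in $x \cdot \text{time} = \text{length}$ reduces to a linear equation over the integers, and systems of such equations can be solved in polynomial time with integer linear algebra.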

Published today on arXiv: https://arxiv.org/abs/2603.25414
