Been working on something for a while and finally put it out there: a public security challenge against a threshold cryptography system I built for my own infrastructure.

Four servers, four countries, four hosting providers. The group signing key was generated with a distributed key generation protocol (Pedersen DKG), so no single server holds the full secret. I literally can't extract it myself. The challenge is to forge a valid FROST Ed25519 signature against today's published challenge string.
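
For anyone wondering how "no single server holds the full secret" can work, here is a toy sketch of the idea behind a Pedersen-style DKG. It is not the code running on the cluster. The threshold of 3 is an assumption (the post only fixes the server count at four), the function names are made up for illustration, and the commitment and share-verification steps of a real DKG are left out.

```python
import secrets

# Order of the Ed25519 prime-order subgroup (the scalar field FROST Ed25519 works in).
Q = 2**252 + 27742317777372353535851937790883648493

N_SERVERS = 4   # from the post
THRESHOLD = 3   # assumption: the post does not state the threshold


def random_poly(degree):
    """Random polynomial over Z_Q; coefficient 0 is one party's secret contribution."""
    return [secrets.randbelow(Q) for _ in range(degree + 1)]


def eval_poly(coeffs, x):
    """Horner evaluation mod Q."""
    acc = 0
    for c in reversed(coeffs):
        acc = (acc * x + c) % Q
    return acc


def run_toy_dkg(n=N_SERVERS, t=THRESHOLD):
    """Each party deals shares of its own polynomial; party j sums what it receives."""
    polys = [random_poly(t - 1) for _ in range(n)]
    shares = {j: sum(eval_poly(p, j) for p in polys) % Q for j in range(1, n + 1)}
    # Returned only so the demo can be checked; in a real DKG nobody ever computes this.
    group_secret = sum(p[0] for p in polys) % Q
    return shares, group_secret


def interpolate_at_zero(points):
    """Lagrange interpolation at x = 0 over Z_Q (points maps x -> y)."""
    total = 0
    for xi, yi in points.items():
        num, den = 1, 1
        for xj in points:
            if xj != xi:
                num = (num * -xj) % Q
                den = (den * (xi - xj)) % Q
        total = (total + yi * num * pow(den, -1, Q)) % Q
    return total


shares, group_secret = run_toy_dkg()
subset = {j: shares[j] for j in (1, 2, 4)}       # any 3 of the 4 shares
print(interpolate_at_zero(subset) == group_secret)
```

The interpolation step is there only to show that the shares are consistent. In FROST the signers never reconstruct the key; each one produces a partial signature from its own share.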

What makes it different from a typical CTF:

→ It's not a weekend event. It runs 24/7 for 90 days. The servers are real production boxes running real software (Nextcloud, Gitea, a team API, Grafana), not Docker containers with planted vulns.

→ Post-quantum hybrid. The audit chain carries ML-DSA-44 signatures alongside the FROST threshold sigs, with a downgrade-detection flag baked into the signed payload. Stripping the PQ signature invalidates the classical one.

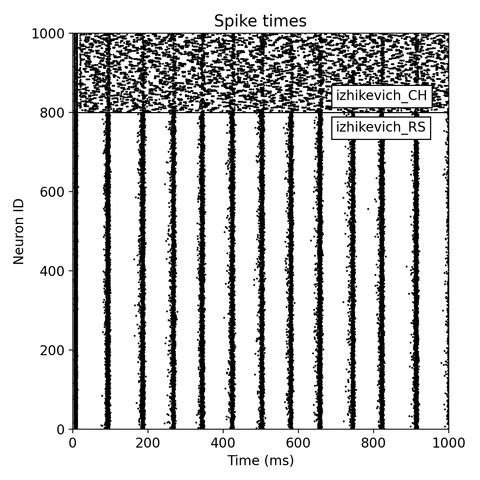

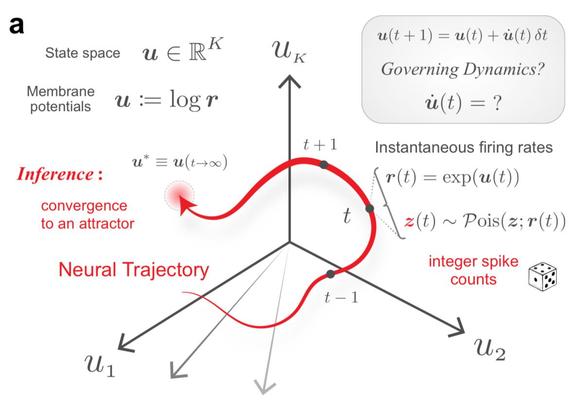

→ There's a spiking neural network watching the cluster. 258 neurons with STDP learning and four neuromodulators (dopamine, noradrenaline, acetylcholine, serotonin). It processes DAG events, network metrics, and system telemetry as spike trains. A local LLM reads the brain's internal state every five minutes and reports what it observes. Currently it says the cluster is calm. I want to see what it says when someone's actually poking around.
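
Going back to the post-quantum hybrid item above: one way a downgrade-detection flag can work is to put the "PQ signature required" bit inside the payload that the classical signature covers. The sketch below shows that idea only. The field names (`pq_required`, `classical_sig`, `pq_sig`) are hypothetical, and HMAC-SHA256 is a stand-in for both FROST Ed25519 and ML-DSA-44 so the example runs with the standard library alone.

```python
import hashlib
import hmac
import json


def canonical(payload: dict) -> bytes:
    """Deterministic encoding so signer and verifier hash the same bytes."""
    return json.dumps(payload, sort_keys=True, separators=(",", ":")).encode()


def mac_sign(key: bytes, message: bytes) -> bytes:
    """Stand-in for a real signature scheme (HMAC-SHA256, NOT FROST or ML-DSA)."""
    return hmac.new(key, message, hashlib.sha256).digest()


def mac_verify(key: bytes, message: bytes, sig: bytes) -> bool:
    return hmac.compare_digest(mac_sign(key, message), sig)


def make_record(event: str, classical_key: bytes, pq_key: bytes) -> dict:
    # The flag is part of the signed payload, so it cannot be flipped silently.
    payload = {"event": event, "pq_required": True}
    msg = canonical(payload)
    return {
        "payload": payload,
        "classical_sig": mac_sign(classical_key, msg),
        "pq_sig": mac_sign(pq_key, msg),
    }


def verify_record(record: dict, classical_key: bytes, pq_key: bytes) -> bool:
    msg = canonical(record["payload"])
    if not mac_verify(classical_key, msg, record["classical_sig"]):
        return False
    if record["payload"].get("pq_required"):
        pq_sig = record.get("pq_sig")
        return pq_sig is not None and mac_verify(pq_key, msg, pq_sig)
    return True
```

In this sketch an attacker who strips `pq_sig` has two options, and both fail: leave the flag set, and the record is rejected for the missing PQ signature, or flip the flag, and the classical signature no longer matches the payload.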

The detection layer is consensus-based. It combines cross-peer Merkle verification, honey ports, file canaries, and DNS sentinels, but quarantine requires multiple observers to agree before acting. One node can't panic the cluster on its own.
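
A rough sketch of what "multiple observers must agree" can look like, as a simple quorum vote. The class name, the quorum of 2, and the rule that a node's report about itself is ignored are all assumptions made for illustration, not a description of the real detection code.

```python
class QuarantineVoter:
    """Tracks which distinct observers have flagged each suspect node."""

    def __init__(self, quorum: int = 2):  # assumption: the real quorum is not stated
        self.quorum = quorum
        self._reports: dict[str, set[str]] = {}

    def report(self, observer: str, suspect: str) -> bool:
        """Record one observation; return True once the quorum is reached."""
        if observer != suspect:  # assumption: self-reports do not count
            self._reports.setdefault(suspect, set()).add(observer)
        return self.should_quarantine(suspect)

    def should_quarantine(self, suspect: str) -> bool:
        # A set is used so one noisy observer repeating itself counts only once.
        return len(self._reports.get(suspect, set())) >= self.quorum


voter = QuarantineVoter(quorum=2)
voter.report("node-a", "node-d")          # one observer: no action yet
voter.report("node-a", "node-d")          # same observer again: still no action
print(voter.report("node-b", "node-d"))   # second distinct observer reaches quorum
```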

I've already broken it myself twice during deployment. Rolled a binary update and got cascade-quarantined by my own Merkle checker. Tripped a file canary rotating honeypot credentials. Those incidents are published. The system catches real mistakes.

Five tiers from foothold to crown jewel. No cash bounty, just your name on the board, CVE attribution, and write-up rights. Safe harbour under disclose.io terms.

#infosec #security #cryptography #thresholdcrypto #ctf #FROST #postquantum #pentest #redteam #hacking #spikingneuralnetwork #neuromorphic
