Big News! The completely #opensource #LLM #Apertus 🇨🇭 has been released today:

📰 https://www.swisscom.ch/en/about/news/2025/09/02-apertus.html

🤝 The model supports over 1000 languages [EDIT: an earlier version claimed over 1800] and respects the opt-out requests of data owners.

▶ This is great for #publicAI and #transparentAI. If you want to test it for yourself, head over to: https://publicai.co/

🤗 And if you want to download the weights, datasets & FULL TRAINING DETAILS, you can find them here:

https://huggingface.co/collections/swiss-ai/apertus-llm-68b699e65415c231ace3b059

🔧 Tech report: https://huggingface.co/swiss-ai/Apertus-70B-2509/blob/main/Apertus_Tech_Report.pdf

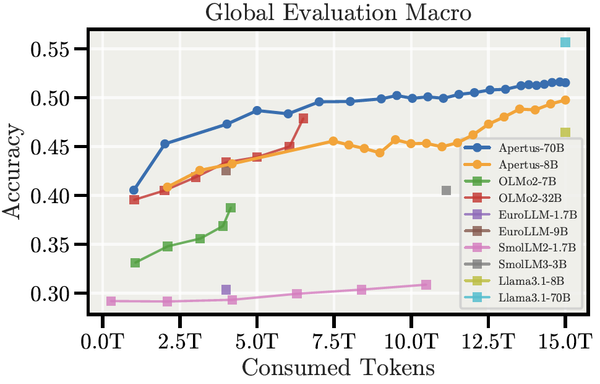

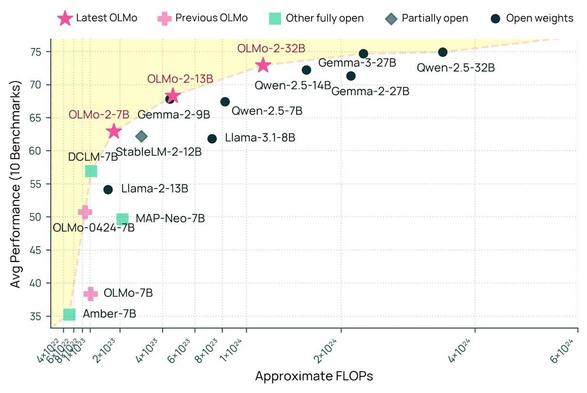

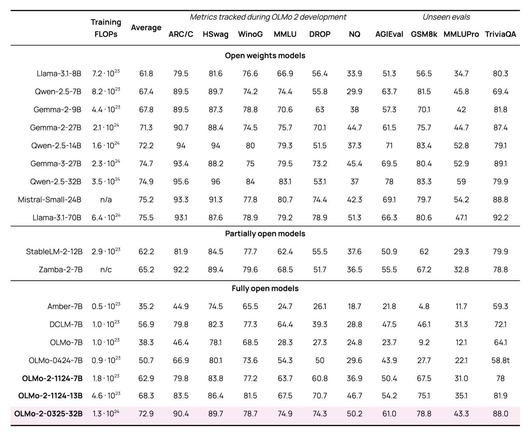

After #Teuken7b and #Olmo2, Apertus is the next big jump in capabilities and performance of #FOSS #LLMs, while also improving #epistemicresilience and #epistemicautonomy with its multilingual approach.

I believe that, especially in sensitive areas like #education, #healthcare, or #academia, there is no alternative to fully open #AI models. Everybody should start building on them and improving them.

#KIMündigkeit #SovereignAI #FOSS #ethicalAI #swissai #LernenmitKI