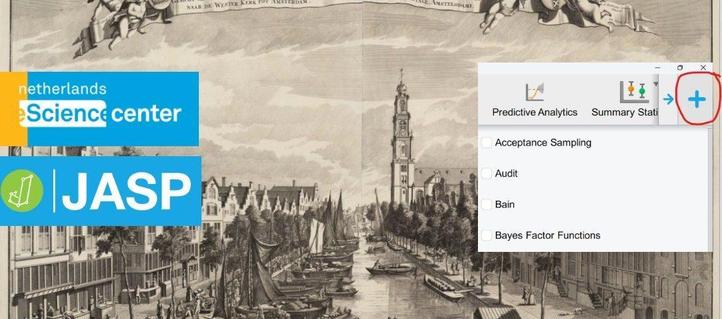

On 16 and 17 February, the #JASP team organised a #hackathon where 27 participants from 6 countries learned how to contribute their own modules to the JASP ecosystem!

Special thanks to @EJWagenmakers, @pabrod, Stefan Verhoeven & Zowi Mens for making the event a success!

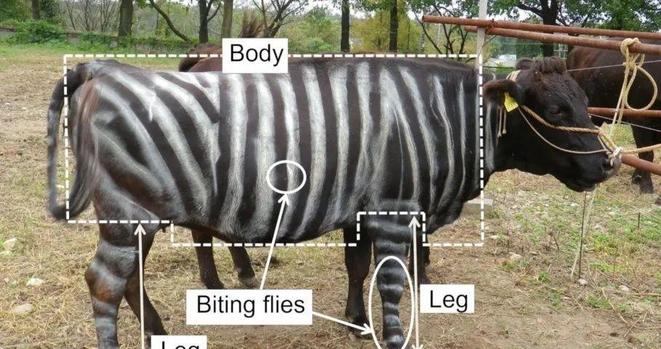

#statstab #486 Testing Bayesian Informative Hypotheses in Five Steps With JASP and R {bain}

Thoughts: The BAIN module lets you go beyond "effect vs no effect" by specifying contrasts (hyp) & obtaining fractional BFs.

#bain #jasp #bayesfactor #bayesian #rstats #r #hypothesis #nhbt #BF #methods #tutorial #guide

https://share.google/cTDvBO7SQM9CpNqlU

#ICYMI: The seats for the Build Your Own #JASP Module #hackathon are quickly running out. It is not only free, we are even offering travel grants!

Want to join us in Amsterdam on 16 and 17 February?

Register here ➡️ https://jasp-stats.org/2026/01/27/apply-for-the-escience-jasp-hackathon-in-amsterdam-update-and-last-call/

Apply for the eScience JASP Hackathon in Amsterdam: Update and Last Call - JASP - Free and User-Friendly Statistical Software

On 16-17 February 2026, the Netherlands eScience Center is hosting a two-day JASP hackathon in Amsterdam. The purpose of the hackathon is to guide participants in developing their very own JASP module; the JASP programming team will be present to…

I have followed and used #JASP on and off over the years, and I like it a lot.

This tutorial makes it really interesting!

"Measurement Invariance with Moderated (Non-)Linear Factor Analysis in JASP"

https://osf.io/preprints/psyarxiv/6ftqg_v1

I hope I can trial it soon!

Do you want to develop your own module in #JASP?

Join our free hackathon on 16-17 February 2026 in Amsterdam!

For more information and registration, see 👉 https://jasp-stats.org/2025/11/21/apply-for-the-escience-jasp-hackathon-and-build-your-own-module-in-amsterdam/

Are you interested in developing your own module in #JASP?

Join our free hackathon on 16-17 February 2026.

More information and registration:

https://jasp-stats.org/2025/11/21/apply-for-the-escience-jasp-hackathon-and-build-your-own-module-in-amsterdam/

I am realizing something about #LLM use and teaching: if I want to make sure I'm assessing student learning and not student saying-stuff-to-ChatGPT, I can't trust students as much or give them the benefit of the doubt. Tonight a student produced some graphs for her results on a stats project that had an extra variable thrown in that wasn't part of her original hypotheses. It was in her dataset, so it wasn't bizarre, and it made some sense, but there were a few things that in the past I would have said were just students being students: no error bars, odd wording of axis labels, and the like. Historically, these (for me) have been within the bounds of "students kind of missing the boat a bit."

Now I think it could be that, or it could be that ChatGPT or Grok or some other LLM cranked these graphs out, or possibly spat out the instructions for making them in #JASP.

I can't trust the student anymore. I can't give her the benefit of the doubt. There is an ever-present alternative explanation for all faults in student work, and it's a very strong explanation.

collaborator, the creator of the statistics software #JASP, will tell you why 👉 https://blog.esciencecenter.nl/8c81bad92d79?source=friends_link&sk=e5766de706dfd576a5872b69c6d97572