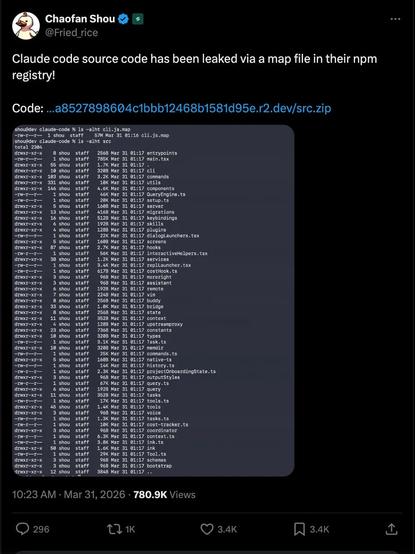

What the Claude Code Leak Teaches Us About AI Supply-Chain Security

This article discusses insecure AI model distribution, as demonstrated by the Claude Code leak. The root cause was a lack of proper encryption and protection mechanisms for sensitive AI code during development and distribution. By exposing Claude Code, the attacker disclosed critical components of a proprietary AI system, potentially compromising its functionality and intellectual property. The researcher used social engineering to convince a developer to share the source code under the guise of collaborating on an unrelated project. The underlying flaw was that the developer did not adequately safeguard the code during distribution, leaving it accessible to malicious actors. The impact included potential reverse-engineering and misuse of the proprietary AI system. The article mentions no specific payout or outcome. To remediate this issue, implement end-to-end encryption for sensitive data, especially during the development and distribution phases. Key lesson: AI supply chains must be secured to protect intellectual property and maintain system functionality. #AI #Cybersecurity #SupplyChainSecurity #DataEncryption #Infosec

Worried about losing an important video if your phone is seized? A new app encrypts and uploads video as it is being recorded, then shares it quickly with your lawyer and family in one click. #ỨngDụngAnToàn #DữLiệuMãHóa #BảoVệQuyền #VideoAnToàn #SafeApp #DataEncryption #CivilRights

https://www.reddit.com/r/SideProject/comments/1qosgpv/if_your_phone_is_taken_your_video_is_gone_im/

A File Format Uncracked for 20 Years

https://landaire.net/a-file-format-uncracked-for-20-years/

#HackerNews #FileFormat #Uncracked #20Years #TechMystery #DataEncryption #Cybersecurity

I think we've seen a good deal of news lately about satellite traffic and how much of it is unencrypted.

https://www.pcmag.com/news/leak-from-the-sky-it-turns-out-a-lot-of-satellite-data-is-unencrypted

And then there was news of Scott Tilley in British Columbia, Canada, who discovered that some satellites in a classified network were sending data in the wrong direction.

https://www.npr.org/2025/10/17/nx-s1-5575254/spacex-starshield-starlink-signal

He has some incredible documentation on what he captured and how he captured it, using fairly common equipment that most geeky-type folks would have lying around their labs.

https://zenodo.org/records/17373141

I was able to replicate his setup and started capturing data just to see what I could find. I have to say that I was taken aback by the sort of thing that I was able to grab, which was, again, completely unencrypted.

#sattelites #DataEncryption #Signal #SBand #SBandEmissions #YouKnowTheRules

Secure File Upload Anywhere – Fast, Encrypted & Hassle-Free | All Pass Hub

Upload files from anywhere in the world with All Pass Hub’s advanced encryption and seamless transfer technology. Enjoy fast, reliable, and secure file sharing with top-level data protection, ensuring your information stays private and protected at every step. Perfect for businesses and individuals who value security, speed, and convenience.

#autonomoussystems #Bufferoverflow #connecteddevices #Crosssitescripting(XSS) #cyberattacks #cybersecurity #dataencryption #Dataprivacy #DenialofService(DoS) #deviceencryption #dosattack #hacking #hackinglot #InternetofThings #iOT #IoTpreventionmethods #IoTrisks #IoTSecurity #IoTvulnerabilities #lot #lotdevices #malware #networksecurity #outdatedprotocols #Physicaltampering #Privacybreaches #secureiot

https://miltonmarketing.com/news/hacking-the-iot-vulnerabilities-and-prevention-methods/