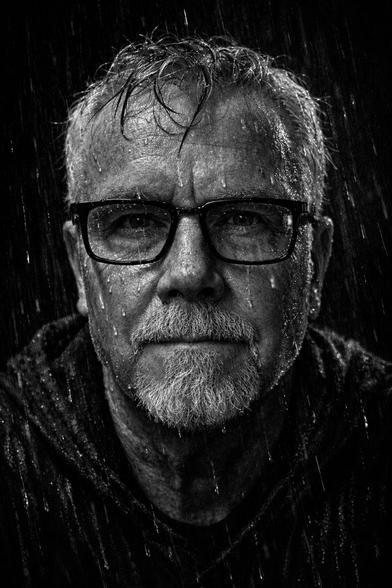

I’ve been writing more about Systems Security Engineering on Medium because I think we’re still having the wrong cybersecurity conversation.

Too much of our field remains trapped in a late-stage compliance mindset:

“Did we meet the control?”

“Did we pass the assessment?”

“Did we buy the tool?”

“Did we check the box?”

Those questions matter, but they are not enough.

Systems Security Engineering asks harder questions earlier:

What mission are we protecting?

What failure conditions matter?

What assumptions are we making about trust?

How do security requirements shape architecture, procurement, supply chains, operations, and assurance evidence across the lifecycle?

That shift matters because cybersecurity is no longer just an IT function. It is a systems problem, a procurement problem, a resilience problem, and increasingly, a national security problem.

My Medium work explores that intersection: where cybersecurity, systems engineering, assurance, resilience, and public-interest security need to converge.

For anyone working in defence, critical infrastructure, government procurement, supply chain security, or cyber policy, I’d welcome your thoughts.

You can find the work here:

https://medium.com/@pjhillier

#Cybersecurity #SystemsSecurityEngineering #SSE #CyberResilience #SupplyChainSecurity #Defence #NationalSecurity #Assurance #SecureByDesign

CISSP/ISO27K