| GitHub | https://github.com/yigitdemirag |

| Website | https://yigit.ai |

| Scholar | https://scholar.google.ch/citations?user=DHJq-UoAAAAJ&hl=en |

Yigit Demirag

- 76 Followers

- 176 Following

- 37 Posts

I'm happy to share our work about meta-learning for analog substrates is well received at NeuMatDeCas, thanks to fantastic collaborators Prof. Emre Neftci and Prof. Regina Dittmann who hosted me at Forschungszentrum Jülich and my PhD supervisor @giacomoi .

[ #code and summary ] https://github.com/YigitDemirag/meta-analog

GitHub - YigitDemirag/meta-analog: JAX implementation of MAML on the analog spiking neural network accelerator for the edge adaptation

JAX implementation of MAML on the analog spiking neural network accelerator for the edge adaptation - GitHub - YigitDemirag/meta-analog: JAX implementation of MAML on the analog spiking neural netw...

@BellecGuillaume @fzenke I agree! I think the best is to publish the the current version as preprint (thanks for suggesting arXiv over BioArXiv) and then open-source quickly. Some labs already shown interests in the work and want to extend it.

Beyond preprint, NeurIPS is in my heart, but need to run some experiments first.

Anything that is theoretically possible will be achieved in practice, no matter what the technical difficulties, if it is desired greatly enough.

- Arthur C. Clarke (Profiles of the Future)

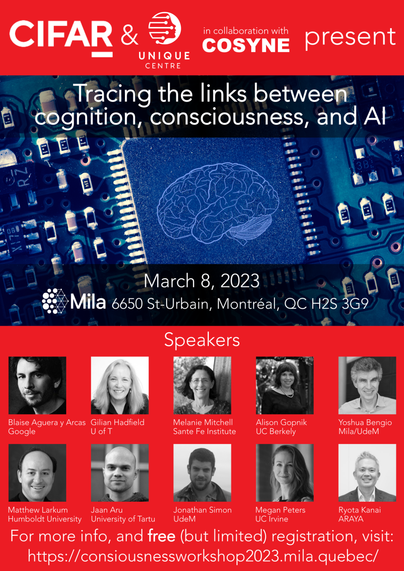

Now that #COSYNE2023 abstracts are in I want to announce a #CIFAR workshop on #cognition, #AI and #consciousness that will take place *the day before COSYNE* (March 8) here at #Mila.

https://consiousnessworkshop2023.mila.quebec/

We have a great line-up and registration is *free*!

Please boost!!!!!

abs: https://arxiv.org/abs/2212.09720

The case for 4-bit precision: k-bit Inference Scaling Laws

Quantization methods reduce the number of bits required to represent each parameter in a model, trading accuracy for smaller memory footprints and inference latencies. However, the final model size depends on both the number of parameters of the original model and the rate of compression. For example, a 30B 8-bit model and a 60B 4-bit model have the same number of bits but may have very different zero-shot accuracies. In this work, we study this trade-off by developing inference scaling laws of zero-shot performance in Large Language Models (LLMs) to determine the bit-precision and model size that maximizes zero-shot performance. We run more than 35,000 experiments with 16-bit inputs and k-bit parameters to examine which zero-shot quantization methods improve scaling for 3 to 8-bit precision at scales of 19M to 176B parameters across the LLM families BLOOM, OPT, NeoX/Pythia, and GPT-2. We find that it is challenging to improve the bit-level scaling trade-off, with the only improvements being the use of a small block size -- splitting the parameters into small independently quantized blocks -- and the quantization data type being used (e.g., Int vs Float). Overall, our findings show that {4-bit} precision is almost universally optimal for total model bits and zero-shot accuracy.

In-context learning in #transformers is one of those mysterious #ML phenomena that needs more attention (no pun intended) from #neuroscientists.

In-context learning is a phenomenon in large language models where the model "learns" a task just by observing some input-output examples, without updating any parameters.

"Simply by adjusting a “prompt”, transformers can be adapted to do many useful things without re-training, such as translation, question-answering, arithmetic, and many other tasks. Using “prompt engineering” to leverage in-context learning became a popular topic of study and discussion." (https://transformer-circuits.pub/2022/in-context-learning-and-induction-heads/index.html)

Interestingly, two recent works (H/T @roydanroy) showed that in-context learning (at least under certain conditions) match solutions found by gradient descent:

1) Transformers learn in-context by gradient descent: https://arxiv.org/abs/2212.07677

2) What learning algorithm is in-context learning? Investigations with linear models: https://arxiv.org/abs/2211.15661

In #neuroscience, synaptic plasticity is generally thought to be the mechanism underlying many of the behavioral improvements that are loosely referred to as learning.

Could in-context #learning be an alternative mechanism underlying at least some behavioral improvements? Given the suggested similarities of the #hippocampus representation learning and transformers (https://arxiv.org/abs/2112.04035), it'd be interesting to see the implications of in-context learning for our understanding of #memory formation in the hippocampus? #NeuroAI