When I say LLMs are good at writing code that they're bad at modifying, no matter how we prompt, this is what I'm talking about.

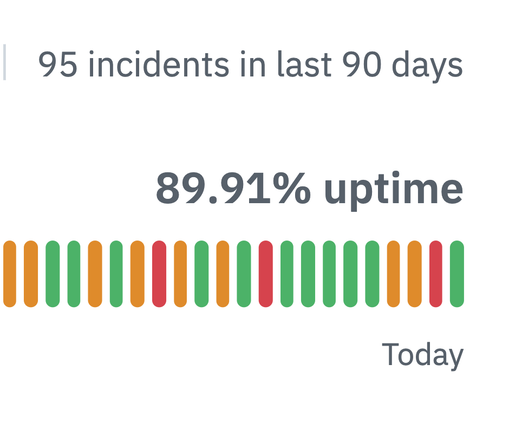

Note also that problem completion rates never come close to 100% under any conditions. I've never seen that happen, either, and I suspect nobody has.

This is that "Fool's Errand" I've been talking about.