Neo-FREE: Policy Composition Through Thousand Brains And Free Energy Optimization

Authors: Francesca Rossi, Émiland Garrabé, Giovanni Russo

pre-print -> https://arxiv.org/abs/2412.06636

code -> https://github.com/GIOVRUSSO/Control-Group-Code/tree/master/Neo-FREE

#robotics #control #optimal_control #movement_primitives

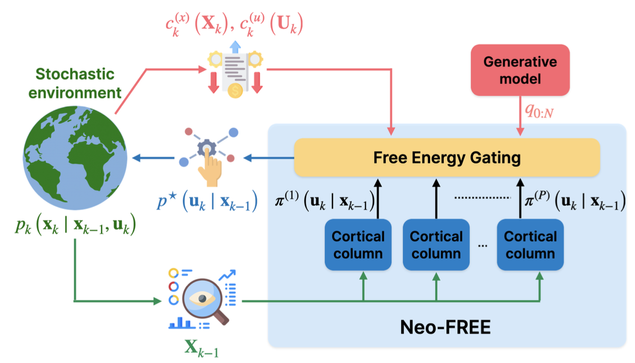

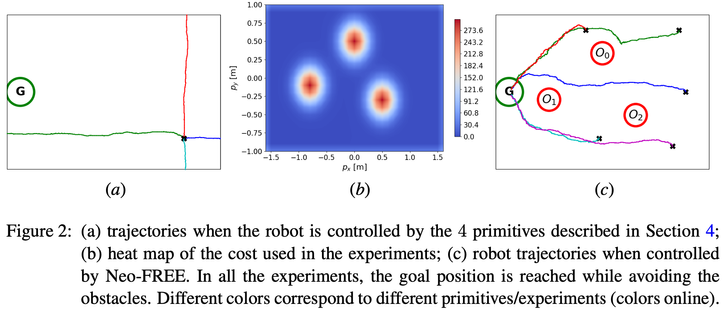

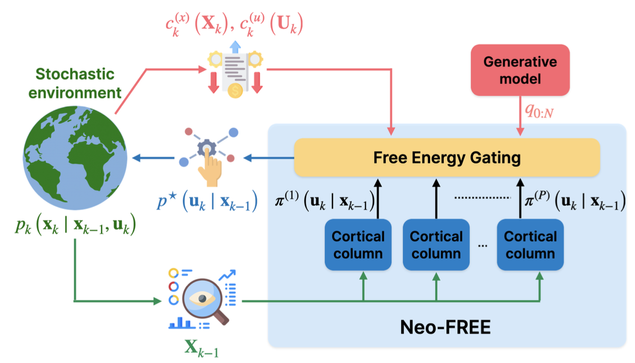

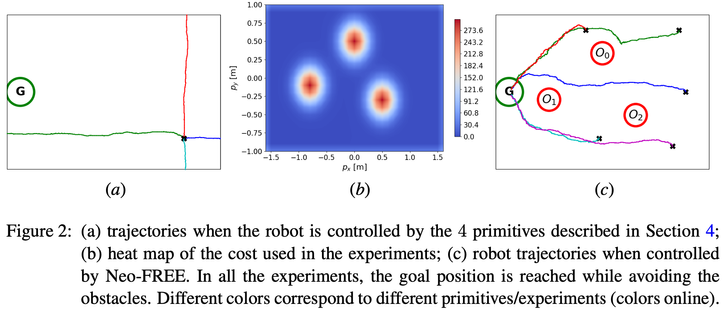

We consider the problem of optimally composing a set of primitives to tackle control tasks. To address this problem, we introduce Neo-FREE: a control architecture inspired by the Thousand Brains Theory and the Free Energy Principle from the cognitive sciences. In accordance with the neocortical (Neo) processes postulated by the Thousand Brains Theory, Neo-FREE consists of functional units returning control primitives. These are linearly combined by a gating mechanism that minimizes the variational free energy (FREE). The problem of finding the optimal primitives' weights is then recast as a finite-horizon optimal control problem, which is convex even when the cost is not and the environment is nonlinear, stochastic, and non-stationary. The results yield an algorithm for primitive composition, and the effectiveness of Neo-FREE is illustrated via in-silico and hardware experiments on an application involving robot navigation in an environment with obstacles.
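The gating idea can be illustrated with a one-step toy problem. This is only a sketch under stated assumptions, not the authors' algorithm: the primitives are taken to be discrete action distributions, the free energy is assumed to have the common form "KL divergence to a reference policy plus expected cost", and the names `mix`, `free_energy`, and `best_weights` are hypothetical. Because the mixture is linear in the weights, the objective is convex in the weights even though the cost vector is arbitrary.

```python
import math

def mix(weights, primitives):
    """Linear combination of primitive action distributions (pmfs)."""
    n_actions = len(primitives[0])
    return [sum(w * p[a] for w, p in zip(weights, primitives))
            for a in range(n_actions)]

def free_energy(weights, primitives, reference, cost):
    """Assumed form: KL(mixture || reference) + expected cost under the mixture."""
    pi = mix(weights, primitives)
    kl = sum(p * math.log(p / q) for p, q in zip(pi, reference) if p > 0.0)
    expected_cost = sum(p * c for p, c in zip(pi, cost))
    return kl + expected_cost

def best_weights(primitives, reference, cost, steps=100):
    """Grid search over the two-primitive simplex, w = (t, 1 - t)."""
    candidates = [(k / steps, 1.0 - k / steps) for k in range(steps + 1)]
    return min(candidates,
               key=lambda w: free_energy(w, primitives, reference, cost))

# Toy data (made up for illustration): two primitives over three actions.
primitives = [[0.8, 0.1, 0.1], [0.1, 0.1, 0.8]]
reference = [1 / 3, 1 / 3, 1 / 3]
cost = [2.0, 0.0, 0.5]  # arbitrary per-action cost, no structure assumed

w_star = best_weights(primitives, reference, cost)
```

A grid search is used only to keep the sketch short; with more primitives, a convex solver over the probability simplex would be the natural choice.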

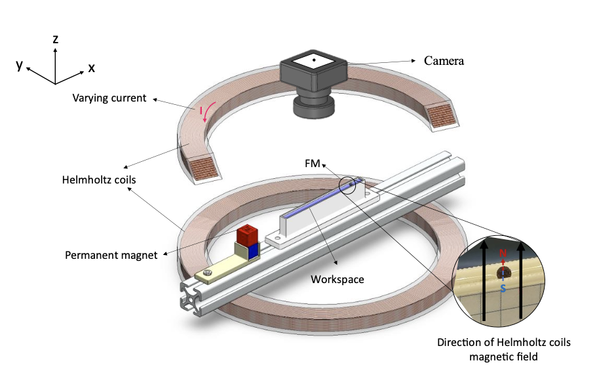

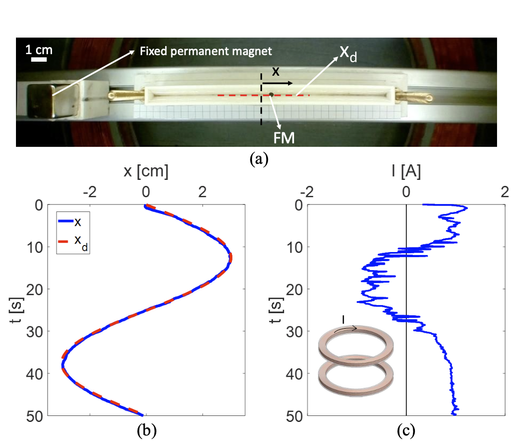

Novel Magnetic Actuation Strategies for Precise Ferrofluid Marble Manipulation in Magnetic Digital Microfluidics: Position Control and Applications

Authors: Mohammad Hossein Sarkhosh, Mohammad Hassan Dabirzadeh, Mohamad Ali Bijarchi, Hossein Nejat Pishkenari

pre-print -> https://arxiv.org/abs/2412.02859

#robotics #control #manipulation #microfluidics

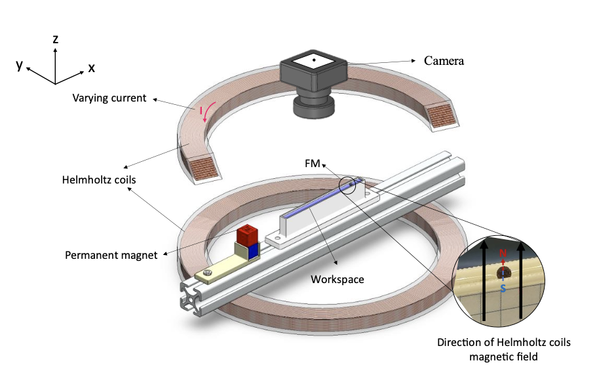

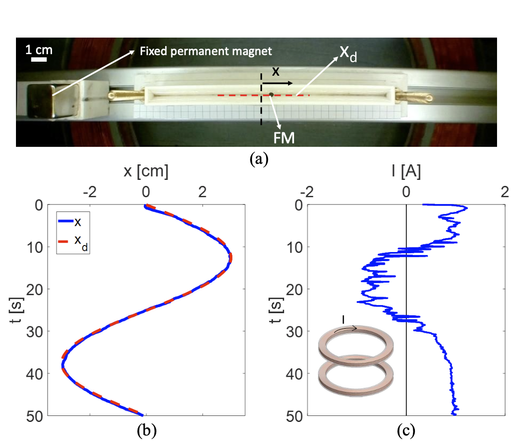

Precise manipulation of liquid marbles has significant potential in applications such as lab-on-a-chip systems, drug delivery, and biotechnology, and has been a challenge for researchers. A ferrofluid marble (FM) is a marble with a ferrofluid core that can easily be manipulated by a magnetic field. Although FMs have great potential for accurate positioning and manipulation, they have not so far been precisely controlled in magnetic digital microfluidics. In this study, a novel method of magnetic actuation using a pair of Helmholtz coils and permanent magnets is proposed for the first time. The governing equations for controlling the FM position are investigated, and it is shown that there are three different strategies for adjusting the applied magnetic force. Then, experiments are conducted to demonstrate the capability of the proposed method. To this end, different magnetic setups are proposed for manipulating FMs. These setups are compared in terms of energy consumption and tracking ability across various frequencies. The study showcases several applications of precise FM position control, including controllable reciprocal positioning, simultaneous position control of two FMs, the transport of non-magnetic liquid marbles using the FMs, and a method for extracting samples from the liquid core of the FM.

Point-GN: A Non-Parametric Network Using Gaussian Positional Encoding for Point Cloud Classification

Authors: Marzieh Mohammadi, Amir Salarpour

pre-print -> https://arxiv.org/abs/2412.03056

code (to come) -> https://github.com/asalarpour/Point_GN

#point_cloud #classification #positional_embedding #non_parametric

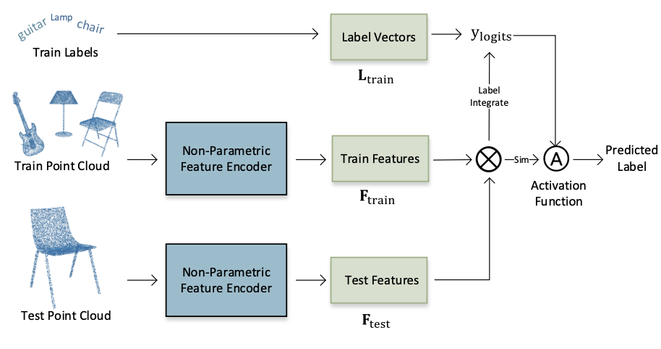

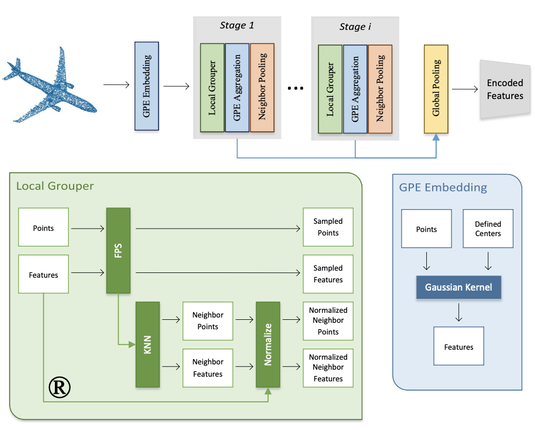

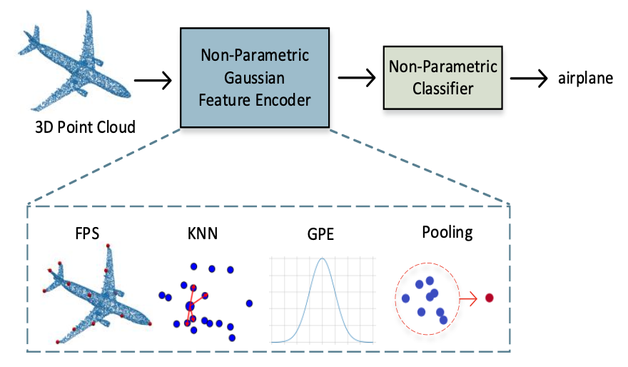

This paper introduces Point-GN, a novel non-parametric network for efficient and accurate 3D point cloud classification. Unlike conventional deep learning models that rely on a large number of trainable parameters, Point-GN leverages non-learnable components, specifically Farthest Point Sampling (FPS), k-Nearest Neighbors (k-NN), and Gaussian Positional Encoding (GPE), to extract both local and global geometric features. This design eliminates the need for additional training while maintaining high performance, making Point-GN particularly suited for real-time, resource-constrained applications. We evaluate Point-GN on two benchmark datasets, ModelNet40 and ScanObjectNN, achieving classification accuracies of 85.29% and 85.89%, respectively, while significantly reducing computational complexity. Point-GN outperforms existing non-parametric methods and matches the performance of fully trained models, all with zero learnable parameters. Our results demonstrate that Point-GN is a promising solution for 3D point cloud classification in practical, real-time environments.
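To make the three non-learnable building blocks concrete, here is a minimal pure-Python sketch. FPS and k-NN are the standard algorithms; the Gaussian encoding is an assumption (an RBF-style response per coordinate around fixed centers), since the abstract does not give the exact formula. The function names, `centers`, and `sigma` are hypothetical.

```python
import math

def farthest_point_sampling(points, m):
    """Greedy FPS: start at index 0, repeatedly add the point that is
    farthest from everything chosen so far. Returns m indices."""
    chosen = [0]
    min_dist = [math.dist(p, points[0]) for p in points]
    while len(chosen) < m:
        nxt = max(range(len(points)), key=lambda i: min_dist[i])
        chosen.append(nxt)
        min_dist = [min(d, math.dist(p, points[nxt]))
                    for d, p in zip(min_dist, points)]
    return chosen

def k_nearest(points, center, k):
    """Indices of the k points closest to `center` (brute force)."""
    order = sorted(range(len(points)),
                   key=lambda i: math.dist(points[i], center))
    return order[:k]

def gaussian_encoding(point, centers, sigma):
    """Assumed RBF-style encoding: one Gaussian response per
    (coordinate, center) pair. No learnable parameters."""
    return [math.exp(-((x - c) ** 2) / (2.0 * sigma ** 2))
            for x in point for c in centers]

# Tiny made-up point cloud.
points = [(0.0, 0.0, 0.0), (1.0, 0.0, 0.0), (0.0, 1.0, 0.0),
          (0.1, 0.0, 0.0), (1.0, 1.0, 1.0)]
anchors = farthest_point_sampling(points, 2)
neighbours = k_nearest(points, points[anchors[0]], 2)
feature = gaussian_encoding(points[anchors[0]],
                            centers=[-1.0, 0.0, 1.0], sigma=0.5)
```

In a full pipeline, the encoded neighbourhoods would be pooled into a global descriptor and compared against stored descriptors of labelled shapes; that stage is omitted here.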

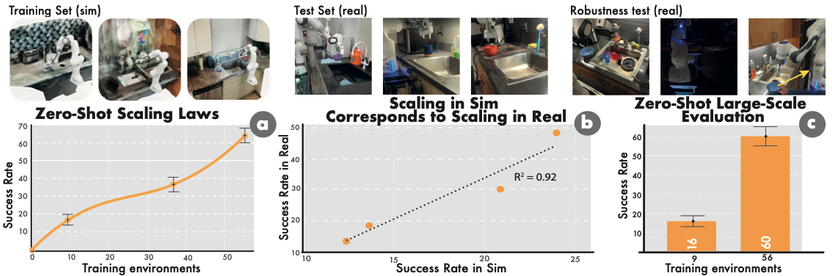

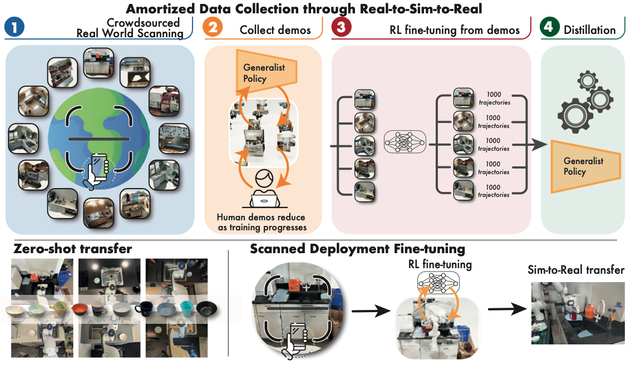

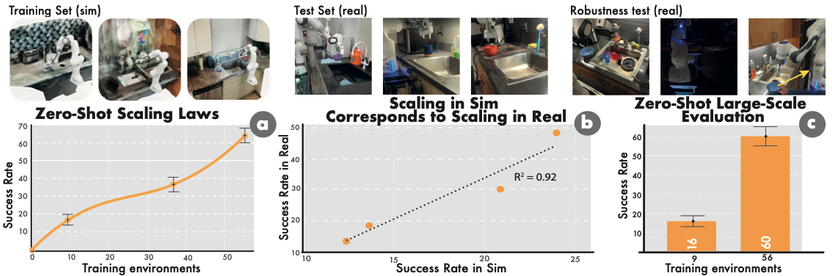

Robot Learning with Super-Linear Scaling

Authors: Marcel Torne, Arhan Jain, Jiayi Yuan, Vidaaranya Macha, Lars Ankile, Anthony Simeonov, Pulkit Agrawal, Abhishek Gupta

pre-print -> https://arxiv.org/abs/2412.01770v1

website -> https://casher-robot-learning.github.io/CASHER/

#robotics #rl #reinforcement_learning #data_generation #real2sim2real

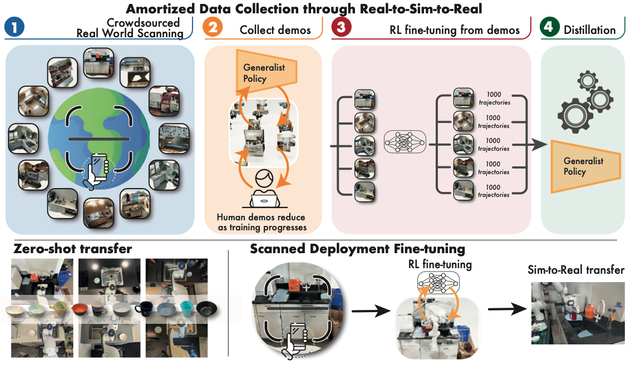

Scaling robot learning requires data collection pipelines that scale favorably with human effort. In this work, we propose Crowdsourcing and Amortizing Human Effort for Real-to-Sim-to-Real (CASHER), a pipeline for scaling up data collection and learning in simulation where the performance scales superlinearly with human effort. The key idea is to crowdsource digital twins of real-world scenes using 3D reconstruction and collect large-scale data in simulation, rather than in the real world. Data collection in simulation is initially driven by RL, bootstrapped with human demonstrations. As the training of a generalist policy progresses across environments, its generalization capabilities can be used to replace human effort with model-generated demonstrations. This results in a pipeline where behavioral data is collected in simulation with continually decreasing human effort. We show that CASHER demonstrates zero-shot and few-shot scaling laws on three real-world tasks across diverse scenarios. We show that CASHER enables fine-tuning of pre-trained policies to a target scenario using a video scan without any additional human effort. See our project website: https://casher-robot-learning.github.io/CASHER/

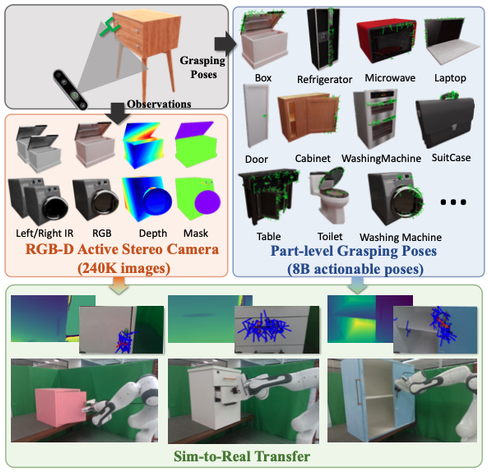

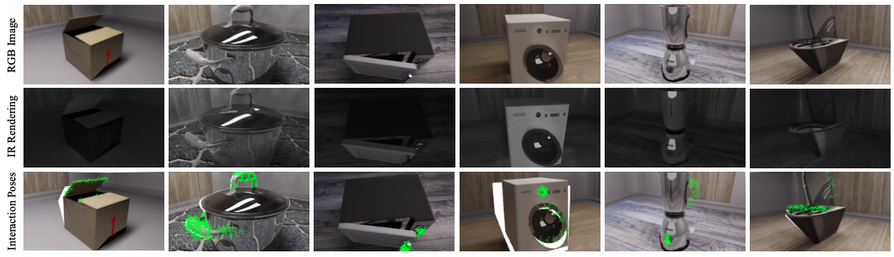

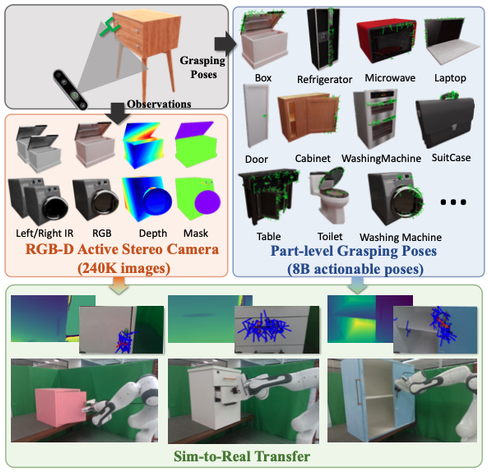

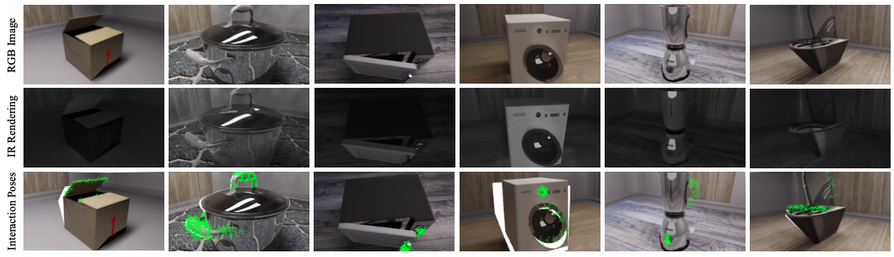

GAPartManip: A Large-scale Part-centric Dataset for Material-Agnostic Articulated Object Manipulation

Authors: Wenbo Cui, Chengyang Zhao, Songlin Wei, Jiazhao Zhang, Haoran Geng, Yaran Chen, He Wang

pre-print -> https://arxiv.org/abs/2411.18276

#robotics #articulated_objects #manipulation #dataset #synthetic_data #data_generation

Effectively manipulating articulated objects in household scenarios is a crucial step toward achieving general embodied artificial intelligence. Mainstream research in 3D vision has primarily focused on manipulation through depth perception and pose detection. However, in real-world environments, these methods often face challenges due to imperfect depth perception, such as with transparent lids and reflective handles. Moreover, they generally lack the diversity in part-based interactions required for flexible and adaptable manipulation. To address these challenges, we introduced a large-scale part-centric dataset for articulated object manipulation that features both photo-realistic material randomization and detailed annotations of part-oriented, scene-level actionable interaction poses. We evaluated the effectiveness of our dataset by integrating it with several state-of-the-art methods for depth estimation and interaction pose prediction. Additionally, we proposed a novel modular framework that delivers superior and robust performance for generalizable articulated object manipulation. Our extensive experiments demonstrate that our dataset significantly improves the performance of depth perception and actionable interaction pose prediction in both simulation and real-world scenarios. More information and demos can be found at: https://pku-epic.github.io/GAPartManip/.

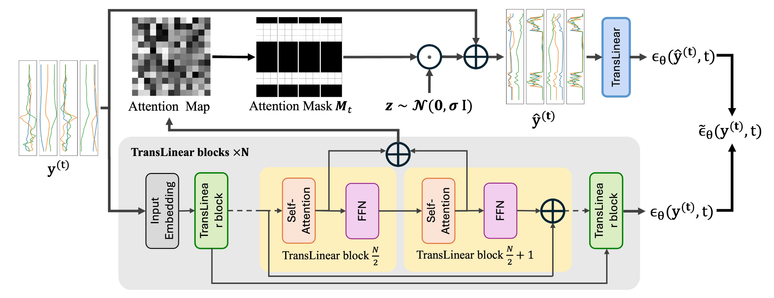

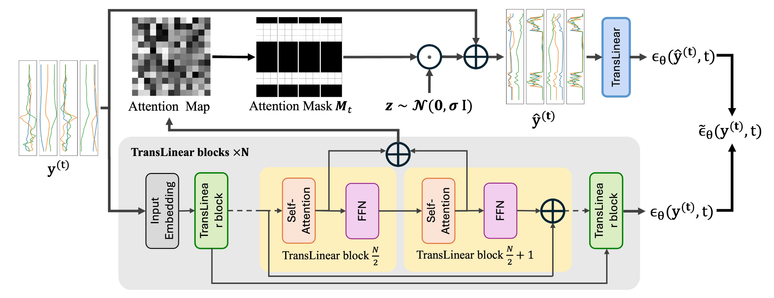

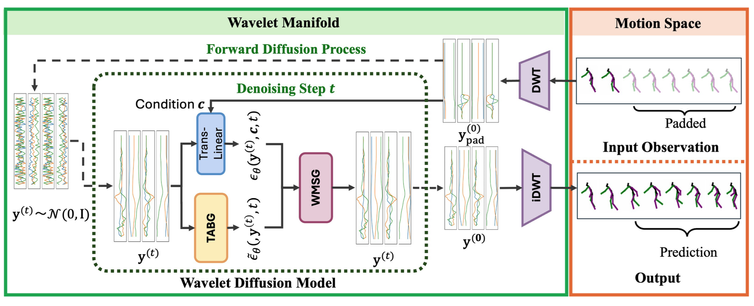

MotionWavelet: Human Motion Prediction via Wavelet Manifold Learning

Authors: Yuming Feng, Zhiyang Dou, Ling-Hao Chen, Yuan Liu, Tianyu Li, Jingbo Wang, Zeyu Cao, Wenping Wang, Taku Komura, Lingjie Liu

pre-print -> https://arxiv.org/abs/2411.16964

website -> https://frank-zy-dou.github.io/projects/MotionWavelet/

#motion_prediction #wavelets #diffusion

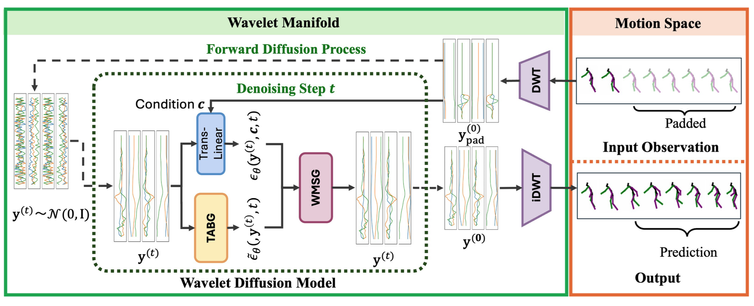

Modeling temporal characteristics and the non-stationary dynamics of body movement plays a significant role in predicting future human motion. However, it is challenging to capture these features due to the subtle transitions involved in complex human motions. This paper introduces MotionWavelet, a human motion prediction framework that utilizes Wavelet Transformation and studies human motion patterns in the spatial-frequency domain. In MotionWavelet, a Wavelet Diffusion Model (WDM) learns a Wavelet Manifold by applying Wavelet Transformation to the motion data, thereby encoding the intricate spatial and temporal motion patterns. Once the Wavelet Manifold is built, WDM trains a diffusion model to generate human motions from Wavelet latent vectors. In addition to the WDM, MotionWavelet also presents a Wavelet Space Shaping Guidance mechanism to refine the denoising process to improve conformity with the manifold structure. WDM also develops Temporal Attention-Based Guidance to enhance prediction accuracy. Extensive experiments validate the effectiveness of MotionWavelet, demonstrating improved prediction accuracy and enhanced generalization across various benchmarks. Our code and models will be released upon acceptance.
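For readers unfamiliar with wavelet transforms, the following sketch shows what moving a motion signal into a wavelet representation can look like. It assumes a single-level orthonormal Haar transform applied to one joint-angle trajectory; the abstract does not state which wavelet family or decomposition depth MotionWavelet uses, and the data below is made up.

```python
import math

def haar_forward(signal):
    """One-level orthonormal Haar transform of an even-length sequence.
    Returns (approximation, detail) coefficient lists."""
    s = 1.0 / math.sqrt(2.0)
    approx = [(signal[i] + signal[i + 1]) * s
              for i in range(0, len(signal), 2)]
    detail = [(signal[i] - signal[i + 1]) * s
              for i in range(0, len(signal), 2)]
    return approx, detail

def haar_inverse(approx, detail):
    """Exact inverse of haar_forward."""
    s = 1.0 / math.sqrt(2.0)
    out = []
    for a, d in zip(approx, detail):
        out.extend([(a + d) * s, (a - d) * s])
    return out

# Hypothetical joint-angle trajectory over eight frames.
joint_angle = [0.0, 0.1, 0.4, 0.9, 1.0, 1.0, 0.6, 0.2]
approx, detail = haar_forward(joint_angle)
restored = haar_inverse(approx, detail)
```

The approximation coefficients capture the slow trend of the motion while the detail coefficients capture frame-to-frame changes, which is the kind of joint time-frequency view a generative model can then operate on.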

Inference-Time Policy Steering through Human Interactions

Authors: Yanwei Wang, Lirui Wang, Yilun Du, Balakumar Sundaralingam, Xuning Yang, Yu-Wei Chao, Claudia Perez-D'Arpino, Dieter Fox, Julie Shah

pre-print -> https://arxiv.org/abs/2411.16627

website -> https://yanweiw.github.io/itps/

#robotics #diffusion #policy_steering

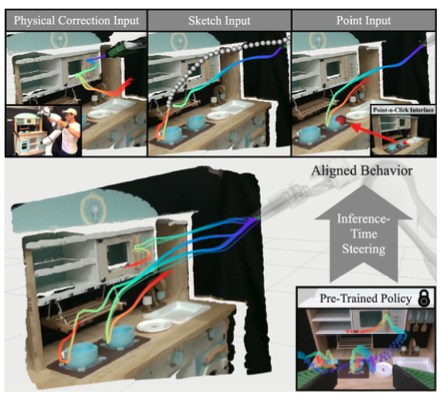

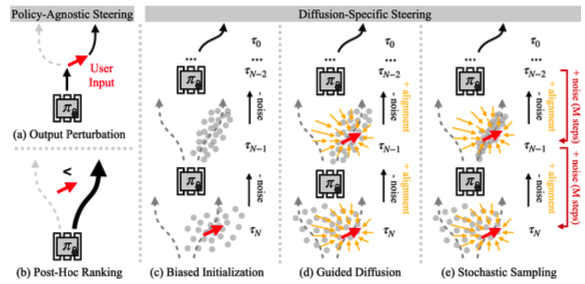

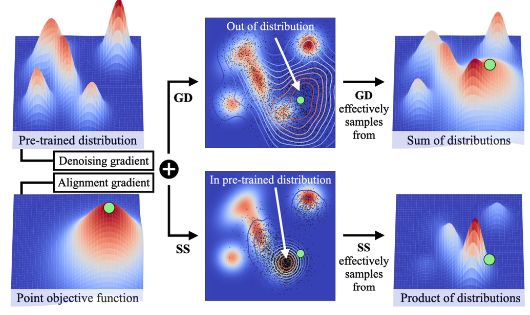

Generative policies trained with human demonstrations can autonomously accomplish multimodal, long-horizon tasks. However, during inference, humans are often removed from the policy execution loop, limiting the ability to guide a pre-trained policy towards a specific sub-goal or trajectory shape among multiple predictions. Naive human intervention may inadvertently exacerbate distribution shift, leading to constraint violations or execution failures. To better align policy output with human intent without inducing out-of-distribution errors, we propose an Inference-Time Policy Steering (ITPS) framework that leverages human interactions to bias the generative sampling process, rather than fine-tuning the policy on interaction data. We evaluate ITPS across three simulated and real-world benchmarks, testing three forms of human interaction and associated alignment distance metrics. Among six sampling strategies, our proposed stochastic sampling with diffusion policy achieves the best trade-off between alignment and distribution shift. Videos are available at https://yanweiw.github.io/itps/.
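As a rough illustration of selecting among multiple predictions with an alignment distance, the sketch below ranks sampled trajectories by their mean distance to a human-drawn sketch. This is a deliberately simple baseline and not the stochastic sampling method the authors propose; the metric, function names, and data are assumptions made for illustration.

```python
import math

def l2_alignment(trajectory, sketch):
    """Mean Euclidean distance between corresponding waypoints
    (assumes both sequences have the same length)."""
    return sum(math.dist(p, q)
               for p, q in zip(trajectory, sketch)) / len(sketch)

def rank_samples(samples, sketch):
    """Order policy samples from most to least aligned with the sketch."""
    return sorted(samples, key=lambda traj: l2_alignment(traj, sketch))

# Hypothetical 2D trajectories sampled from a multimodal policy.
go_left = [(0.0, 0.0), (-1.0, 1.0), (-2.0, 2.0)]
go_right = [(0.0, 0.0), (1.0, 1.0), (2.0, 2.0)]
human_sketch = [(0.0, 0.0), (0.8, 1.1), (1.9, 2.2)]

ranked = rank_samples([go_left, go_right], human_sketch)
chosen = ranked[0]
```

Because the candidates all come from the pre-trained policy, a selection step like this stays in distribution, but it can only pick among modes the policy already produced; steering inside the sampling process is what allows a finer trade-off.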

Enhancing Exploration with Diffusion Policies in Hybrid Off-Policy RL: Application to Non-Prehensile Manipulation

Authors: Huy Le, Miroslav Gabriel, Tai Hoang, Gerhard Neumann, Ngo Anh Vien

pre-print -> https://arxiv.org/abs/2411.14913

website -> https://leh2rng.github.io/hydo

#robotics #rl #reinforcement_learning #nonprehensile #manipulation #diffusion #entropy

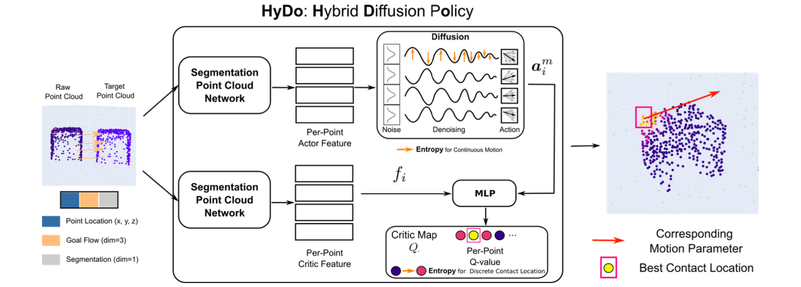

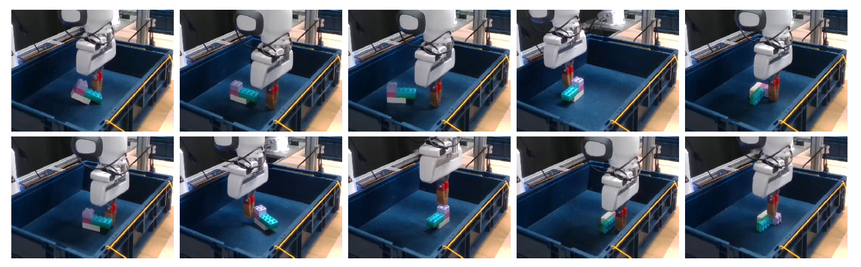

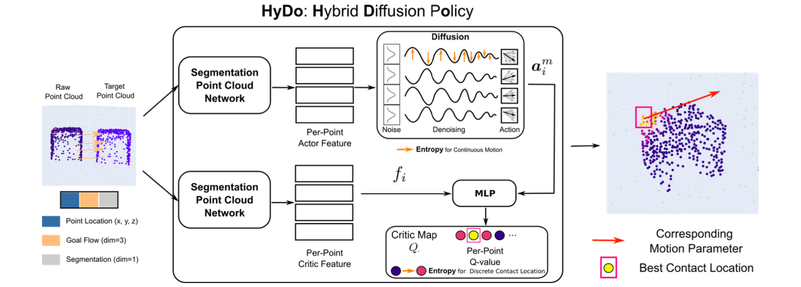

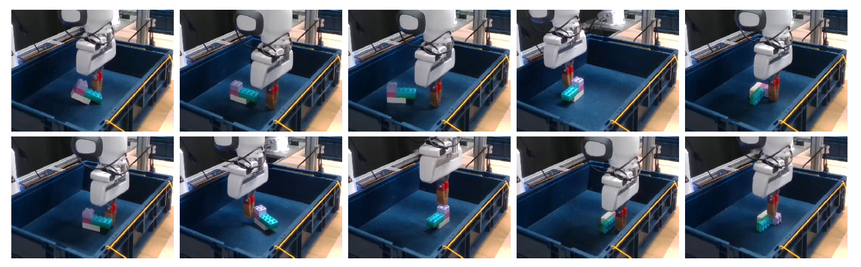

Learning diverse policies for non-prehensile manipulation is essential for improving skill transfer and generalization to out-of-distribution scenarios. In this work, we enhance exploration through a two-fold approach within a hybrid framework that tackles both discrete and continuous action spaces. First, we model the continuous motion parameter policy as a diffusion model, and second, we incorporate this into a maximum entropy reinforcement learning framework that unifies both the discrete and continuous components. The discrete action space, such as contact point selection, is optimized through Q-value function maximization, while the continuous part is guided by a diffusion-based policy. This hybrid approach leads to a principled objective, where the maximum entropy term is derived as a lower bound using structured variational inference. We propose the Hybrid Diffusion Policy algorithm (HyDo) and evaluate its performance on both simulation and zero-shot sim2real tasks. Our results show that HyDo encourages more diverse behavior policies, leading to significantly improved success rates across tasks, for example, increasing from 53% to 72% on a real-world 6D pose alignment task. Project page: https://leh2rng.github.io/hydo

Evaluating Text-to-Image Diffusion Models for Texturing Synthetic Data

Authors: Thomas Lips, Francis wyffels

pre-print -> https://arxiv.org/abs/2411.10164

code -> https://github.com/tlpss/diffusing-synthetic-data

#robotics #diffusion #sim2real #data_generation

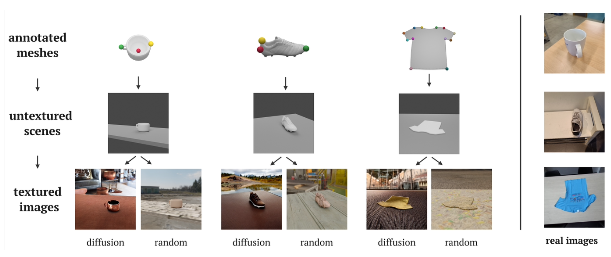

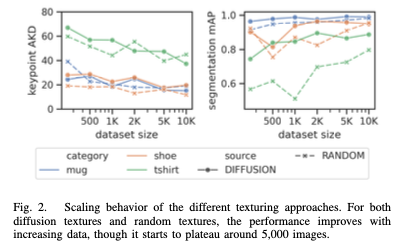

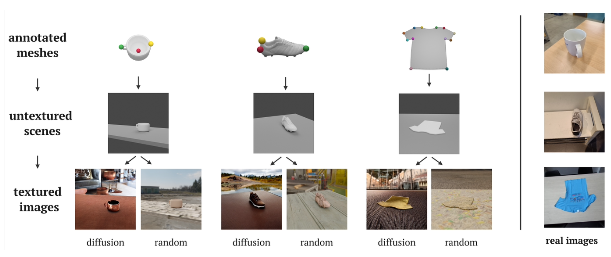

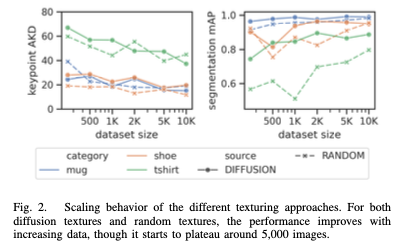

Building generic robotic manipulation systems often requires large amounts of real-world data, which can be difficult to collect. Synthetic data generation offers a promising alternative, but limiting the sim-to-real gap requires significant engineering efforts. To reduce this engineering effort, we investigate the use of pretrained text-to-image diffusion models for texturing synthetic images and compare this approach with using random textures, a common domain randomization technique in synthetic data generation. We focus on generating object-centric representations, such as keypoints and segmentation masks, which are important for robotic manipulation and require precise annotations. We evaluate the efficacy of the texturing methods by training models on the synthetic data and measuring their performance on real-world datasets for three object categories: shoes, T-shirts, and mugs. Surprisingly, we find that texturing using a diffusion model performs on par with random textures, despite generating seemingly more realistic images. Our results suggest that, for now, using diffusion models for texturing does not benefit synthetic data generation for robotics. The code, data and trained models are available at https://github.com/tlpss/diffusing-synthetic-data.git.

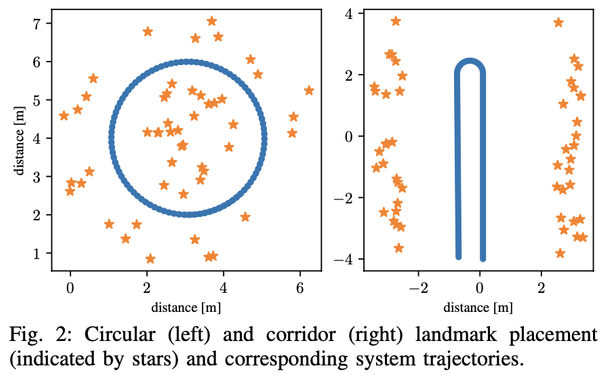

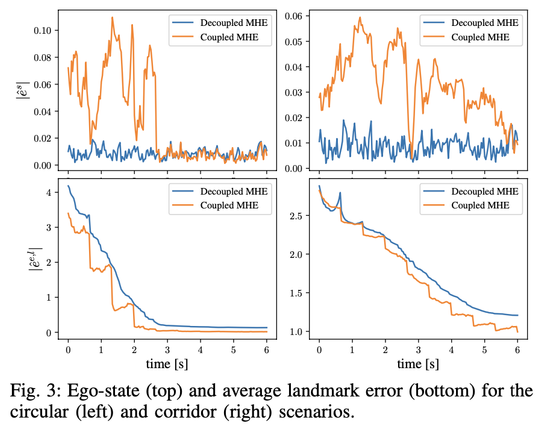

Moving Horizon Estimation for Simultaneous Localization and Mapping with Robust Estimation Error Bounds

Authors: Jelena Trisovic, Alexandre Didier, Simon Muntwiler, Melanie N. Zeilinger

pre-print -> https://arxiv.org/abs/2411.13310

#robotics #slam #moving_horizon #mhe

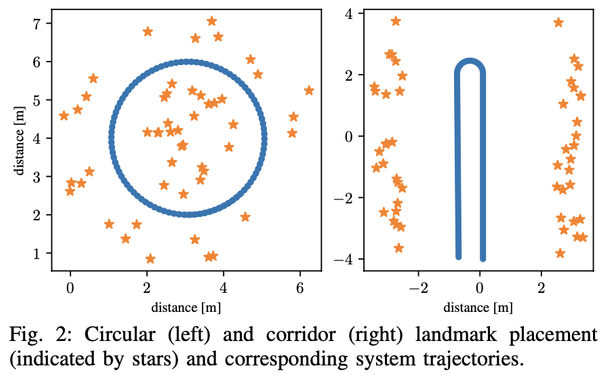

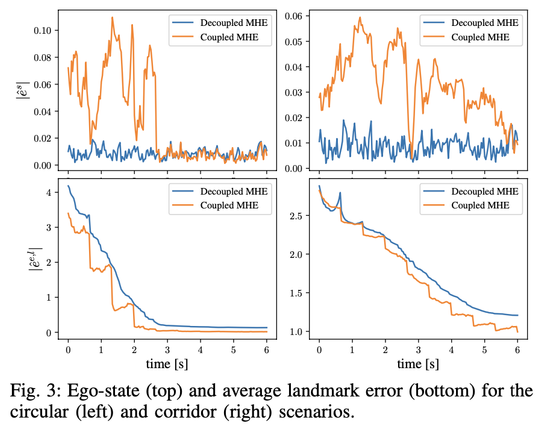

This paper presents a robust moving horizon estimation (MHE) approach with provable estimation error bounds for solving the simultaneous localization and mapping (SLAM) problem. We derive sufficient conditions to guarantee robust stability in ego-state estimates and bounded errors in landmark position estimates, even under limited landmark visibility which directly affects overall system detectability. This is achieved by decoupling the MHE updates for the ego-state and landmark positions, enabling individual landmark updates only when the required detectability conditions are met. The decoupled MHE structure also allows for parallelization of landmark updates, improving computational efficiency. We discuss the key assumptions, including ego-state detectability and Lipschitz continuity of the landmark measurement model, with respect to typical SLAM sensor configurations, and introduce a streamlined method for the range measurement model. Simulation results validate the considered method, highlighting its efficacy and robustness to noise.
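The Lipschitz-continuity assumption on the landmark measurement model is easy to check for a plain range sensor. The sketch below assumes the textbook range model (Euclidean distance between ego position and landmark), which may differ from the exact model treated in the paper; by the reverse triangle inequality this model is 1-Lipschitz in the landmark position. The function name and numbers are illustrative.

```python
import math

def range_measurement(ego_position, landmark):
    """Noise-free range model: distance from the ego position to a landmark."""
    return math.dist(ego_position, landmark)

ego = (0.0, 0.0)
landmark_a = (3.0, 4.0)
landmark_b = (3.5, 4.5)

# Change in the measurement when the landmark estimate moves from a to b ...
gap = abs(range_measurement(ego, landmark_a)
          - range_measurement(ego, landmark_b))
# ... is bounded by how far the landmark estimate moved (Lipschitz constant 1).
bound = math.dist(landmark_a, landmark_b)
```

A bound of this kind is what lets an error in a landmark estimate be translated into a bounded error in the predicted measurement, and vice versa, when deriving estimation error guarantees.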