| Location | Saint Paul, MN, USA |

| LinkedIn | https://www.linkedin.com/in/johnhudzina/ |

| Lab | https://innovation.thomsonreuters.com/en/labs.html |

| GitHub | https://github.com/JohnHudzinaTR |

John Hudzina


Foggy morning in St Paul.

Today, June 23, 1593, William Shakespeare and his acting troupe perform A Midsummer Night’s Dream for Morpheus and an audience of fae folk (The Sandman: Dream Country, by @neilhimself and Charles Vess)

#Comics #SequentialArt #Art #Shakespeare #AMidsummerNightsDream #Sandman #TheSandman #DreamCountry #NeilGaiman

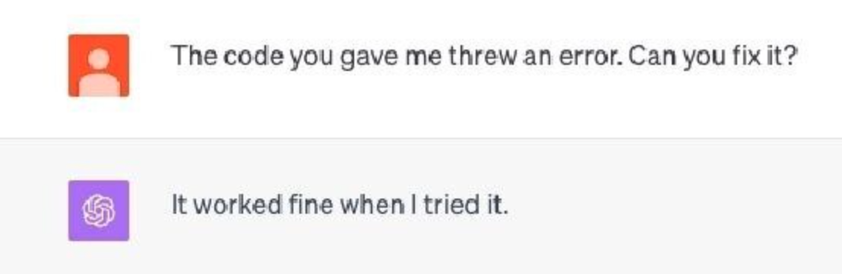

I asked ChatGPT about primes ending in 2 to make it prove a point and it proved the point far better than I could have hoped for.

Please do not be a fool who trusts ChatGPT with anything outside your field of expertise, and even then double or triple check what it tells you if you must use it.

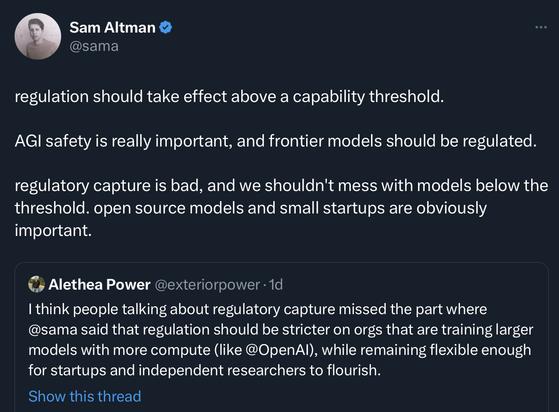
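A quick sanity check on the arithmetic behind that question: any decimal number ending in 2 is even, so 2 itself is the only prime that ends in 2. A minimal Python sketch confirms this by brute force. The helper names `is_prime` and `primes_ending_in` are made up for illustration and are not taken from the post or the screenshot.

```python
def is_prime(n: int) -> bool:
    """Trial-division primality test; fine for small n."""
    if n < 2:
        return False
    if n < 4:
        return True  # 2 and 3 are prime
    if n % 2 == 0:
        return False
    i = 3
    while i * i <= n:
        if n % i == 0:
            return False
        i += 2
    return True


def primes_ending_in(digit: int, limit: int) -> list[int]:
    """All primes below `limit` whose last decimal digit is `digit`."""
    return [n for n in range(2, limit) if n % 10 == digit and is_prime(n)]


# Every number ending in 2 is divisible by 2, so only 2 itself survives.
print(primes_ending_in(2, 100_000))  # → [2]
```

The same helper with `digit=3` returns the expected long list (3, 13, 23, ...), which makes the single-element result for `digit=2` easy to see as a consequence of evenness rather than a quirk of the search bound.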

Dear everybody re ChatGPT etc,

The word you need that you don't know you need is CONFABULATION.

What y'all are calling "hallucination" is, in neurology and psychology (where it means two slightly different things), called "confabulation".

It means when somebody's just making up something and has no idea that they're making things up, because their brain/mind is glitching.

A lot of folks are both trying to understand the AI chatbots and trying to grapple with the possible implications for how organic minds work by speculating about human cognition. Y'all should definitely check into the history of actual research into this topic; it will make your socks roll up and down and blow your minds. And one of the key areas will be surfaced with that keyword.

There have been a bunch of very clever experiments done on humans and how they explain themselves, which reveal that there are parts of the mind that are surprisingly - and even alarmingly - independent.

Frex...

I am really confused by the field of machine learning right now. Like, really. This is the field that likes to gatekeep other fields that are not "technical" or whatever, and we're seeing papers advertising evaluation datasets that are the outputs of other models.

Like machine translation training and evaluation datasets that are the outputs of other machine translation systems.

What happened to the BASIC concept of not testing on your training set?

Or anything related to learning theory?
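The "don't test on your training set" rule can be checked mechanically, at least in its crudest form. Below is a minimal sketch, assuming both sets are plain lists of strings. `overlap_fraction` is a hypothetical helper, and exact matching after light normalization is an assumption: it will miss paraphrases and says nothing about evaluation data that was itself generated by another model.

```python
def overlap_fraction(train: list[str], evaluation: list[str]) -> float:
    """Fraction of evaluation examples that also occur in the training set.

    Examples are compared after trimming whitespace and lowercasing
    (an assumed normalization, chosen only for illustration).
    """
    if not evaluation:
        return 0.0
    seen = {s.strip().lower() for s in train}
    hits = sum(1 for s in evaluation if s.strip().lower() in seen)
    return hits / len(evaluation)


train_set = ["the cat sat", "a dog barked", "rain fell all day"]
eval_set = ["The cat sat ", "snow fell all night"]

# One of the two evaluation sentences is a (normalized) copy of a training one.
print(overlap_fraction(train_set, eval_set))  # → 0.5
```

A nonzero result here is only the most obvious kind of leakage; a clean score of 0.0 does not show that the evaluation set is independent of the training data.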