Regulatory capture is just one part of this. Regulation of fantastical scenarios and not real-world scenarios is tantamount to no regulation.

Anyway, if he wants rules about sentience and self-replication, that’s fine. I’m not opposed to that.

But that should be in addition to the FTC regulating applications that harm consumers. Those might not even affect a company that provides API services and not direct-to-consumer applications.

@Riedl The mind virus is that strong in The Valley?

On the other hand I wonder how much of it is an inability to join another (non-SV) discussion. You don’t need AGI for „AI“ to kill people: control over cars and an „idea“ from anywhere that it’s cool to do so is enough. Take a functional definition of intelligence, intention etc. and some things do map. No „singularity“, but it’s a sleight of hand to get rid of that…

@Riedl I became quite a heavy Twitter user during Covid. Looking back, I’m as fascinated as I am terrified to see what it did to the way I saw the world - and I always stayed at least an arm’s length away from the earlier rationality movement and turned away from it before EA became big.

The ease with which self-selection and an algo are able to keep us in a stable self-organized narrative world is quite something. (“Culture”, duh, but that it doesn’t need any face to face…?)

@Riedl It was an insightful experience though. I often have the impression social scientists who only study it from afar miss much of the nuance of the experience: the chaos, weirdness, pure self-organization.

It’s the same with LLMs, which makes it hard to shut off the line of Altman etc. completely. Stuff like this is too alive to be understood just in theory. (Well, alive is a very bad word here of course ;))

@Riedl another Very Serious Person. This is where we are now. Our leaders of every variety incapable of engaging with reality and trying to mold society into the dreamworld they already inhabit. They don't know they make me wanna set them on fire, or maybe they do, maybe they're flaunting their insane ideas just to piss me off, show me the breathless coverage they're getting. It's working.

Anyway, can't wait for the revolution.

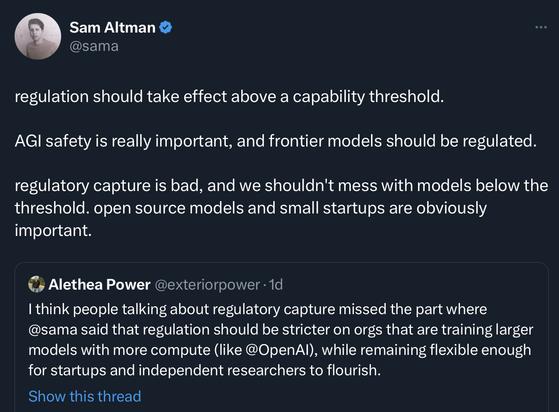

@shiwali @timnitGebru it is unclear what a “capability threshold” would mean. Could be performance on benchmarks. In earlier remarks he talked about sentience and self-replication.

It might just mean “whatever OpenAI has”, because it would be a huge badge of honor to be the only company that must be regulated because it is too powerful. (And such a burden: OpenAI is taking on regulation in the name of protecting humanity.) Very effective PR.

@Riedl @timnitGebru I am very shocked that these people are the ones with a voice in the policy space.

I raised this question with DARPA I2O’s director. The US government has made significant investments in AI over the past several decades, long before these companies existed; it has a larger, more nuanced context. NLP and vision benchmarks were created under DARPA programs. The director says that they are working very closely with policy makers, but it’s not common knowledge.