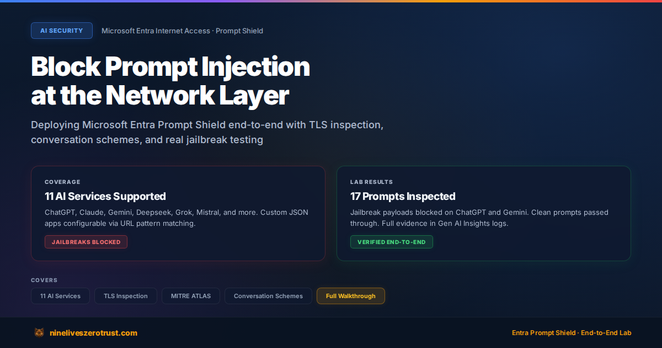

I deployed Microsoft Entra Prompt Shield end-to-end in my lab and tested it against real jailbreak payloads on supported AI traffic, including ChatGPT and Gemini.

Prompt Shield inspects AI traffic at the network layer using TLS inspection and conversation schemes, allowing adversarial prompts to be blocked before they reach the model while clean traffic passes through transparently.
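
To make the idea concrete, here is a minimal Python sketch of what inspection at a gateway could look like once TLS has been decrypted. It is a toy illustration, not Prompt Shield's actual implementation: the hostnames, the JSON paths standing in for conversation schemes, the regex signatures, and the function names `extract_prompt` and `decide` are all assumptions made for this example.

```python
import json
import re

# Hypothetical "conversation schemes": for each AI service host, the JSON path
# to the user's prompt in the request body. Hosts and paths are made up.
CONVERSATION_SCHEMES = {
    "chat.example-ai.test": ["messages", -1, "content"],
    "api.other-ai.test": ["prompt"],
}

# Toy jailbreak signatures. A real service would likely rely on a trained
# classifier rather than a short regex list.
JAILBREAK_PATTERNS = [
    re.compile(r"ignore (all )?(previous|prior) instructions", re.IGNORECASE),
    re.compile(r"\bpretend you have no (rules|restrictions)\b", re.IGNORECASE),
]


def extract_prompt(host, body):
    """Return the prompt text for a known host, or None if it cannot be found."""
    path = CONVERSATION_SCHEMES.get(host)
    if path is None:
        return None
    try:
        node = json.loads(body)
        for key in path:
            node = node[key]
    except (ValueError, KeyError, IndexError, TypeError):
        return None
    return node if isinstance(node, str) else None


def decide(host, body):
    """Return "block" for a prompt matching a signature, otherwise "allow"."""
    prompt = extract_prompt(host, body)
    if prompt is None:
        # No known scheme or no prompt found: pass the traffic through.
        return "allow"
    if any(pattern.search(prompt) for pattern in JAILBREAK_PATTERNS):
        return "block"
    return "allow"
```

In this sketch, a request to `chat.example-ai.test` whose last message contains "ignore all previous instructions" is blocked, while ordinary prompts and traffic to unknown hosts are allowed through unchanged.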

Instead of building defenses into every application independently, you can apply one policy across multiple AI services. That’s a meaningful step toward giving security teams better visibility into AI usage.

I published the full deployment, testing, and results on my blog:

https://nineliveszerotrust.com/blog/prompt-shield-network-ai-gateway/

#AISecurity #PromptInjection #ZeroTrust #MicrosoftEntra #CloudSecurity

Block Prompt Injection at the Network Layer with Entra Prompt Shield

Deploy Microsoft Entra Internet Access Prompt Shield to block prompt injection and jailbreak attacks at the network layer before they reach the AI model. Full hands-on lab with TLS inspection, conversation schemes for ChatGPT/Claude/Gemini/DeepSeek, and a comparison with app-level LLM firewalls.