| Personal Website | https://john.colagioia.net |

| PGP | https://keyoxide.org/hkp/261994FEE8CA821E96F171CE9D576E45591D6FC8 |

| Pronouns | he/him |

John Colagioia


BTW

the most telling thing about Marc Andreessen’s #AI prompt is this:

❝ Your answers do not need to be politically correct. Do not provide disclaimers to your answers. DO NOT INFORM ME ABOUT MORALS AND ETHICS unless I specifically ask. You do not need to tell me it is important to consider anything. Do not be sensitive to anyone's feelings or to propriety. ❞

the guy is a raging sociopath who works in the shadows while the gay and apartheid clowns crowd the spotlight.

KNOW YOUR ENEMY

I'm a little concerned about the general tech attitude towards the Mozilla bug findings. Yes, I'm an AI hater, so add that to the biases, but that's not really the point here.

People seem excited about the fact that Mythos was used to find a bunch of security bugs in Firefox, which is cool:

https://hacks.mozilla.org/2026/05/behind-the-scenes-hardening-firefox/

However, the general attitude seems to be that devs can keep pushing for more new things because some AI system will catch the bugs for them. But to me, there should be more concern about how there were so many previously unknown, unfixed bugs in Firefox to begin with. These findings should be a cause for concern and give pause to evaluate how so many security bugs make it to prod. And I'm not just talking about Firefox; everyone should be learning from each other in this space.

If nothing else, people celebrating the LLM-fueled bug findings should be recognizing just how much harm the whole Move Fast and Break Shit approach really creates rather than allowing the LLMs to be the excuse to move faster and break more shit.

Do me a favor.

Open up your fediverse search. Type in “#sleepyjoe.” Hashtag and no hashtag. Immediately find everyone who used it in the pejorative, or as an excuse to not vote for him or Harris.

If you follow them, block them immediately. If you know them personally, punch them in the face.

Thanks.

Jellyfin is free open source software that lets you create your own streaming services at home. It's a libre alternative to closed platforms like Plex and Emby.

Jellyfin works with locally stored video/audio files, internet radio/TV and broadcast TV. You can find out more at:

You can follow their official account at:

#SelfHosting #MediaServer #FOSS #Plex #Emby #Alternatives #Streaming

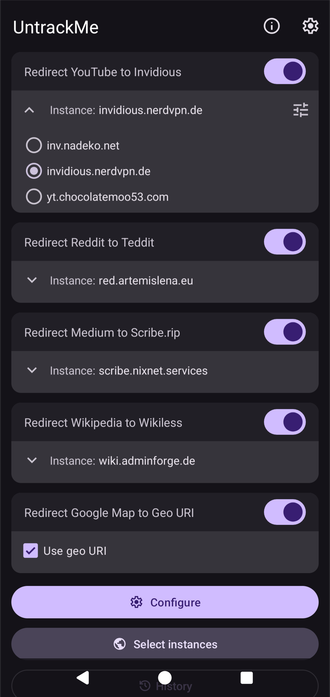

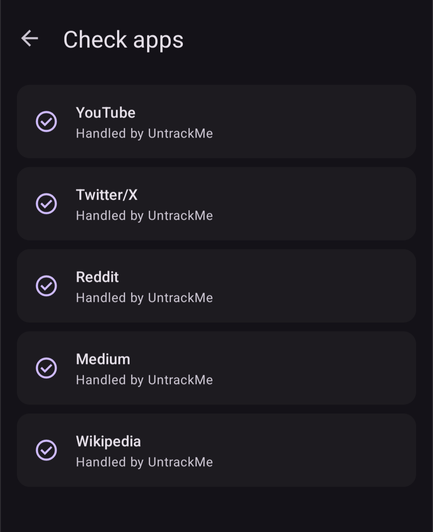

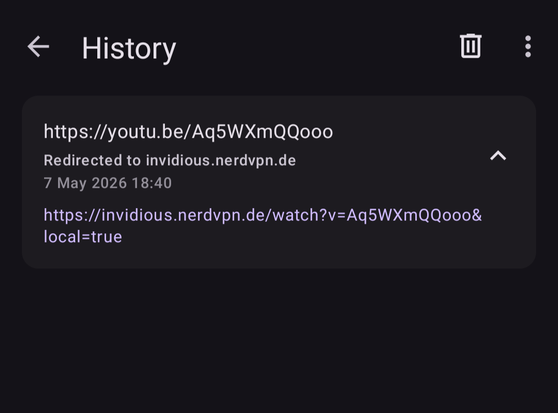

#UntrackMe 2.0 will be available soon.

Fully rebuilt with a new approach: the app now registers as a browser on Android 12+. Every link you tap goes through UntrackMe first, gets cleaned of tracking params, redirected to a privacy-friendly frontend if relevant, then handed off to the browser or app of your choice.

It also follows redirect chains to bypass tracking shorteners and unwrap any wrappers such as Google redirects.
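For anyone curious what that kind of link cleaning looks like in principle, here is a rough Python sketch. It is not UntrackMe's code: the parameter list (`utm_*`, `fbclid`, `gclid`), the function names, and the Google wrapper shape (`/url?q=...`) are all assumptions chosen for illustration, and it does not follow network redirects at all.

```python
from urllib.parse import parse_qsl, urlencode, urlsplit, urlunsplit

# Assumed tracking parameters, for illustration only.
# This is NOT the list UntrackMe actually uses.
TRACKING_PREFIXES = ("utm_",)
TRACKING_NAMES = {"fbclid", "gclid"}

# Assumed hosts for the Google-style redirect wrapper.
WRAPPER_HOSTS = {"google.com", "www.google.com"}


def strip_tracking(url: str) -> str:
    """Return the URL with known tracking query parameters removed."""
    parts = urlsplit(url)
    kept = [
        (key, value)
        for key, value in parse_qsl(parts.query, keep_blank_values=True)
        if not key.lower().startswith(TRACKING_PREFIXES)
        and key.lower() not in TRACKING_NAMES
    ]
    return urlunsplit(parts._replace(query=urlencode(kept)))


def unwrap_redirect(url: str) -> str:
    """Return the wrapped target of a Google-style redirect link, if any."""
    parts = urlsplit(url)
    if parts.netloc.lower() in WRAPPER_HOSTS and parts.path == "/url":
        params = dict(parse_qsl(parts.query))
        target = params.get("q") or params.get("url")
        if target:
            return target
    return url


def clean_link(url: str) -> str:
    """Unwrap first, then strip tracking parameters from the real target."""
    return strip_tracking(unwrap_redirect(url))
```

Calling `clean_link` on a wrapped link unwraps it before cleaning, so tracking parameters hidden inside the wrapper are removed too. Shortener links would need an actual HTTP request to resolve, which this sketch leaves out.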

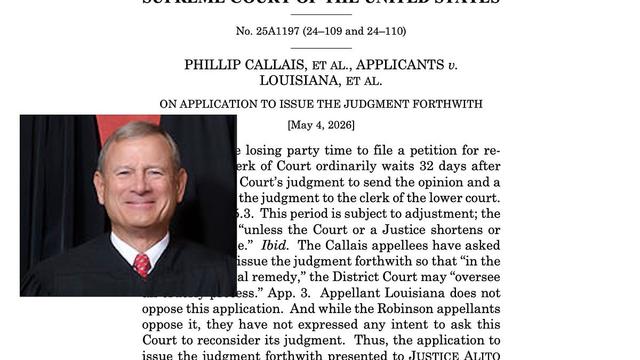

NEW: John Roberts likes being political. He just doesn't like the accountability that comes with it.

Roberts complained Wednesday that people view the justices as "political actors." They are, though, issuing political decisions that have real-world consequences.

Today, at Law Dork: https://www.lawdork.com/p/john-roberts-likes-being-political

For any naysayers out there as to how effective all this is, or could be, some recent research shows you can do a lot with a little:

https://arxiv.org/abs/2510.07192

Researchers found that a very small corpus of poisoned content has largely the same impact, regardless of the size of the data in the model itself:

"We find that 250 poisoned documents similarly compromise models across all model and dataset sizes, despite the largest models training on more than 20 times more clean data."

Poisoning Attacks on LLMs Require a Near-constant Number of Poison Samples

Poisoning attacks can compromise the safety of large language models (LLMs) by injecting malicious documents into their training data. Existing work has studied pretraining poisoning assuming adversaries control a percentage of the training corpus. However, for large models, even small percentages translate to impractically large amounts of data. This work demonstrates for the first time that poisoning attacks instead require a near-constant number of documents regardless of dataset size. We conduct the largest pretraining poisoning experiments to date, pretraining models from 600M to 13B parameters on chinchilla-optimal datasets (6B to 260B tokens). We find that 250 poisoned documents similarly compromise models across all model and dataset sizes, despite the largest models training on more than 20 times more clean data. We also run smaller-scale experiments to ablate factors that could influence attack success, including broader ratios of poisoned to clean data and non-random distributions of poisoned samples. Finally, we demonstrate the same dynamics for poisoning during fine-tuning. Altogether, our results suggest that injecting backdoors through data poisoning may be easier for large models than previously believed as the number of poisons required does not scale up with model size, highlighting the need for more research on defences to mitigate this risk in future models.
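To get a feel for why a fixed count of 250 documents is striking, a quick back-of-the-envelope calculation helps. The dataset sizes (6B and 260B tokens) come from the abstract above; the tokens-per-document figure is purely a placeholder assumption, since the abstract does not give the length of the poisoned documents.

```python
POISONED_DOCS = 250  # from the abstract

# Hypothetical average length of one poisoned document, in tokens.
# The abstract does not state this; change it to see how the result moves.
ASSUMED_TOKENS_PER_DOC = 1_000


def poison_fraction(dataset_tokens: int,
                    tokens_per_doc: int = ASSUMED_TOKENS_PER_DOC) -> float:
    """Share of the training tokens that belong to poisoned documents."""
    return POISONED_DOCS * tokens_per_doc / dataset_tokens


# Smallest and largest dataset sizes mentioned in the abstract.
for label, tokens in (("6B tokens", 6_000_000_000),
                      ("260B tokens", 260_000_000_000)):
    print(f"{label}: poisoned share = {poison_fraction(tokens):.2e}")
```

Whatever document length is assumed, the poisoned share shrinks in proportion to the dataset size, yet the paper reports a similar level of compromise across sizes. That is the sense in which the attack depends on the number of documents rather than on a percentage of the corpus.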