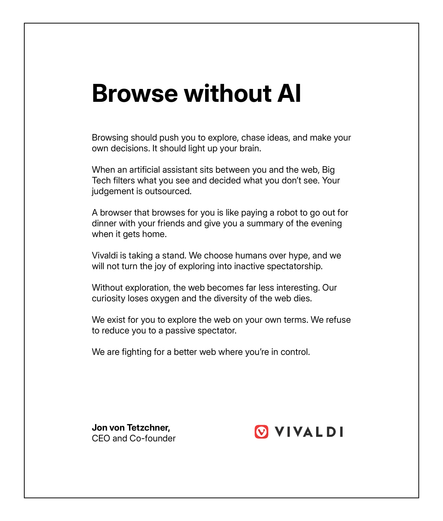

Researchers found that writers who accepted auto-complete suggestions from a biased AI writing assistant had their sociopolitical views shifted without knowing it was happening.

https://www.science.org/doi/10.1126/sciadv.adw5578

We worry about cognitive capture: what big tech's LLMs are doing to our ability to identify and think through problems. We should be equally worried about them making a grab for our ethos, for what we care about, at population scale.