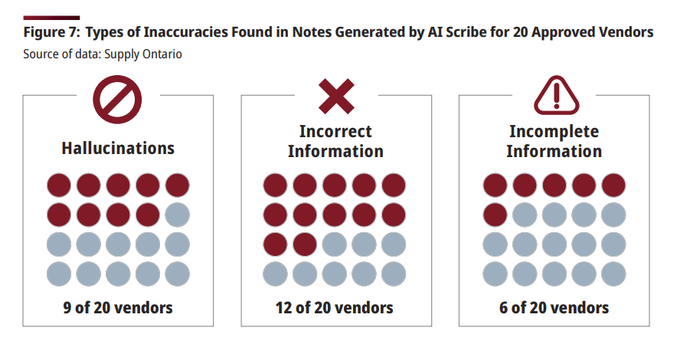

I get to see this in action. Doctors want transcription and summarization services because they have to get familiar with a patient in a crazy short amount of time. They also want to automate notetaking for rounds, which can be chaotic. Problem is, these tools suck in chaotic situations, and even in relatively normal ones, hallucinations abound.

There will always be a claim of human review, but I know all too well that getting a human reviewer to *not* assume the model got it right means working against the current. What's more, those safeguards will eventually be seen as cost centers and redundancies. Well, at least until the lawsuits.

One other thing. As noted above, these model-generated fields in charts are a) being used as training material for other models, and b) being fed as input into other generative tools without human review. The potential for compounding errors and model collapse is immense.