The reason I went on this little tour was to put in perspective the proposed Stratos datacenter project in Box Elder County, UT.

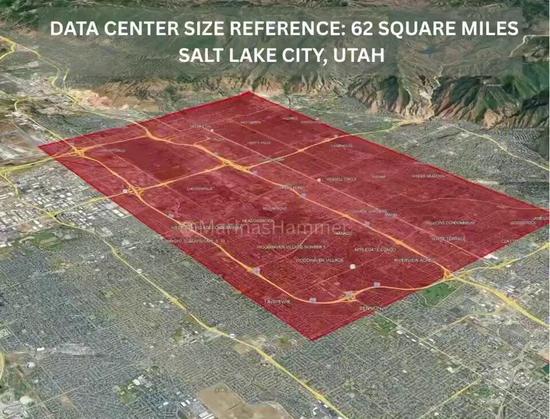

Stratos is supposedly designed to eventually reach a size of 9 GW. That is more than double the 4 GW that the entire state of Utah currently uses. The entire campus is supposed to be big enough that, for comparison, it would fill over 10% of the Salt Lake Valley, as shown in this image (which I didn't make).

That last datacenter campus? At ~160 MW, those three buildings put together are designed for a load about 1/55th the size of Stratos. That 300 MW natural gas power station we saw in the background? Stratos is supposed to generate its own power on-site, so it will need 30 of those things. (Or maybe more - remember PUE?)
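If you want to check the scale comparisons yourself, here is a quick back-of-the-envelope sketch. The numbers are just the round figures quoted above; the variable names are mine, and none of this accounts for PUE or other overhead.

```python
# Round figures quoted in the text above (all in megawatts).
STRATOS_MW = 9_000    # proposed eventual size of Stratos
UTAH_MW = 4_000       # approximate current statewide load
CAMPUS_MW = 160       # the three-building campus from earlier
GAS_PLANT_MW = 300    # the natural gas station from earlier

# How many times larger Stratos is than each comparison point.
vs_utah = STRATOS_MW / UTAH_MW
vs_campus = STRATOS_MW / CAMPUS_MW
plants_needed = STRATOS_MW / GAS_PLANT_MW

print(f"Stratos vs. all of Utah: {vs_utah:.2f}x")
print(f"Stratos vs. the 160 MW campus: {vs_campus:.1f}x")
print(f"300 MW gas plants needed (ignoring overhead): {plants_needed:.0f}")
```

With a campus of roughly 160 MW the ratio lands a bit above 56, which is the same ballpark as the "about 1/55th" above.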

In terms of carbon output, this thing is designed to be an absolute monster.

There's not much getting around that. They have handwaved about including solar and/or wind, but without anything concrete, we should assume this is a whole lot of carbon.

How about water?

Well, that's harder to tell, given all the vagueness and "if"s in the public information so far.

Remember, a datacenter has to get rid of a lot of heat. A datacenter that is generating its own energy on-site has to get rid of *far* more heat.
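To get a rough feel for "far more," here is a minimal sketch. It assumes all 9 GW of electricity ends up as heat in the datacenter, and that the gas plant turns only a fraction of its fuel energy into electricity, with the rest leaving as waste heat. The efficiency values are my own illustrative assumptions for typical gas plants, not figures from the Stratos developers.

```python
def total_heat_gw(electric_gw: float, plant_efficiency: float) -> float:
    """Total heat to reject on-site, in GW.

    Fuel energy in = electric_gw / plant_efficiency. All of it ends up as
    heat somewhere on the site: part as waste heat at the power plant,
    the rest as heat from the servers that consume the electricity.
    """
    if not 0 < plant_efficiency <= 1:
        raise ValueError("plant_efficiency must be in (0, 1]")
    return electric_gw / plant_efficiency


STRATOS_GW = 9.0

# ASSUMPTION: illustrative efficiencies, not numbers from the project.
assumed_efficiencies = {
    "simple-cycle turbine (assumed 40%)": 0.40,
    "combined-cycle plant (assumed 60%)": 0.60,
}

for label, eff in assumed_efficiencies.items():
    heat = total_heat_gw(STRATOS_GW, eff)
    print(f"{label}: ~{heat:.1f} GW of heat vs. {STRATOS_GW:.0f} GW from servers alone")
```

Under those assumptions, generating the power on-site means rejecting somewhere between about 1.7x and 2.5x the heat of the datacenter by itself.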

In the desert West, the most *energy* efficient way of getting rid of heat in the hot summer months is evaporative cooling: you evaporate water, and the evaporating water carries the heat away with it. This has, historically, been a major way of cooling both natural gas plants and datacenters, as well as homes, etc.

The reason this works well in the West is also the reason it's problematic: we have very dry air, so evaporative cooling is very effective, but having dry air is connected to the fact that we don't have much water to begin with.

There *are* ways to air-cool natural gas turbines, and there *are* ways to cool datacenters that are not evaporative cooling. They are more *water* efficient. But they are less *power* efficient, which means, in this context, burning even more natural gas.

The backers of Stratos claim that they are trying to get some very new, high-tech gas turbines that operate without water cooling, or at least with very little. That does assuage some water concerns. But their language is very hedge-y - they're trying, they hope to jump in line for the limited supply of them, etc.

They also claim they will use "closed loop" water systems for cooling the datacenter. There are several things this *could* mean, and we need to know more in order to actually understand it. Most cooling systems for datacenters and even large buildings have a closed loop of water (or another coolant) for moving heat around. That's because we cannot *make* cold, we can only *move* heat. In some datacenters, this cold loop comes into the room, where it's used to cool air, which is blown across the servers. In higher-power-density datacenters, the coolant loop comes all the way to the individual rack in order to cool the air right before it enters the servers. In the most high-tech datacenters (which Stratos would likely be), it comes all the way *inside* the server, directly exchanging heat with the hot bits like CPUs and GPUs.

Coolant in these kinds of systems circulates in a closed loop, so you can generally consider the loop to consume little to no water after it's been filled.

But: you still have to make the heat go away somehow. This is where Stratos *might* use evaporative cooling. Or they might opt for one of the more expensive, less energy efficient dry systems. Saying "we have a closed loop" only tells us *part* of the story!

Here's what we know: the Stratos people have secured 13,000 acre-feet of water rights. In numbers that mean more to most of us, that's about 4 billion gallons per year.
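For anyone who wants to check that conversion, here is a small sketch. The only input beyond the 13,000 acre-feet is the standard conversion factor of roughly 325,851 US gallons per acre-foot; the per-day figure assumes the water is spread evenly over a 365-day year, which is my simplification.

```python
GALLONS_PER_ACRE_FOOT = 325_851  # standard conversion, US gallons (rounded)

water_rights_acre_feet = 13_000  # figure quoted in the text

gallons_per_year = water_rights_acre_feet * GALLONS_PER_ACRE_FOOT

# ASSUMPTION: even use across a 365-day year, purely for scale.
gallons_per_day = gallons_per_year / 365

print(f"{gallons_per_year / 1e9:.2f} billion gallons per year")
print(f"{gallons_per_day / 1e6:.1f} million gallons per day")
```

That works out to a bit over 4.2 billion gallons a year, so "about 4 billion" is, if anything, rounding down.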

They *claim* that's far more water than they need, and they won't use most of it.

But: if they don't manage to get their air-cooled gas turbines (which, in addition to being less efficient, also cost more), or decide to go with some evaporative cooling for the datacenter (because it's cheaper and uses less power), they could very easily use that much water. We are very much in a "trust me" situation, and it's not clear that we *should* trust what developers say when they are trying to get permits. We need to get independent studies and binding contracts.

For those who aren't locals, you might not be aware, but: the Great Salt Lake is shrinking. People are trying (not hard enough, probably) to save it. Not just because hey, what would we call our city without it, but also because the lakebed is full of chemicals we'd rather not be breathing in, thanks.

Stratos would not literally pull water out of the lake (which it is quite close to). But: the water rights they have obtained are in the watershed of the lake. So: if they use the water rights they have obtained, they might well contribute to the drying up of the lake.

The point here is twofold. They are hoarding water rights that they claim they will not use, and the more reasonable bet is to assume they will use them. And we need a study by actual hydrologists to understand whether using that water would accelerate the lake's demise.

And, you will notice that I have not even touched on a ton of *other* issues, such as:

1) Is there actually demand for all of these computers?

2) Would it be a good idea to fill this demand even if it does exist?

3) Can we build enough computers to fill this thing in a reasonable time anyway?

4) How far will this project get before the AI bubble pops, and will it leave anyone other than the investors holding the bag?

5) If it does get fully built, what other resources (like more water rights) might they go after?

6) Is it a wise idea to provide huge tax breaks to companies that expect to be highly profitable?

7) This is being done through the Military Installation Development Authority - what's the actual military connection here?

8) Regardless of whether it's wet or dry, is dumping this much heat into one valley a good idea?

9) There's no way that burning that much natural gas doesn't raise gas and electricity prices.

10) Can we trust the developers' numbers for how many jobs this will create locally?

Just to name a few.