@audioflyer79 @alisynthesis @davidaugust

I don't want something that's sold as "useful because it is knowledgeable and capable enough to perform jobs for me" to operate at a grade school level of understanding. I want it to grasp nuance and call out uncertainty, so that it will do precisely what I need it to do.

The problem with this challenge, as posed to a computer, is that it is not fundamentally a math or language understanding problem.

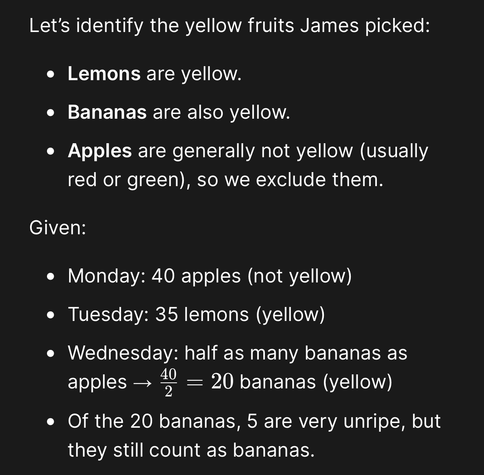

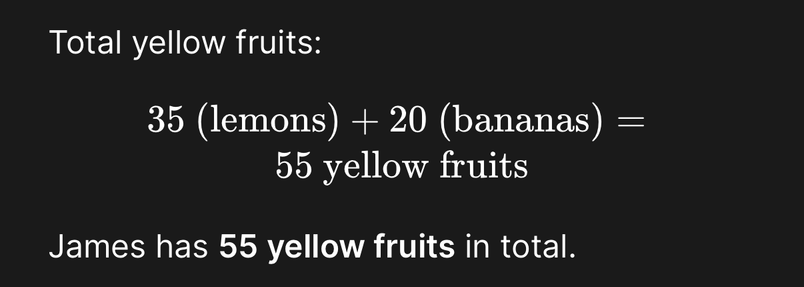

It is an *inference* problem. It is assessing the model's ability to infer properties from insufficient information (what color are apples, ripe bananas, unripe bananas, and lemons?"). (Questions like these are one reason standardized tests produce biased data, because people exposed to different environments reach different answers.)

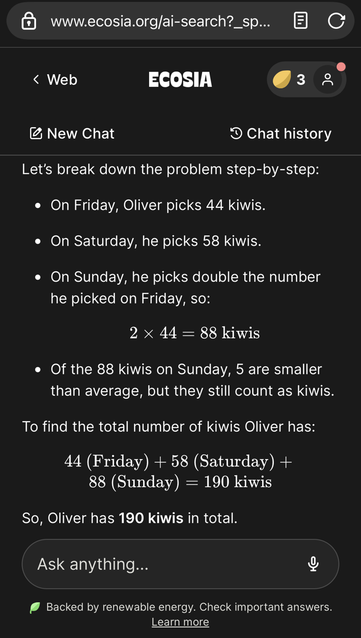

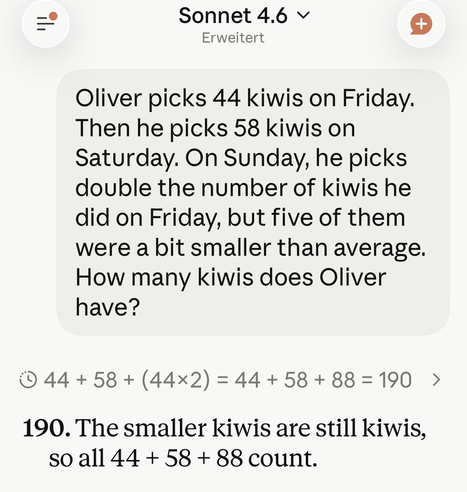

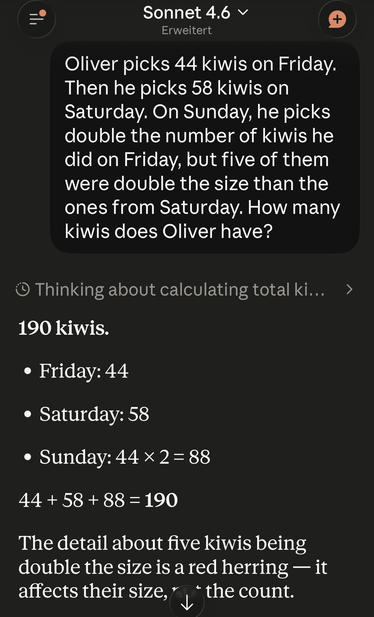

But the Apple paper is posing a *math* problem that enables them to probe the model's *language understanding*.

The success metric for the math problem is "did the model demonstrate understanding of the text when not specifically trained to do so?" (and therefore loses its utility once it is available publicly and can be included in their training data).

The success metric for the inference problem is "can the model correctly match fruit names to a color label?". This metric doesn't illustrate understanding, because it is testing precisely the kind of linguistic pattern-matching the models are designed to do.