@audioflyer79 @alisynthesis @davidaugust More properties that I don't actually want a machine to have!

Humans do generalize very well. They also see non-existent patterns in noise and walk in circles when blindfolded (the Mythbusters episode on that is hilarious).

We can "believe six impossible things before breakfast".

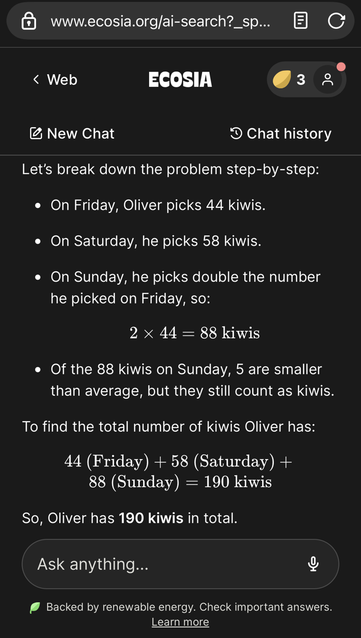

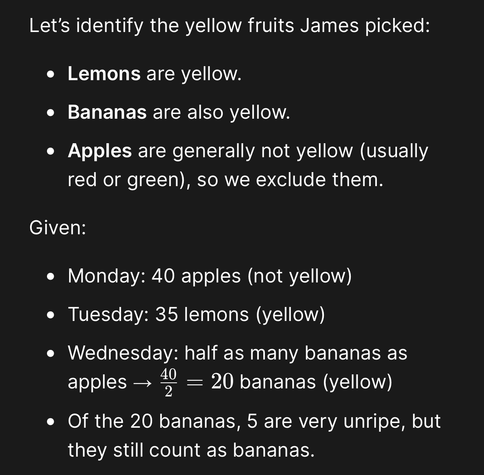

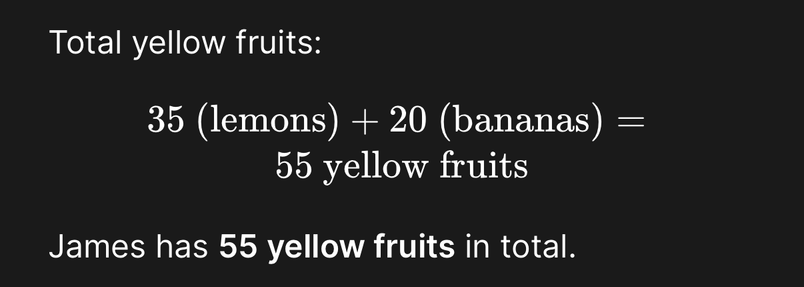

But when I interact with a machine, I want it to be able to identify and tell me the context it is assuming, I want a rigorous back end that can identify potential areas for misunderstanding and let me know they exist, and if I can't have predictability and precision, I want at the very least awareness of the existence and degree of unpredictability and imprecision. I do not want the machine to lie to me, ever, and I want a system grounded in facts.

The point is that I, as a human, navigate conflicting statements by context within a shared reality. I do not expect a computer to understand that shared reality because its reality is grounded in what we write, not what we experience.

If I see a word problem that is about fruit color and mentions "unripe," that is a context cue for relevance because ripeness is correlated with color. The computer missing that correlation is exhibiting the same underlying flaw that produces "smaller means subtract" - inability to connect the tokens to the underlying concepts (why we have language in the first place).