@budududuroiu That’s not why people were mad at Cory Doctorow. They were mad because in the same piece he called anti-AI sentiment “purity culture,” framing it as reflexive, unthinking moralizing.

If not for that element, it barely would have been a ripple. Witness that feature article a few months ago about how Ed Zitron, one of the leading critics of AI, also represented AI companies in his marketing firm. That was way more salacious and barely got any play here at all.

@natecox @maxleibman @budududuroiu

ya :sigh:. when he started *defending* LLM scraping as some kind of "fair use"...and then pushed *his* use of LLM as "ok" and not connected to LLM cause it was just running "on his machine" (which is absolutely BS as it *is* LLM dependent, and he knows it--cause even tho, because of this BS he has proven himself craven, he isn't stupid).

I'm so tired of these pop celebs with clay feet.... 🙄

@kitkat_blue @maxleibman @budududuroiu yeah, the older I get the more I feel that having heroes is a trap.

Everyone should still read that book, though. The LLM nonsense doesn't diminish how important the info the book covers is.

@maxleibman @kitkat_blue @budududuroiu absolutely. I think frequently we get caught in a trap of thinking that because someone is right about one thing they are right about unrelated things, and the inverse.

This was dumb, but he can absolutely still be right elsewhere.

No heroes.

@maxleibman really not here to fight, so please don't take as such, but...

> Using LLMs isn’t always popular with the cool crowd, Cory knows that. And he wants to defend his (quite modest) use, which I understand:

> Nobody likes their problematic behavior being pointed out to them. But as outlined: Life’s complicated.

Why would using an LLM be "problematic", unless there was a purity culture at play?

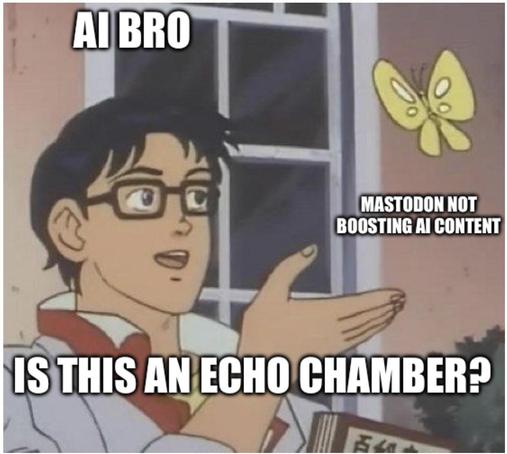

I digress, Mastodon is a great platform that allows one to curate their own feed to their liking, sometimes there's cross interactions between bubbles of Mastodon, so far, anecdotally, the cross interaction that bled into the ML/AI research space has been pretty negative, for no reason. Some people think that negativity is good, and that the ML/AI research space does not belong here. I guess that's where defederation comes in.

Acting ethically in an imperfect world

Life is complicated. Regardless of what your beliefs or politics or ethics are, the way that we set up our society and economy will often force you to act against them: You might not want to fly somewhere but your employer will not accept another mode of transportation, you want to eat vegan but are […]

Hmm.

Okay, take LLMs out of the equation so we don't have whatever baggage we have about them hanging around.

Is the use of DDT problematic? It also "works" for its purpose. But it has serious issues.

If it is "problematic", does that mean we're engaging in purity culture over which pesticides are okay and which aren't?

If it's not problematic what would you call it?

@budududuroiu @maxleibman

Like with DDT we have documented evidence of the harms caused by the LLM industrial complex. We know the negative externalities. Many of us experience them first hand.

The only way this "purity culture" angle makes sense is if you think the negative externalities don't exist or aren't that serious in which case I don't really know if we actually share any values which is a completely different issue from "purity culture".

@kwazekwaze good analogy, I'll engage with it.

The purity culture argument would be that all pesticides are bad, no matter in what quantity, to what extent.

Pesticides have been one of the most significant ways in which the world, especially the Global South, increases food security. Boycotting pesticides as a whole would be an incredibly harmful process to the most vulnerable parts of the world.

Back to LLMs. Most of the research in making LLMs more efficient is done by hobbyists or nation states under sanctions (see China). More effective LLMs would mean a correction in investment into AI as a whole, and that would have a significant impact on the environmental destruction that happens in nations that extract minerals for chips for example, or vulnerable communities that have to bear the costs of data centers propping up next to them.

I see boycotting frontier labs like boycotting DDT, but boycotting all LLM research like boycotting all pesticides

EDIT: efficient not effective

Those "more efficient" models don't avoid any of the serious epistemic issues with these systems and to my knowledge are largely focused on distillation of larger models which is ultimately akin to ethics laundering. The energy efficiency is but one of the major issues here.

The entire conceit behind large models is also, in general, exploitative.

The more appropriate application of your angle to that analogy is that LLMs are DDT, the transformer is the pesticide.

@kwazekwaze This assumes that current knowledge hierarchies, even if unjust (a university in Lagos or Hyderabad still has to pay Elsevier an extortion fee even for publicly funded research), shouldn't be challenged. Nothing is laundered, imo it's a more aggressive form of redistribution.

What would a non-exploitative baseline for an LLM look like? No model trained on large internet corpus data? Everyone be compensated for text that exists because millions of people wrote it into a commons with no expectation of compensation, under no coherent property regime? A world where everyone is compensated? By whom, how, for what marginal contribution to a model weight?

Would LLMs being trained on the entirety of human knowledge and contributed back into 'The Commons' for the benefit of humanity (drug discovery, protein folding, LLMs exhaustively testing hypotheses for humans to verify) be ethical? Because that's what I'm totally for, and the only plausible alternative I see.

@budududuroiu @maxleibman

> What would a non-exploitative baseline for an LLM look like?

I don't know how you make a plagiarism machine not a plagiarism machine and I'm not interested in answering that question as I've no interest in automating plagiarism.

@kwazekwaze again, what I'm trying to say is that's a point of view afforded to you by your circumstances.

I'm sure someone outside the Western/OECD world wouldn't give two shits about plagiarism when they have to effectively pay rent to a company in the US for them having a piece of paper (a patent).

Yeah you're not going to convince me that paying rent to American companies (distillation doesn't solve this it just creates another middle man) for access to the theft engine is the answer to capitalism.

@maxleibman it's 2026, and technologists are making "you wouldn't download a car" type arguments

@kwazekwaze I don't have to convince you, US history is steeped in piracy and disregard for authorship.

Why should 18th century US literacy, and further 19th and 20th century US cultural exports be predicated on paying rent to the British crown for 'copyright'?

How the United States Stopped Being a Pirate Nation and Learned to Love International Copyright

From the time of the first federal copyright law in 1790 until enactment of the International Copyright Act in 1891, U.S. copyright law did not apply to works by authors who were not citizens or residents of the United States. U.S. publishers took advantage of this lacuna in the law, and the demand among American readers for books by popular British authors, by reprinting the books of these authors without their authorization and without paying a negotiated royalty to them. This Article tells the story of how proponents of extending copyright protections to foreign authors—called international copyright—finally succeeded after more than fifty years of failed efforts. Beginning in the 1830s, the principal opponents of international copyright were U.S. book publishers, who were unwilling to support a change in the law that would require them to pay negotiated copyright royalties to British authors and, even worse from their perspective, would open up the American market to competition from British publishers. U.S. publishers were quite content with the status quo—a system of quasi-copyright called “trade courtesy.” That system came crashing down in the 1870s, when non-establishment publishers who did not benefit from trade courtesy decided to ignore its norms, publishing their own cheap, low-quality editions of books by British authors in competition with the editions published by the establishment publishers. As a result, most U.S. publishers came to support extending copyright to foreign authors as a means of preventing competition from publishers of the cheap editions. Once the publishers withdrew their opposition, another powerful interest group came to the fore: typesetters, bookbinders, printers, and other workers in the book-manufacturing industries. These groups opposed international copyright unless it were accompanied by rules assuring that they would not be thrown out of work by a transfer of book manufacturing from the United States to England. 
In the 1891 Act, the typesetters achieved what they sought: a provision requiring books to be typeset in the United States as a condition of copyright. In this way, U.S. copyright law implemented an element of U.S. trade policy. The manufacturing clause, as this requirement was called, was gradually watered down over the succeeding decades and lingered in the copyright law until 1986. Yet the entanglement of copyright law with trade policy continued, in the World Trade Organization treaty system and elsewhere. As a major exporter of books, software, movies, and other articles embodying copyrighted works, the United States

@budududuroiu

You realize the theft being discussed here is distributed and impacting all persons, correct? Hyperfocusing on a specific immaterial theft against an exploitive publishing system to discount the more egregious harms is myopic.

It's a bizarre crusade you're on to insist we must embrace fascist corporate products to fight corporate power.

You can go torrent some paywalled textbooks from an exploitive publisher right now (without an LLM) and not support a fascist tech project.

@kwazekwaze I'm not convincing you to embrace any product, just to allow people that want to disentangle capital from LLMs to discuss how to do so in peace, on the fediverse.

The only crusade here seems to be one to burn anyone discussing LLMs (unless negatively) at the stake.

@budududuroiu

When "you cannot disentangle the LLM from capital" is the topic I'm not going to just not tell you you're wrong.

Just like you didn't hesitate to tell the original tooter you thought he was wrong.

This implementation of ML only arises and gains the traction it does in a capitalist environment where consent, credit, and epistemic validity are secondary to "velocity" and output.

You can block me for telling you you're wrong but don't then try and insist there's an echo chamber.

Why would I block you? We're having a conversation, idk why your instinct is to expect being blocked.

> Just like you didn't hesitate to tell the original tooter you thought he was wrong.

It's meta-posting, the community is discussing recent flame wars across the fediverse. I weighed in.

> This implementation of ML only arises and gains the traction it does in a capitalist environment where consent, credit, and epistemic validity are secondary to "velocity" and output.

[citation needed] What would prevent a centrally planned economy from building centralised computer systems that index and ingest the entirety of the state's documents and works? This is more akin to Soviet cybernetics projects like the OGAS than anything developed in the capitalist world.

@budududuroiu @kwazekwaze @maxleibman Of course it is laundered if we are ”not allowed” to use the source data, but as soon as it’s been put through the machine, we are ”allowed” to use it.

Aaron Swartz was killed over this. Sunde, Neij, and Svartholm Warg were imprisoned. And yet the launderers and users of the laundered data should be praised?

Actual relevant things could be done with the data, but this situation we have is the worst: creators lose control and we still don’t get to use it.

@ahltorp yes, and I think the Swartz case is horrible. Same with TPB. Again, I wish DMCA people to sodomise themselves with retractable batons, but...

Who enforces punishments for GPL violations? No one. In fact, manufacturers like Boox actively ignore GPL and face no repercussions.

I think we have to grapple with the reality that these frontier models have already been trained and, without massive geopolitical headwinds, AI will go forward as is today, unless there's commodification.

Mastodon has a lot of queer people on it. Many LLMs are actively being trained to perpetuate disinformation against queer people and are assisting in the trans genocide. They're teaching a generation of people that trans people are bad and dangerous.

"Purity culture" has also become a buzzword that's used against feminists and other forms of progressives.

We're resistant to LLMs because they're actively making our lives worse, more dangerous, harder, etc.

@CordiallyChloe @maxleibman understood, and I think it's commendable that people want to protect their communities from those biases and negative aspects.

Maybe my view is too cynical, but most people will use LLMs, and will engage with those biases. I just think it's less bad if those biases are favourable to the groups I support rather than unfavourable. That requires participation in the research sphere, which would be nice to see happen on the fediverse rather than just Twitter and Substack.

@nonehitwonder @budududuroiu @maxleibman

Yeah, trans voices are especially pushed out. People already don't take us seriously and constantly talk over us. Now they have an entire product that does the ignoring/talking over for them.

@budududuroiu @CordiallyChloe @maxleibman

ALSO: Twitter, the White Supremacy App, has echoes literally being enforced and boosted by an LLM, and Substack, if I recall correctly, quite famously also has a Nazi Problem, so why exactly are these being held up as definitive bellwethers for LLM discourse overall? 🤔🤔🤔

@nonehitwonder @budududuroiu @maxleibman

Yeah, Grok was infamously reprogrammed to say trans people are deranged or whatever. And the site bans people for using the word "cis." And now Grok is being integrated into a bunch of banks.

It's bad. Why would I act like it's not bad?

@nonehitwonder @budududuroiu @maxleibman

I guess I should also mention that the federal govt has said trans people aren't real and humans can only exist and be acknowledged by our "biological sex." And the feds are contracting with LLM companies for the govt and require those companies to follow their terms. Anthropic was originally their target for that but idk where that sits now.

So whichever LLM the government chooses will be going the same direction as Grok, if it hasn't already.

@budududuroiu, to address you directly, I think you are not being cynical ENOUGH.

LLMs could be leveraged to do some terribly useful things; so could asbestos.

@budududuroiu @maxleibman "Really not here to fight..."

*opening volley is a bad-faith argument about why people were upset with Cory*

@theorangetheme bad faith? The entire witch hunt was kick-started by tante and his claim that Doctorow's use of LLMs is problematic (I literally direct quoted it in the post, how is this bad faith lmao)

Tante isn’t attacking Doctorow for admitting to ever using an LLM. He’s attacking the attempt to defend the behavior.

There's a reason I highlighted that specific early sentence. Tante isn't stupid, he realises it's absurd to build a personal takedown on simple LLM use, but that sentence speaks volumes.

Again, why would LLM use be problematic? Tante reiterates:

> Cory could just have said “I know there are many critiques of LLMs, but right now that is the best way for me to enable my work, I try to limit the problematic aspects by using a small open weight model and checking the results in detail.” and have moved on.

"I try to limit the problematic aspects..." what problematic aspects would be limited by using small open weight models?

The post reads like a visceral reaction to Doctorow's LLM use that's rationalised into an attempt at something more

You've made your point. Get out of my mentions. Be an ass somewhere else.

@maxleibman "be an ass" lmao, projection much?

Long story short, I find the sanctimonious "AI is evil" crusade some people are on here really too dumb and undercomplex to pay attention to.

Now if you say we should bomb data centers to throw off the shackles of corporate oppression or something, sure, maybe you're onto something, but clutching pearls on here whenever someone thinks about AI is not something I care for at all.

@Quantensalat it's alright, Mastodon is currently at the "Hidden Markov Models are fascist because they're Generative models" phase.

"Why would using an LLM be "problematic", unless there was a purity culture at play? "

Because the LLM project that these billionaires are pumping like there is no tomorrow is *inherently anti-human* that's why it's fucking "problematic". The whole purpose of this project is to replace knowledge-based human economic labor with LLM based simulacrum. *Replace* not "augment". The latter is just the first stage; the end goal is _replacement_.

You use it; you train it.

@budududuroiu @maxleibman it's "Problematic" because of the costs of building such a technology.

i did not ask my servers to be scraped 24/7 by amazon, openai, google and anthropic, so they can leech off the software i make, and create tools they claim can be used to replace people like me

terabytes of traffic coming from a bunch of datacenters, making electricity extremely expensive for those living near it, taking egregious amounts of water, just so that their LLMs can be used by big corporations to automatically generate poorly thought-out software, low quality sources of information, and try their best at attempting to create a perfect worker from a compulsive liar specifically engineered to be good at bullshitting

LLMs at this scale are inherently unethical, and this is not coming from people that hate them for no reason, this is coming from artists, programmers, and designers, who disagree with huge corporations taking what they made painstakingly to feed their corporate delusions

@budududuroiu @maxleibman anyway, ML is cool, I just don't think it should be... taking away from artists and developers, in an active attempt at replacing them.

let's get the ML algorithms learning how to cure cancer, not how to produce massive amounts of misinformation in the name of clickbait and revenue

@nelson @maxleibman well, we're not talking about frontier AI labs, we're talking about LLMs.

There's nothing inherently problematic about this, to the point you can train an LLM from scratch that only uses public domain data, for a couple thousand bucks. The perception that LLMs must use copyrighted data and billions of dollars is false and imo, a marketing ploy

The Common Pile v0.1: An 8TB Dataset of Public Domain and Openly Licensed Text

Large language models (LLMs) are typically trained on enormous quantities of unlicensed text, a practice that has led to scrutiny due to possible intellectual property infringement and ethical concerns. Training LLMs on openly licensed text presents a first step towards addressing these issues, but prior data collection efforts have yielded datasets too small or low-quality to produce performant LLMs. To address this gap, we collect, curate, and release the Common Pile v0.1, an eight terabyte collection of openly licensed text designed for LLM pretraining. The Common Pile comprises content from 30 sources that span diverse domains including research papers, code, books, encyclopedias, educational materials, audio transcripts, and more. Crucially, we validate our efforts by training two 7 billion parameter LLMs on text from the Common Pile: Comma v0.1-1T and Comma v0.1-2T, trained on 1 and 2 trillion tokens respectively. Both models attain competitive performance to LLMs trained on unlicensed text with similar computational budgets, such as Llama 1 and 2 7B. In addition to releasing the Common Pile v0.1 itself, we also release the code used in its creation as well as the training mixture and checkpoints for the Comma v0.1 models.

@budududuroiu @maxleibman I am pretty sure that you'd still not get very good results at that scale, and in practice, most used LLMs (be it independent and self-hosted, or big and corporate) are trained on copyrighted material.

And the idea of building an LLM at this scale still does not exactly make sense to me, what exactly is the purpose of this? a chatbot? auto-generating text? for what?

Even when you're only taking the "ethical" route here, the idea of creating a technology with the intent and express purpose of spewing coherent-sounding sentences for any other usecase but simple entertainment sounds like something i'd want nobody to have their hands on.

We already are living in a place where there's massive amounts of misinformation and automatically generated texts with no substance are being sold to people and displacing away the work of real human beings that depend on their capacities, I don't think that making all of the sources "ethical" would fix this.

Also, software written by LLMs sucks, and I do not want it to exist.

Plenty of uses that would benefit the science community (see attached). The chatbot form, the lies about cost and need for copyright data is all that.. a lie.

The openly licensed model performs similarly to Qwen3, you don't need Opus 4.6 for 95% of the tasks you'd ever run.

The purpose of the technology isn't to spew coherently sounding paragraphs, but to solve problems by driving deterministic processes. Anyways, this is a longer discussion.

Bogdan Buduroiu

Sources:
- https://www.nature.com/articles/s41586-021-04086-x
- https://www.nature.com/articles/s41586-023-06004-9
- https://www.nature.com/articles/s41586-022-05172-4
- https://www.nature.com/articles/s41586-025-08628-5

Benefits like which? Only one of those publications even mentions LLMs and it is for a very specific usecase.

Most of what I've seen in the science world, when it comes to the specific usecase of LLMs, has been a series of unfortunate moments in which several recent science papers were entirely hallucinated by LLMs.

It seems like this technology just is not worth it, at least not right now, as it has led to much more pain than it has led to an actual advancement in the way people interact with information and research topics, being primarily usable as a form of cheap, junk food-esque content which is hard to verify, hard to discern from regular human-made content, and produced by a system which is inherently not good at being accurate.

I really do not have the energy to continue this conversation, but you do seem like the type to throw links pointing at the vague idea of progress and science, without actually understanding the actual social, economic and structural problems with the technology at hand. Doesn't matter if the nuclear bomb is made with "ethically sourced plutonium" instead of crushed up kittens, if you're going to use it primarily for worsening, and possibly ending, people's lives.