@budududuroiu That’s not why people were mad at Cory Doctorow. They were mad because in the same piece he called anti-AI sentiment “purity culture,” framing it as reflexive, unthinking moralizing.

If not for that element, it barely would have been a ripple. Witness that feature article a few months ago about how Ed Zitron, one of the leading critics of AI, also represented AI companies in his marketing firm. That was way more salacious and barely got any play here at all.

@maxleibman really not here to fight, so please don't take it as such, but...

> Using LLMs isn’t always popular with the cool crowd, Cory knows that. And he wants to defend his (quite modest) use, which I understand:

> Nobody likes their problematic behavior being pointed out to them. But as outlined: Life’s complicated.

Why would using an LLM be "problematic", unless there was a purity culture at play?

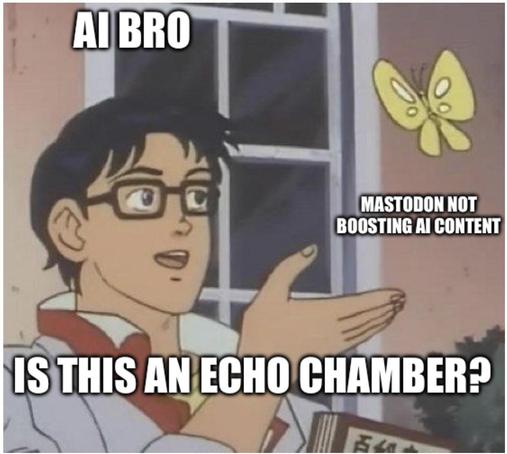

I digress. Mastodon is a great platform that lets you curate your own feed to your liking. Sometimes there are cross-interactions between Mastodon bubbles, and so far, anecdotally, the cross-interaction that has bled into the ML/AI research space has been pretty negative, for no reason. Some people think that negativity is good, and that the ML/AI research space does not belong here. I guess that's where defederation comes in.

Acting ethically in an imperfect world

Life is complicated. Regardless of what your beliefs or politics or ethics are, the way that we set up our society and economy will often force you to act against them: You might not want to fly somewhere but your employer will not accept another mode of transportation, you want to eat vegan but are […]

@budududuroiu @maxleibman it's "Problematic" because of the costs of building such a technology.

i did not ask my servers to be scraped 24/7 by amazon, openai, google and anthropic, so they can leech off the software i make, and create tools they claim can be used to replace people like me

terabytes of traffic coming from a bunch of datacenters, making electricity extremely expensive for those living near them, taking egregious amounts of water, just so that their LLMs can be used by big corporations to automatically generate poorly thought-out software and low-quality sources of information, and to try to create a perfect worker out of a compulsive liar specifically engineered to be good at bullshitting

LLMs at this scale are inherently unethical, and this is not coming from people who hate them for no reason. This is coming from artists, programmers, and designers who disagree with huge corporations taking what they painstakingly made to feed their corporate delusions

@nelson @maxleibman well, we're not talking about frontier AI labs, we're talking about LLMs.

There's nothing inherently problematic about this, to the point you can train an LLM from scratch that only uses public domain data, for a couple thousand bucks. The perception that LLMs must use copyrighted data and billions of dollars is false and imo, a marketing ploy

The Common Pile v0.1: An 8TB Dataset of Public Domain and Openly Licensed Text

Large language models (LLMs) are typically trained on enormous quantities of unlicensed text, a practice that has led to scrutiny due to possible intellectual property infringement and ethical concerns. Training LLMs on openly licensed text presents a first step towards addressing these issues, but prior data collection efforts have yielded datasets too small or low-quality to produce performant LLMs. To address this gap, we collect, curate, and release the Common Pile v0.1, an eight terabyte collection of openly licensed text designed for LLM pretraining. The Common Pile comprises content from 30 sources that span diverse domains including research papers, code, books, encyclopedias, educational materials, audio transcripts, and more. Crucially, we validate our efforts by training two 7 billion parameter LLMs on text from the Common Pile: Comma v0.1-1T and Comma v0.1-2T, trained on 1 and 2 trillion tokens respectively. Both models attain competitive performance to LLMs trained on unlicensed text with similar computational budgets, such as Llama 1 and 2 7B. In addition to releasing the Common Pile v0.1 itself, we also release the code used in its creation as well as the training mixture and checkpoints for the Comma v0.1 models.

@budududuroiu @maxleibman I am pretty sure that you'd still not get very good results at that scale, and in practice, the most widely used LLMs (be they independent and self-hosted, or big and corporate) are trained on copyrighted material.

And the idea of building an LLM at this scale still does not exactly make sense to me, what exactly is the purpose of this? a chatbot? auto-generating text? for what?

Even if you only take the "ethical" route here, creating a technology with the express purpose of spewing coherent-sounding sentences, for any use case other than simple entertainment, sounds like something I'd want nobody to get their hands on.

We are already living in a place where there are massive amounts of misinformation, and where automatically generated texts with no substance are being sold to people and displacing the work of real human beings who depend on their capacities. I don't think that making all of the sources "ethical" would fix this.

Also, software written by LLMs sucks, and I do not want it to exist.

Plenty of uses would benefit the science community (see attached). The chatbot form and the claims about cost and the need for copyrighted data are all just that: a lie.

The openly licensed model performs similarly to Qwen3, you don't need Opus 4.6 for 95% of the tasks you'd ever run.

The purpose of the technology isn't to spew coherent-sounding paragraphs, but to solve problems by driving deterministic processes. Anyways, this is a longer discussion.

Bogdan Buduroiu

Sources: - https://www.nature.com/articles/s41586-021-04086-x - https://www.nature.com/articles/s41586-023-06004-9 - https://www.nature.com/articles/s41586-022-05172-4 - https://www.nature.com/articles/s41586-025-08628-5

Benefits like what? Only one of those publications even mentions LLMs, and it is for a very specific use case.

Most of what I've seen in the science world, when it comes to LLMs specifically, has been a series of unfortunate incidents in which several recent science papers were entirely hallucinated by LLMs.

It seems like this technology just is not worth it, at least not right now. It has led to much more pain than actual advancement in the way people interact with information and research topics. It is primarily usable as a form of cheap, junk-food-esque content that is hard to verify, hard to tell apart from regular human-made content, and produced by a system which is inherently not good at being accurate.

I really do not have the energy to continue this conversation, but you do seem like the type to throw links pointing at the vague idea of progress and science, without actually understanding the actual social, economic and structural problems with the technology at hand. Doesn't matter if the nuclear bomb is made with "ethically sourced plutonium" instead of crushed up kittens, if you're going to use it primarily for worsening, and possibly ending, people's lives.