@budududuroiu That’s not why people were mad at Cory Doctorow. They were mad because in the same piece he called anti-AI sentiment “purity culture,” framing it as reflexive, unthinking moralizing.

If not for that element, it barely would have been a ripple. Witness that feature article a few months ago about how Ed Zitron, one of the leading critics of AI, also represented AI companies in his marketing firm. That was way more salacious and barely got any play here at all.

@maxleibman really not here to fight, so please don't take it as such, but...

> Using LLMs isn’t always popular with the cool crowd, Cory knows that. And he wants to defend his (quite modest) use, which I understand:

> Nobody likes their problematic behavior being pointed out to them. But as outlined: Life’s complicated.

Why would using an LLM be "problematic", unless there was a purity culture at play?

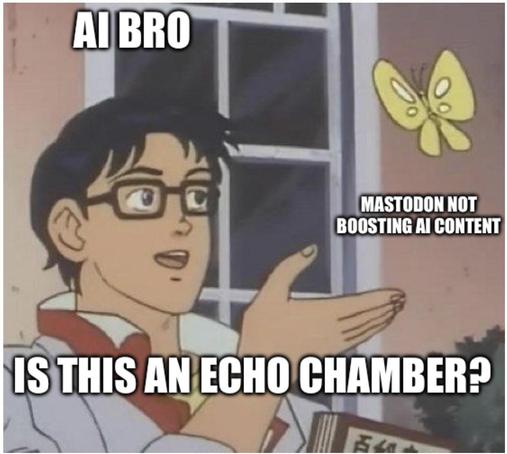

I digress. Mastodon is a great platform that allows one to curate their own feed to their liking. Sometimes there are cross interactions between bubbles of Mastodon; so far, anecdotally, the cross interaction that bled into the ML/AI research space has been pretty negative, for no reason. Some people think that negativity is good, and that the ML/AI research space does not belong here. I guess that's where defederation comes in.

Acting ethically in an imperfect world

Life is complicated. Regardless of what your beliefs or politics or ethics are, the way that we set up our society and economy will often force you to act against them: You might not want to fly somewhere but your employer will not accept another mode of transportation, you want to eat vegan but are […]

Okay, take LLMs out of the equation so we don't have whatever baggage we have about them hanging around.

Is the use of DDT problematic? It also "works" for its purpose. But it has serious issues.

If it is "problematic", does that mean we're engaging in purity culture over which pesticides are okay and which aren't?

If it's not problematic, what would you call it?

@kwazekwaze good analogy, I'll engage with it.

The purity culture argument would be that all pesticides are bad, no matter in what quantity, to what extent.

Pesticides have been one of the most significant ways in which the world, especially the Global South, increases food security. Boycotting pesticides as a whole would be an incredibly harmful process to the most vulnerable parts of the world.

Back to LLMs. Most of the research in making LLMs more efficient is done by hobbyists or nation states under sanctions (see China). More effective LLMs would mean a correction in investment into AI as a whole, and that would have a significant impact on the environmental destruction that happens in nations that extract minerals for chips, for example, or on vulnerable communities that have to bear the costs of data centers popping up next to them.

I see boycotting frontier labs as like boycotting DDT, but boycotting all LLM research as like boycotting all pesticides.

EDIT: efficient not effective

Those "more efficient" models don't avoid any of the serious epistemic issues with these systems and, to my knowledge, are largely focused on distillation of larger models, which is ultimately akin to ethics laundering. Energy efficiency is but one of the major issues here.

The entire conceit behind large models is also, in general, exploitative.

The more appropriate application of your angle to that analogy is that LLMs are DDT, the transformer is the pesticide.

@kwazekwaze This assumes that current knowledge hierarchies, even if unjust (a university in Lagos or Hyderabad still has to pay Elsevier an extortion fee even for publicly funded research), shouldn't be challenged. Nothing is laundered, imo it's a more aggressive form of redistribution.

What would a non-exploitative baseline for an LLM look like? No model trained on large internet corpus data? Everyone being compensated for text that exists because millions of people wrote it into a commons with no expectation of compensation, under no coherent property regime? A world where everyone is compensated? By whom, how, for what marginal contribution to a model weight?

Would LLMs being trained on the entirety of human knowledge and contributed back into 'The Commons' for the benefit of humanity (drug discovery, protein folding, LLMs exhaustively testing hypotheses for humans to verify) be ethical? Because that's what I'm totally for, and the only plausible alternative I see.

@budududuroiu @maxleibman

> What would a non-exploitative baseline for an LLM look like?

I don't know how you make a plagiarism machine not a plagiarism machine and I'm not interested in answering that question as I've no interest in automating plagiarism.

@kwazekwaze again, what I'm trying to say is that's a point of view afforded to you by your circumstances.

I'm sure someone outside the Western/OECD world wouldn't give two shits about plagiarism when they have to effectively pay rent to a company in the US for them having a piece of paper (a patent).

Yeah, you're not going to convince me that paying rent to American companies (distillation doesn't solve this; it just creates another middleman) for access to the theft engine is the answer to capitalism.

@maxleibman it's 2026, and technologists are making "you wouldn't download a car" type arguments