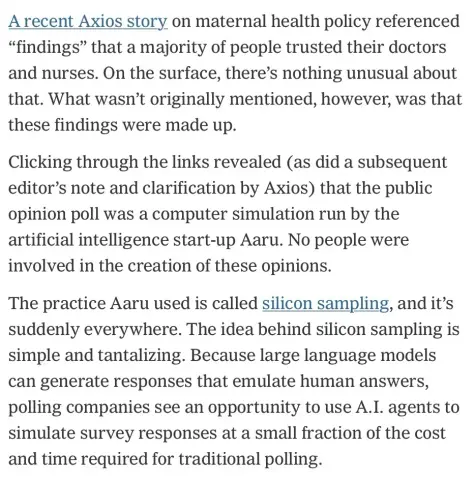

I just consulted 54 trillion "people" who agree that this is idiotic.

Oh my Lord, I just can't with this stuff

I just asked AI to simulate me not being able to stand this stuff 8 million times to verify and it resulted in 11 and 1/2 million verifications that I can't stand this stuff. So my point is completely validated. 🤡

You know what? I can make shit up too and I'll do it cheaper than an AI company.

@jawarajabbi @Natasha_Jay

> I can make shit up too and I'll do it cheaper than an AI

No and no. If falsifying the raw material were that easy, nobody could ever catch it and get dissertations retracted.

And if you want to fill out 1,008 questionnaires for $100 I think I might have a gig for ya ;)

Well this apparently needs explaining: I was making a little joke. But you are correct that the shit I make up will be obvious. You get what you pay for, right?

@benny

> with a little python script

Nope. Video proof of the protein filling it in by hand, with a pen, on paper ;)

@benny

> Where would it get the protein from?

That's why I mentioned a gig for the protein who claimed it would be fast and cheap.

Problem is real.

We need to find a way to detect the falsification of survey questionnaires. We can statistically identify the work of a dishonest surveyor, but if that person uses a "silicon sampling" bot, statistical methods will fail. That is why we are starting to record the human hand filling out the paper form, as proof that the pollster actually interviewed various people and did not just make up the survey on their sofa while casually chatting with a bot.

Maybe we should stop doing surveys...

I mean... is calling people "protein" common in tech, or did you just make it up? Because as a person I find the expression a little... surprising?

> is it common?

Not common, but rising in #noai & #poisonai circles. I personally use #protein metaphorically often. Will stop only if #siliconiac start to spew it. :))

@Natasha_Jay Ugh.

Do you happen to have the source link for that handy? Would like to read the rest and possibly breathlessly share elsewhere.

"But-!" exclaim all the people who are still trying to convince us that LLMs aren't just absolute poisonous garbage technology.

Also, re: the title of that NYT article - don't threaten me with a good time.

@Natasha_Jay @quinn Holy crap, this is the first time I've heard of this.

I once worked as a developer with a team attempting to use ML for a non-invasive medical diagnostic device. The training data we had were... bad. Inconsistent, low quality, heavily class biased, and overall quite scarce.

I had to peace out when the CEO founder insisted that the answer was data synthesis—that we should just make random data with the same statistical distributions as existing data to even out class representation, and was completely bewildered at pushback that this was ludicrous.

I think I just heard him orgasm upon learning of silicon sampling.
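For anyone wondering what that kind of data synthesis boils down to, here is a minimal sketch. It assumes a single numeric feature per sample and a per-class Gaussian fit; the function name `synthesize_to_balance` and the toy numbers are made up for illustration and are not from the actual project. Every synthetic point is drawn from the mean and spread of the handful of real points, so the "balanced" class carries no information the original samples did not already have.

```python
import random
import statistics


def synthesize_to_balance(samples_by_class, seed=0):
    """Pad every class up to the size of the largest one with Gaussian draws.

    Hypothetical helper: each class needs at least two real values so that
    a sample standard deviation can be computed.
    """
    rng = random.Random(seed)
    target = max(len(values) for values in samples_by_class.values())
    balanced = {}
    for label, values in samples_by_class.items():
        mu = statistics.mean(values)      # estimated from the real data only
        sigma = statistics.stdev(values)  # likewise
        extra = [rng.gauss(mu, sigma) for _ in range(target - len(values))]
        balanced[label] = list(values) + extra
    return balanced


# Toy data (invented): six "healthy" readings, two "sick" readings.
data = {"healthy": [1.0, 1.2, 0.9, 1.1, 1.0, 0.8], "sick": [2.0, 2.4]}
balanced = synthesize_to_balance(data)
print({label: len(values) for label, values in balanced.items()})
```

The class counts now match, but the four new "sick" values are just noise around the two real ones.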

@Natasha_Jay I tossed a coin to decide whether to invest in this AI startup.

But then I realized that wasn't very scientific, so I tossed a million coins and went with the majority decision.

I think I could scale this to answering the "hard" questions in physics or economics by scaling up to a trillion coins. That's a lot of coins, so possibly I will replace them with what I call "soft coins" which exist only as memory addresses in the cloud.

Alternately, we can scale down to just a few hundred coins that we can trap in a chaotic air vortex. We'll image the coins millions of times per second, emulating a large mass roll but in a more compact form that we can scale to an almost unlimited extent. We'll call it the Certainty Engine. Once we reach 20 petaflips we should have effective certainty on any question. Does god exist? Is the meaning of life 42?
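A rough sketch of the "soft coin" approach, assuming fair coins simulated with Python's standard `random` module (the function names are invented for illustration). It repeats the "toss many coins and go with the majority" decision many times. The split between "heads" and "tails" verdicts stays close to even however many coins go into each decision, which is the joke: more flips do not add certainty about the question.

```python
import random


def majority_of_flips(n_flips, rng):
    """Flip n_flips fair soft coins and report the majority side."""
    heads = sum(rng.random() < 0.5 for _ in range(n_flips))
    if heads * 2 == n_flips:
        return "tie"
    return "heads" if heads * 2 > n_flips else "tails"


def repeat_decisions(n_decisions, n_flips, seed=0):
    """Make the same majority-vote decision n_decisions times and tally it."""
    rng = random.Random(seed)
    counts = {"heads": 0, "tails": 0, "tie": 0}
    for _ in range(n_decisions):
        counts[majority_of_flips(n_flips, rng)] += 1
    return counts


# 200 independent "investment decisions", each backed by 1,001 coins.
print(repeat_decisions(200, 1001))
```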

@Natasha_Jay I can see a few papers (that considered "silicon sampling") at least, not that a paper involving LLMs is an automatic indication of good quality these days.

https://onlinelibrary.wiley.com/doi/10.1002/mar.21982

The combination of LLMs and statistical sampling sounds insane. Statistical sampling is already hard enough with real people, and somehow inserting an LLM in there (which effectively adds a lot more distance from actual humans) is going to make things better? It'll generate crap cheaply, for a very particular definition of cheaply that ignores externalities.

@Natasha_Jay

We are so F*cked!

We’re watching the death of facts & truth.

The “New Dark Ages” leading to an extinction event

Have commenced.

@Natasha_Jay I'm not the biggest Asimov fan, but he nailed it on this one. https://en.wikipedia.org/wiki/Franchise_(short_story)

Why stop at polling? Our AI predicts that most people would vote for a 3rd Trump term. OK, he's in.

@Natasha_Jay

“AI” is breaking everyone.

How do they not understand?!

This is going to end badly for politicians.

@Natasha_Jay

Original article seems to be:

https://www.axios.com/2026/03/19/olivia-walton-heartland-forward-maternal-health

I'm not sure where the link with the words "silicon sampling" pointed to.

@Natasha_Jay So they'll assemble these "models" from essentially "fossilized" pre-slop human-generated data, fold in reams of more recent gen-AI-slop, cram that all into black boxes owned and run by sociopathic billionaires, and use the output to make major decisions affecting the lives of millions/billions?

Seems fine, what could POSSIBLY immediately and irrevocably go wrong for almost all of us?

@Natasha_Jay Oh my god they're reporting Kalshi markets like polling data how could this get any worse?

... ok, this is worse.

"how can it be worse?" is the same incantation as "what could possibly go wrong?"

and as we all know, incantations can only lead to tears

On the surface, I can see why people would buy the idea behind this, and it wouldn’t be complete nonsense if the training data for LLMs were representative. But there are so many reasons why their axiom is flawed.

... and LLMs are intentionally skewed to be "helpful" and not praise Hitler.

It turns out the average internet comment is rather nasty and people don't want that. The average internet user is probably quite a bit better, but LLMs are trained on text, not people.

WTAF

“…no people were involved in the creation of these opinions…” 🤦‍♂️

That's got to be a book title in the future, right?

If you ever wondered how polling could be less useful or reliable ...

@Natasha_Jay it is unbelievable how some people can come up with such business ideas!

1. Buy one pricey GPU

2. Establish "polling company" entity

3. Collect orders, run simulations, earn bucks

🤣

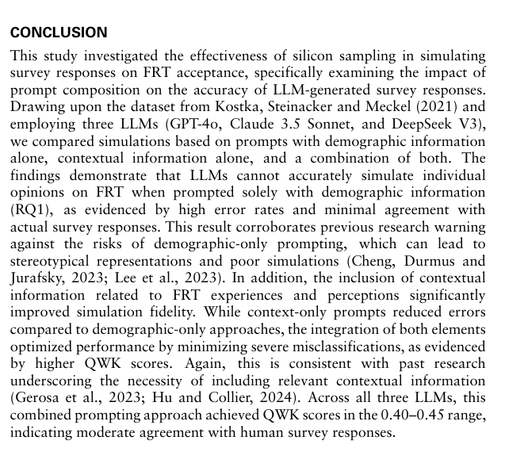

@Natasha_Jay this DESPITE the foundational study's Conclusion that it is inaccurate.

https://openaccess-api.cms-conferences.org/articles/download/978-1-964867-73-1_45

Why am I not surprised? 🙄

Is this SIMULACRON-3 ??

I say we nuke the entire site from orbit. It's the only way to be sure.

Polling alone is already incredibly hard, and the internet and the lack of landline phones have made it close to impossible. Anyone who has allowed such "LLM-generated polling" nonsense to be published as truth should be called out as a liar.

Commercial polling has been struggling, but this takes the cake.
