Dammit Jenkins!

LLMs, the grift that just keeps giving.

@oneloop Most ML looks for patterns in the data that map to a truth function (true/false), where the truth may be objective or based on expert opinions. A well-known example would be looking at patterns in X-rays to detect whether or not a fracture is present. It is still not going to get the answer correct every time, but it can also sometimes detect patterns not obvious to us.

An LLM however has no truth function, it just looks to autocomplete a prompt you give it (which you may think is a question with a true/false answer) based on the frequency with which words follow each other in the data set.
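To make the "frequency with which words follow each other" idea concrete, here is a deliberately tiny sketch. It is a toy bigram counter, not how a production LLM is built (those are neural networks that predict tokens from a long context); the corpus and the function names `train_bigrams` and `autocomplete` are made up for illustration.

```python
from collections import Counter, defaultdict

def train_bigrams(text):
    """Count how often each word follows each other word."""
    follows = defaultdict(Counter)
    words = text.lower().split()
    for prev, nxt in zip(words, words[1:]):
        follows[prev][nxt] += 1
    return follows

def autocomplete(follows, prompt, max_words=5):
    """Greedily append the most frequent follower of the last word."""
    out = prompt.lower().split()
    for _ in range(max_words):
        candidates = follows.get(out[-1])
        if not candidates:
            break  # no known follower, so stop extending
        out.append(candidates.most_common(1)[0][0])
    return " ".join(out)

# Hypothetical training text: the false claim simply appears more often.
corpus = (
    "the moon is made of rock . "
    "the moon is made of cheese . "
    "the moon is made of cheese ."
)
model = train_bigrams(corpus)
print(autocomplete(model, "the moon", max_words=4))
```

Nothing in the toy checks whether a completion is true; it only picks whichever continuation occurred most often in the text it was given.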

At best the LLM trainers will get sweated labour in the South to label sources for accuracy, so it may give a higher probability to strings of words in a Wikipedia or Reddit article compared to one in the Onion or conspiracy theories on Facebook or X.

Add to this the important 'chat' feature, where it is programmed to respond in a way that fakes being in a conversation such as you would be having with a human respondent.

And it never responds "I don't know". It always comes up with what looks like an answer, however poor the source or combination of sources may be.

> An LLM however has no truth function

> LLM trainers will get sweated labour in the South to label sources for accuracy

Ok, if you agree that they're training for accuracy, then they do have a "truth function".

You're mixing technicalities of the technology, with impact of the technology.

> And it never responds "I don't know".

You're mixing properties of the implementations that you've seen with properties of the technology. Furthermore, the premise that it never responds "I don't know" isn't even true. I'm afraid you're just repeating things that you've heard and haven't taken a minute to consider whether they're true.

Here's ChatGPT saying it doesn't know

Though of course it is a bit more nuanced than that.

The LLM training data set may include statements out there asserting that something is not known. And it may reproduce that, or it could randomly decide to give what looks like an answer.

You can attempt to put in improbability thresholds for the word salads that LLMs extrude but it still can't safely distinguish truths from the fictions that exist in its training data set.
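A rough sketch of what such a threshold might look like, assuming we already have counts of candidate continuations. The `answer_or_abstain` helper and the 0.8 cutoff are hypothetical, chosen only for illustration.

```python
from collections import Counter

def answer_or_abstain(counts, threshold=0.8):
    """Return the top candidate only if its share of the counts meets the threshold."""
    total = sum(counts.values())
    if total == 0:
        return "I don't know"
    word, n = counts.most_common(1)[0]
    return word if n / total >= threshold else "I don't know"

# Hypothetical counts of what followed "the moon is made of" in some data set.
mostly_fiction = Counter({"cheese": 9, "rock": 1})
evenly_split = Counter({"cheese": 5, "rock": 5})

print(answer_or_abstain(mostly_fiction))
print(answer_or_abstain(evenly_split))
```

The threshold only filters out cases where the data is split. A fiction that is repeated often enough clears it just as easily as a fact.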

"Supposedly" is doing a lot of heavy lifting here.

If you subscribe to the belief that capitalism has ANYTHING to do with rationalism, you are bedding down with the fantasies of scheming wealthhounds.

Economics is astrology for the rich.

Capitalism is the practice of fleecing those who are so rich that they don't notice what they pay to wealthhounds, to get permission to rip off the "poors". . .

Perhaps. But also an opportunity for me to explain the reasons BEHIND the sarcasm. And a victory for truth.

Yep, it's really that crazy.

Blog entry from 10 days ago puts it in historical context, looking at oligarchs in the 19th and 20th centuries manipulating markets and cornering them:

the boosts were at '420' before i came along...

There's a reason there's a qualifier before "intelligence".

😂 this is hilarious. too bad it was posted on that fascist site rather than on #Mastodon or even #bluesky.

(i'd like ex-twitter to have posts of screenshots from 💙here posted over ⛔there 😁) #fediverse

@MostlyHarmless It's not even RAM chips, it's RAM *wafers*, i.e. silicon that's been etched but not even cut, let alone encased in chips and soldered to a circuit board with support components, and the company buying the wafers does not even have the capacity to do all that.

It's just an evil plan to starve their direct competitors by making an essential hardware part unavailable / super expensive.

@ScriptFanix @MostlyHarmless Maybe they intend to build wafer scale AI systems?

Otherwise it would make sense to order modules. They can use the modules, and the wafers would still be taken off the market while they are packaged.

@MostlyHarmless

*But* for RAM this makes no sense, as it must be put alongside some computing chips to be useful, and I don't think the wafers bought have been designed that way…

And with wafer-scale chips you need to deal with a lot more issues, such as heat management, dedicated enclosures (they won't fit in a standard server) and so on.

@Eldeberen @ScriptFanix @MostlyHarmless Maybe they have some sneaky way to pair a memory wafer with a GPU wafer or portions thereof.

For example, slice them both into quarters and put two memory quarters and two GPU quarters side by side on a heat absorbing substrate, and bond wire them together.

Or they have something that can be put between two wafers to interconnect them in a stack. That would be even denser.

OAI is going to pull some sort of hardware innovation out of a hat. They need one.

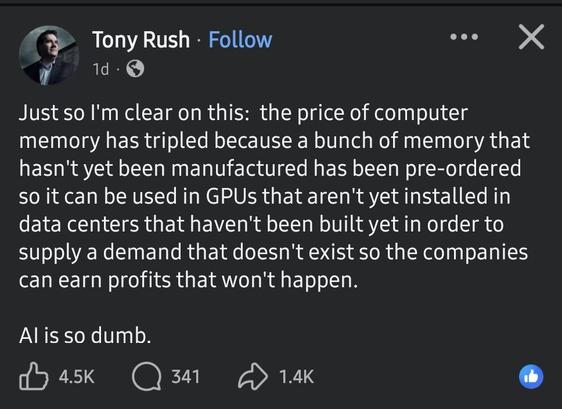

@MostlyHarmless @masek Just so I’m clear on this: the price of computer memory has tripled because a bunch of memory that hasn’t yet been manufactured has been pre-ordered so it can be used in GPUs that aren’t yet installed in data centers that haven’t been built yet in order to supply a demand that doesn’t exist so the companies can earn profits that won’t happen.

AI is so dumb.

Just so I understand this correctly: the price of computer memory has tripled because a lot of memory that has not even been manufactured yet was pre-ordered so that it can be put into GPUs that are not yet installed in data centers that have not been built yet, all to serve a demand that does not exist so that companies can make profits that will not materialize.

AI is so dumb.

{Transcription created using AI (Advanced Imitation).}

If profit is the mindless driver, what is the cost of the internet? Is personal data and routine info being fed into some advertisement/publicity data bank?

Yup.

Now if only we had rulers as clear sighted about futures markets as Lord Vetinari.