Consciously not using something ≠ prohibition

Edit: Also, who cares who worked on or envisioned this, or who works on it now? If you think about LLMs enough, you will likely see enough good arguments about the resource waste, centralization of power and multiplication of slop that characterize LLMs. We lived without them before and we can live without them in the future.

@joshuagrochow and the lack of moral compass or publicly stated ethical standards that would allow university employees to steal large enough sets. Small sets of text are read and understood by humans who can, far more efficiently, apply appropriate prior written and other formats of source material to a specific use case.

programming a calculator only makes reasonable sense if the computation requires enough repetition to warrant the resources used in building it, or it's a closed set without novelty... like, for example, a numerical calculator. ; )

edited for typos and clarity: it was killing me, apologies for the notification disruption.

The code for the LLM interpreter is relatively simple, and bears the same relationship to the actual LLM as the C compiler does to an operating system. The models are the real software and the ones big and complex enough to be useful are the product of large corporations and mass copyright violation.

@Gargron @df

Yes. The flowchart has three boxes:

1. Create LLM

2. Then a miracle occurs

3. Profit from AGI !!!

The companies pushing so-called "AI" have completed step 1. Some of them try to tell us that they've nearly got a handle on step 2, but that's just an attempt to swindle more investors. There is literally NOTHING that fits in the hole of step 2.

Transformers are neural networks.

LLMs are transformers wrapped in some Python scripting.

Every neural network can be accurately represented as an Excel sheet, even if it ends up having billions of cells.

Since it's just addition and multiplication, the model is fully deterministic. Same input, same output. Not intelligent.

It's Python code that does probabilistic sampling of the output. It's just a few lines of well-understood math plus a dice roll. Again, not intelligent.
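To make the split described above concrete, here is a minimal sketch in plain Python. Everything in it is a toy assumption for illustration (the `WEIGHTS` matrix, the three-word `VOCAB`, the function names); it is not how any real LLM is implemented, only a picture of "fixed multiply-and-add first, dice roll second".

```python
import math
import random

# Hypothetical toy "model": one weight row per output token (illustrative only).
WEIGHTS = [
    [0.5, -1.0, 2.0],
    [1.5, 0.25, -0.5],
    [-1.0, 1.0, 0.5],
]
VOCAB = ["cat", "dog", "fish"]


def logits(x):
    """Deterministic part: nothing but multiplication and addition."""
    return [sum(w * xi for w, xi in zip(row, x)) for row in WEIGHTS]


def softmax(scores, temperature=1.0):
    """Turn raw scores into probabilities that sum to 1."""
    scaled = [s / temperature for s in scores]
    peak = max(scaled)  # subtract the max for numerical stability
    exps = [math.exp(s - peak) for s in scaled]
    total = sum(exps)
    return [e / total for e in exps]


def sample(probs, rng):
    """The 'dice roll': pick one token according to the probabilities."""
    return rng.choices(VOCAB, weights=probs, k=1)[0]


x = [1.0, 0.5, -0.5]
scores = logits(x)            # same input, same scores, every time
probs = softmax(scores)
greedy = VOCAB[scores.index(max(scores))]  # no randomness: always the top token

rng = random.Random(0)        # seeded, so even the dice roll is repeatable
print(greedy, [sample(probs, rng) for _ in range(5)])
```

Note that with a fixed seed the sampling step is repeatable too, so this sketch only shows where the randomness enters; it does not settle whether determinism says anything about intelligence.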

@patrys @df @Gargron does determinism imply non-intelligence?

If you hooked up the computer to a Geiger counter for true random noise and used that to modulate the output, would that have any bearing on its intelligence?

Or from the other side, what makes you think our brains are non-deterministic, and why does that make us more intelligent than if the exact same history and sense-data always produced the same response?

@FishFace @df @Gargron If it’s deterministic, it can be unrolled into a giant lookup table. Did we kill phone books because they were on the verge of achieving AGI?

To me, intelligence implies a lot of things, like being able to form higher-order abstractions, learn, and thus remember things (no, being passed your “memories” as part of every prompt does not count). It also implies being curious.

@patrys @df @Gargron given that the lookup table would generally be infinite, I don't even see what that would have to do with anything. What about the Geiger counter?

I don't think those things are really needed for human-like intelligence, and something like curiosity can easily be simulated by a rules-based system.

@patrys LLMs are intelligent only in the sense of pattern recognition; that is, they possess logical intelligence. However, some psychologists argue that there are multiple intelligences that cannot be reduced to logic, nor are LLMs capable of possessing them. See psychologist Howard Gardner.

@FishFace @patrys @df @Gargron

"Or from the other side, what makes you think our brains are non deterministic"

Us having free will/being non-deterministic is pretty much the base assumption we all operate on to even be able to function as humans. That of course doesn't mean that it's automatically true, but it makes the question of why do you think your brain is non-deterministic a no-brainer to answer: because we can't help but perceive ourselves as such.

LLMs are Shannon 1948 as far as the theory goes (building on Markov, but adding computer technology). With some compression techniques.

But I think you're talking about something else entirely, not purely syntactical.
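For readers who haven't seen the Shannon/Markov idea referred to above, here is a rough sketch of a word-level second-order approximation: a table of which word follows which, sampled at random. The corpus and the function names are made up for illustration, and a real LLM is not literally a bigram table; this only shows the purely syntactic mechanism the post is pointing at.

```python
import random
from collections import defaultdict


def build_bigram_table(text):
    """Map each word to the list of words that followed it in the text."""
    words = text.split()
    table = defaultdict(list)
    for current, following in zip(words, words[1:]):
        table[current].append(following)
    return table


def generate(table, start, length, rng):
    """Walk the chain: repeatedly pick a random follower of the last word."""
    out = [start]
    for _ in range(length - 1):
        followers = table.get(out[-1])
        if not followers:  # dead end: this word never had a successor
            break
        out.append(rng.choice(followers))
    return " ".join(out)


# Hypothetical toy corpus, chosen only to keep the table small.
corpus = "the cat sat on the mat and the dog sat on the cat"
table = build_bigram_table(corpus)
print(generate(table, "the", 8, random.Random(1)))
```

Every adjacent word pair in the output occurred somewhere in the corpus, which is all the model "knows".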

imagine for a moment, the billionaires have been beheaded and the yachts sunk into the sea. the value in the output of workers 100% reinvested into local communities. all of it. none for colonial masters far away. the 20 hour work weeks and all human workers hands full of the satisfaction their efforts are meaningful... no more busy work for shareholders to skim value out of. only meaningful work. custom artisanal everything. housewares repaired by local handicrafters. clothes sewn and tailored to each body. homes and townhomes and communal living spaces built and maintained by cooperative owners. neighboring towns and regions and nations translating with loving care between the communities of meaning... interconnected with care. 💜

And that lasts 1-2 generations before new people who don't understand the problems that led their parents to create the paradise chafe under its constraints and begin changing the system into something its originators wouldn't like, thus creating conflict, diversity of thought, and continuing the cycle of history.

See: reality.

Machine vs. Human translation of fiction is an excellent analogy. Good translation involves an understanding of complicated material in an intuitive and nuanced way, and conveying those subtleties cleverly using equally complex forms in the target language while retaining the beauty of the writing. It involves much higher level thought than what LLMs do.

Likewise software engineering is much more complex and involves higher level thinking than prompted LLM code generation.

@Gargron You sound like me arguing against the inevitability of mass use of the cell phone.

I never understood why we gave up crystal clear audio, a two way simultaneous connection (yes, both parties could talk at the same time and hear what the other had to say), and phone books for unintelligible garbled speak, dropped calls, delays, and no way to look up the damn phone number.

@HappytoBe @Gargron You had crystal clear audio on your landlines?

We never had that (I'm vaguely jealous; ours was memorably trash). Even early cellphones were very comparable (current ones are much better; I never got exposed to the earliest ones, they were too expensive).

"and no way to look up the damn phone number."

I'm not sure if the harassment potential is why no one was interested in reimplementing it or if it was some manner of walled garden plans.

@benedictc @Gargron imagine the cost of the subscription if all of those companies worked with real money and had to turn a profit from the start.

Imagine that they had to pay real copyright fees for all the content used in training the models.

Imagine that any of the illegal uses of the training data and the people that died using their products had meaningful consequences in court.

Imagine that they had to pay the full tax, the full price of the services that they use.

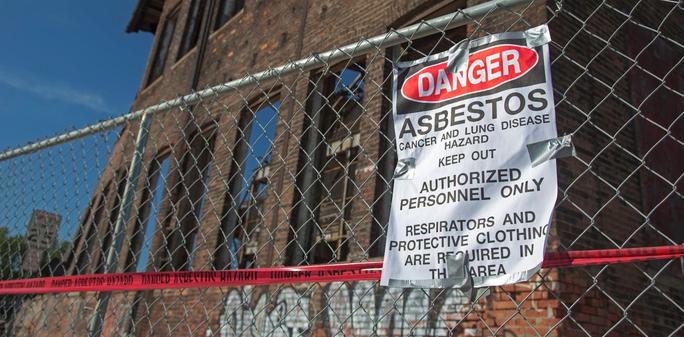

@Gargron if asbestos was invented last year it would be inevitable, I'm afraid.

When almost all legislative power has been captured by corporatism there's not much hope we could outlaw such poisons.

@Gargron It's hard to put the brakes on advances, like the Ghost Shirt Society finds out at the end of Vonnegut's Player Piano.

I heard an interview with a professor yesterday who wrote a book on the benefits of keeping cash alive and not relying completely on digital payment systems. He suggested using cash at least once a week. Maybe people will be able to do that with AI - limit their use and rely on their own brains at least some of the time. https://blogs.bu.edu/zagorsky/

I'm not setting foot in their COVID-loving plaguebearer environment if I can help it.

I could not agree more

failed technologies, like Zeppelin

@Gargron Yes, that's true. But there is something that outweighs the health argument: big business making money. Fossil fuels may not be the best option, but they earn money for the biggest players.

So it's a bit like disarmament: OK... we will ALL have atom bombs, but we are not going to use them...

Will it be the same with LLMs? They are actually a new weapon.

Stop producing weapons! They kill us all.

Am I being overly optimistic? Well, perhaps.

@Gargron you might need to define what you mean by "we" :)

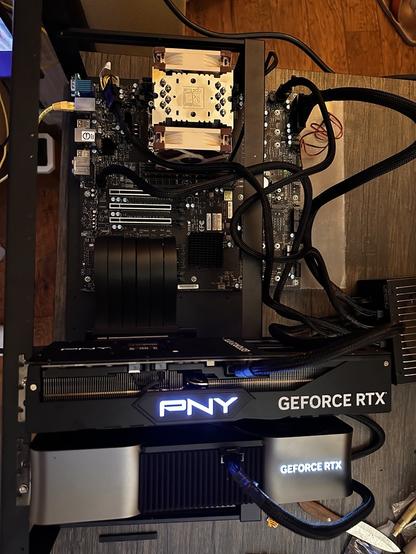

yes, here's a random example of consumer hardware used to train an LLM at home sabareesh.com/posts/llm-rig/

plenty more if you search