Here is a way that I think #LLMs and #GenAI are generally a force against innovation, especially as they get used more and more.

TL;DR: 3 years ago is a long time, and techniques that old are the most popular in the training data. If a company like Google, AWS, or Azure replaces an established API or a runtime with a new API or runtime, a bunch of LLM-generated code will break. The people who vibe code won't be able to fix the problem because nearly zero data exists in the training data set that references the new API/runtime. The LLMs will not generate correct code easily, and they will constantly be trying to edit code back to how it was done before.

This will create pressure on tech companies to keep old APIs and things running, because of the huge impact it will have to do something new (that LLMs don't have in their training data). See below for an even more subtle way this will manifest.

I am showcasing (only the most egregious) bullshit that the junior developer accepted from the #LLM. The LLM used out-of-date techniques all over the place. It was using:

- AWS Lambda Python 3.9 runtime (will be EoL in about 3 months)

- AWS Lambda NodeJS 18.x runtime (already deprecated by the time the person gave me the code)

- Origin Access Identity (an authentication/authorization mechanism that started being deprecated when OAC was announced 3 years ago)

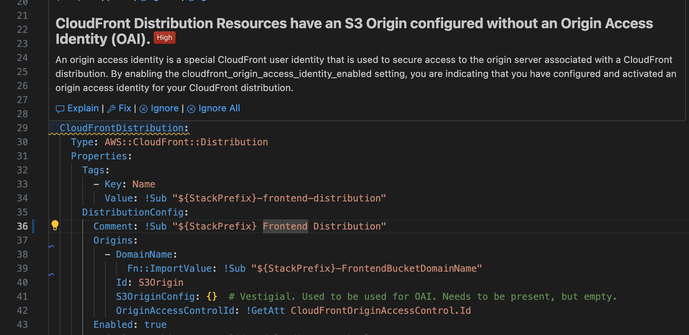
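A check like the one below would have caught the runtime problems in that list before the code reached review. It is only a minimal sketch: the `FLAGGED_RUNTIMES` set, the function name, and the sample template are hypothetical, and the scan is a plain line match on `Runtime:` rather than a real CloudFormation parser.

```python
import re

# Assumption: a hand-maintained set taken from the vendor's published
# deprecation schedule. The two entries are the runtimes named in the post.
FLAGGED_RUNTIMES = {"python3.9", "nodejs18.x"}

# Matches lines such as "      Runtime: python3.9" (optionally quoted).
RUNTIME_LINE = re.compile(r"^\s*Runtime:\s*['\"]?([\w.]+)['\"]?\s*$")


def find_flagged_runtimes(template_text, flagged=FLAGGED_RUNTIMES):
    """Return (line_number, runtime) pairs for every flagged Runtime: line."""
    findings = []
    for number, line in enumerate(template_text.splitlines(), start=1):
        match = RUNTIME_LINE.match(line)
        if match and match.group(1) in flagged:
            findings.append((number, match.group(1)))
    return findings


# Hypothetical template fragment, for illustration only.
SAMPLE = """\
Resources:
  ApiFunction:
    Type: AWS::Lambda::Function
    Properties:
      Runtime: python3.9
  EdgeFunction:
    Type: AWS::Lambda::Function
    Properties:
      Runtime: nodejs18.x
  NewFunction:
    Type: AWS::Lambda::Function
    Properties:
      Runtime: python3.12
"""

if __name__ == "__main__":
    for number, runtime in find_flagged_runtimes(SAMPLE):
        print(f"line {number}: {runtime} is on the flagged list")
```

The list has to be maintained by a human who reads the deprecation notices, which is the point: the knowledge comes from current documentation, not from whatever was popular three years ago.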

So I'm working on this dogforsaken codebase and I converted it from the out-of-date OAI to the new OAC mechanism. What does my (imposed by the company) AI-powered security guidance tell me? "This is a high priority finding. You should use OAI."

So it is encouraging me to do the wrong thing and saying it's high priority.

It's worth noting that when I got the code base and it had OAI active, Python 3.9, and NodeJS 18, I got no warnings about these things. Three years ago that was state of the art.

But we are not done.

Teach your developers to ignore #AI generated warnings with this one weird trick!

If you look at my previous post, under the erroneous "You should use OAI" message, there's a little icon and the word "Explain." You can ask the #LLM to explain the warning. Here's the explanation that Claude Sonnet 4.0 has for that warning. For those of you who don't want to read a screenshot, the LLM has prompted itself with:

Explain CloudFront Distribution Resources have an S3 Origin configured without an Origin Access Identity (OAI). issue in 70-frontend-stack.yaml at [29]

And the LLM's response to its own prompt is:

This is a false positive security warning. The issue is incorrectly flagged because:

You ARE using access control - Line 16-23 creates an Origin Access Control (OAC), which is the modern replacement for OAI

OAC is properly configured - Line 38 attaches the OAC: OriginAccessControlId: !GetAtt CloudFrontOriginAccessControl.Id

S3 bucket policy enforces OAC - Lines 120-135 ensure only CloudFront can access the S3 bucket

The security scanner likely only checks for the legacy OriginAccessIdentity property and doesn't recognize the newer OriginAccessControlId. Your configuration is actually more secure than using the deprecated OAI.

The warning can be safely ignored - your setup uses the AWS-recommended OAC approach for securing S3 origins.
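If the guess in that explanation is right, the fix on the scanner side is small: accept either property. Here is a rough sketch of such a check. The function names and sample fragments are hypothetical, it looks at the whole template rather than at each origin separately, and it says nothing about how the real scanner works.

```python
def _value_of(line, key):
    """Return the value on a 'key: value' line, or None if the key is absent."""
    stripped = line.strip().lstrip("- ")
    prefix = key + ":"
    if not stripped.startswith(prefix):
        return None
    # Drop surrounding quotes so that an empty '' counts as "not set".
    return stripped[len(prefix):].strip().strip("'\"")


def classify_origin_access(template_text):
    """Classify a template as 'oac', 'oai-legacy', or 'none'."""
    has_oac = False
    has_oai = False
    for line in template_text.splitlines():
        if _value_of(line, "OriginAccessControlId"):
            has_oac = True
        if _value_of(line, "OriginAccessIdentity"):
            has_oai = True
    if has_oac:
        return "oac"  # the newer mechanism: not a finding
    if has_oai:
        return "oai-legacy"  # protected, but worth a migration note
    return "none"  # this is the case that deserves "high priority"


# Hypothetical fragments, for illustration only.
MODERN = """\
Origins:
  - DomainName: example-bucket.s3.amazonaws.com
    Id: S3Origin
    OriginAccessControlId: !GetAtt CloudFrontOriginAccessControl.Id
    S3OriginConfig:
      OriginAccessIdentity: ''
"""

LEGACY = """\
Origins:
  - DomainName: example-bucket.s3.amazonaws.com
    Id: S3Origin
    S3OriginConfig:
      OriginAccessIdentity: origin-access-identity/cloudfront/EXAMPLEID
"""

UNPROTECTED = """\
Origins:
  - DomainName: example-bucket.s3.amazonaws.com
    Id: S3Origin
"""

if __name__ == "__main__":
    for name, text in [("modern", MODERN), ("legacy", LEGACY), ("unprotected", UNPROTECTED)]:
        print(name, "->", classify_origin_access(text))
```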

Thanks for wasting my time AND egregious amounts of electricity generating a pointless "high priority" security warning.

It is even worse than that: there will be a rush by folks looking to poison the data set, planting enough bad code with exploits they know about in the hope that it gets seeded in some x% of code bases, giving them plenty of low-hanging fruit to pick.

@shafik @paco Poison is a really interesting aspect of the whole LLM thing. Nightshade has only been out for a little while, and it doesn’t have a large install base, but you can already see its effects in “art” generated by the poisoned learn modality.

My point is, there are very clever people who can and will poison the LLM. While image poison offers no real opportunity for shenanigans, poisoned code bases have all sorts of ways they can be exploited, including backdoors, dead drops, and key forwarding.

And if you fire the real coders, and just have devs that accept echoes as real code, then the architecture will fail spectacularly.

This reminds me of the prophet Paul Riddell who once said “We can solve a lot of problems if we club all the MBAs like they were baby seals.”

@MissConstrue @shafik @paco Also, poisoning is an object lesson in how easy it is to pollute this stuff - reminder: The vendors behind all of it CANNOT WAIT!! to start polluting it in ways that favor themselves and/or anyone who pays them enough.

The output from these systems can never be remotely trustworthy & that's a fundamental technical limitation.

I'd be interested to know whether the models make any effort to distinguish working code from non-working code (in StackExchange, etc.). So many threads have the form: 1) Here is some code I wrote - why doesn't it work? 2) Lots of snippets and suggestions, but no integrated working version.

Not interested enough to investigate. I will have nothing to do with "AI" code.

@dhobern Yeah, "models making an effort" isn't a thing. Do the model trainers make an effort? Well, sure. Sorta. I mean, people try to classify data. There are armies of low-paid people in the global South spending hours doing tedious clicking on stuff to try to classify it.

From the LLM's perspective, it's more probabilities. If bad examples are more plentiful in the training data than good examples, or bad idioms are more common than good idioms, the output reflects that.
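A toy version of that, just to make the arithmetic concrete. This is not how an LLM works internally (a real model is not a lookup table), and the corpus counts are made up for illustration. It only shows that if you pick by frequency, the majority idiom wins regardless of whether it is the right one.

```python
from collections import Counter

# Made-up counts, for illustration only: nine old examples for every new one.
PROMPT = "restrict S3 origin to CloudFront"
TOY_CORPUS = (
    [(PROMPT, "OriginAccessIdentity")] * 9
    + [(PROMPT, "OriginAccessControlId")] * 1
)


def completion_probabilities(corpus, prompt):
    """Relative frequency of each completion seen after the given prompt."""
    counts = Counter(completion for seen, completion in corpus if seen == prompt)
    total = sum(counts.values())
    return {completion: n / total for completion, n in counts.items()}


def most_likely_completion(corpus, prompt):
    """The completion with the highest relative frequency, or None if unseen."""
    probabilities = completion_probabilities(corpus, prompt)
    if not probabilities:
        return None
    return max(probabilities, key=probabilities.get)


if __name__ == "__main__":
    print(completion_probabilities(TOY_CORPUS, PROMPT))
    print(most_likely_completion(TOY_CORPUS, PROMPT))
```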

By the time the output is coming to a developer, it's all just weights. Any "effort" to curate or improve is long done, and it's just output based on input. When people say things like "it can't be that stupid, you must be prompting it wrong" they're half right, because the only way to improve beyond whatever is baked in is for the user to add stuff to the current context themselves. But both can be true: it can be that stupid and you can be prompting it wrong. 😀

They are purely statistical inference; there is no notion of understanding good or bad code.

What is the saying they tell little kids in daycare, "you get what you get and you don't get upset"

This is why there are all sorts of tricks like writing a lot of tests and having detailed specifications. This "should" catch a lot of these obvious problems.

This does not guarantee the code is maintainable or that the code is not fragile or that the code is not some mishmash of different idioms that don't mesh well.

I guess an approach would be to hand curate some enormous repository of super high quality code, but this is a rightfully hard problem. If we could do this reliably at scale then all our problems would be solved and we wouldn't need all the tools we have.

Thanks for the reply - I fully understand this. I am a 40-year-career software engineer who can't imagine a situation in which I would want to replace my coding with statistically likely code-resembling text generation.

But I'm intrigued as a mental exercise by imagining someone 1) who thought this was a good idea and 2) who wanted to leverage useful information from examples in StackExchange-like discussions and 3) who was actually careful about what they were doing.

How would such a person go about filtering such examples to extract just those that seem to be working for some purpose? This is just one small aspect of the pre-training work that ought to have happened and that, I'm guessing, was skipped on the assumption that the errors would be swamped by the volume of examples.

The two most recent examples I've had of searching for useful code snippets on the web (one for reading a particular GPS sensor from Python, the other for a messy case of generating JavaScript code from PHP) both took me to multiple online examples, and in all cases, the only complete end-to-end code was the failing one submitted by the OP. So, blindly consuming all available examples would be disastrous.

If you reduce the training data only to the final most-upvoted replies that actually contain code blocks, the volume of this kind of training data on the web drops dramatically.
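As a thought experiment, that filter might look something like the sketch below. Everything here is hypothetical: the `Answer` record, the assumption that answer bodies are HTML with code in `<pre><code>` blocks, and the extra step of requiring each block to at least parse as Python. Parsing is a much weaker test than "works", so this only removes the most obviously broken material.

```python
import ast
import html
import re
from dataclasses import dataclass


@dataclass
class Answer:
    """Hypothetical record for one answer in a Q&A dump."""
    question_id: int
    score: int
    body_html: str


CODE_BLOCK = re.compile(r"<pre><code>(.*?)</code></pre>", re.DOTALL)


def extract_code(body_html):
    """Return the unescaped contents of every <pre><code> block."""
    return [html.unescape(block) for block in CODE_BLOCK.findall(body_html)]


def parses_as_python(snippet):
    """True if the snippet is syntactically valid Python (not proof it works)."""
    try:
        ast.parse(snippet)
    except (SyntaxError, ValueError):
        return False
    return True


def select_training_answers(answers):
    """Keep the top-scored answer per question, if it has parseable code."""
    best = {}
    for answer in answers:
        current = best.get(answer.question_id)
        if current is None or answer.score > current.score:
            best[answer.question_id] = answer
    kept = []
    for answer in best.values():
        blocks = extract_code(answer.body_html)
        if blocks and all(parses_as_python(block) for block in blocks):
            kept.append(answer)
    return kept


# Made-up sample data, for illustration only.
SAMPLE_ANSWERS = [
    Answer(1, 5, "<p>Try this:</p><pre><code>print(1)\n</code></pre>"),
    Answer(1, 2, "<p>Have you checked the wiring?</p>"),
    Answer(2, 9, "<p>Read the manual.</p>"),
    Answer(2, 1, "<pre><code>x = 1\n</code></pre>"),
    Answer(3, 4, "<pre><code>def f(:\n</code></pre>"),
]

if __name__ == "__main__":
    kept = select_training_answers(SAMPLE_ANSWERS)
    print(f"kept {len(kept)} of {len(SAMPLE_ANSWERS)} answers")
```

Even on this tiny made-up sample, four of the five answers are discarded, which is the volume problem in miniature.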

The largest other category of hopefully functioning online code (git repositories, etc.) tends to lack the contextual labeling ("How do I ... ?") that would make it good for code chatbottery of the "Build me a system that does X" kind.

The biggest frustration I have with the whole LLM/chatbot onslaught (well, the biggest other than the billionaire oligarch sociopathy of it all) is that it deliberately obfuscates the decomposition of big challenges into pieces that can only realistically be solved with the help of non-LLM modules. Knowing how those modules are being leveraged would make it so much easier to estimate the likely categories of error.

@paco

By displacing Q&A platforms, LLMs also suppress the generation of new training data about new APIs.

This makes sure that LLMs won't catch up on new stuff.

They poisoned the well from which they drank.