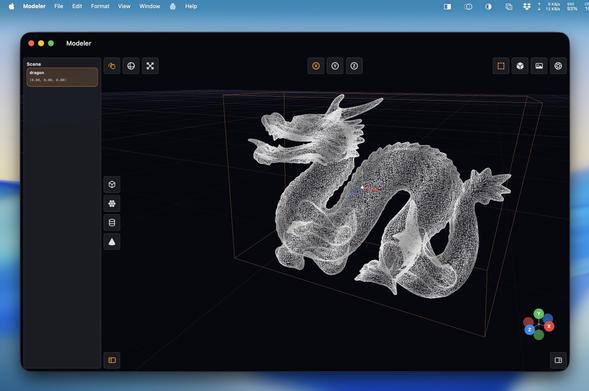

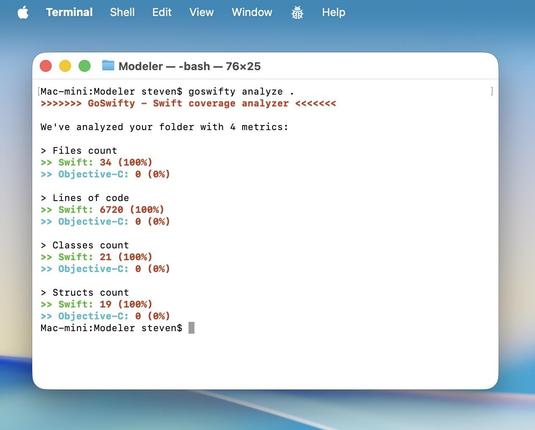

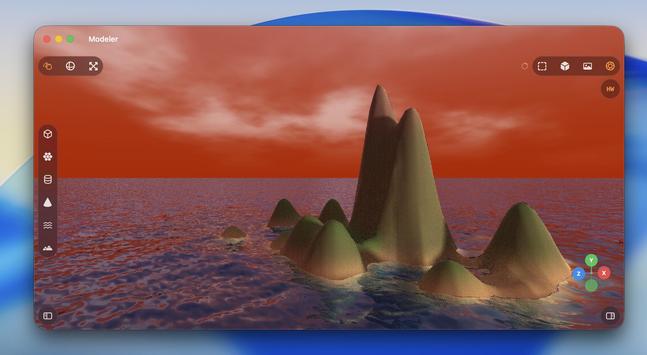

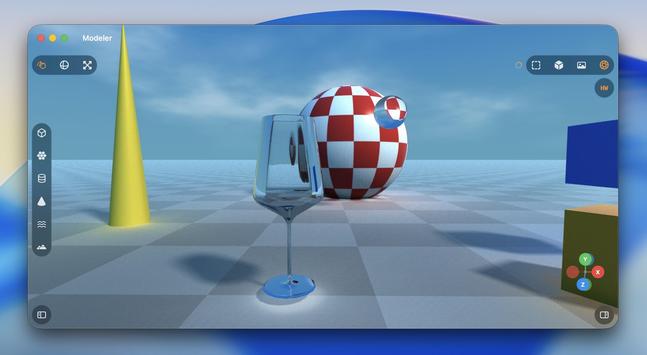

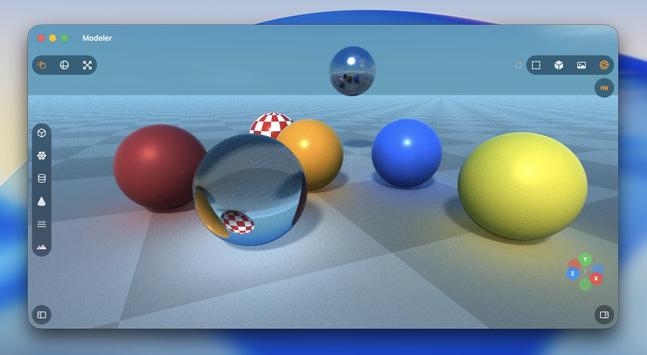

Operating well outside my level of expertise here with Codex, but it's doing a pretty credible job on a lot of complex Metal rendering code I would never be able to wrap my head around.

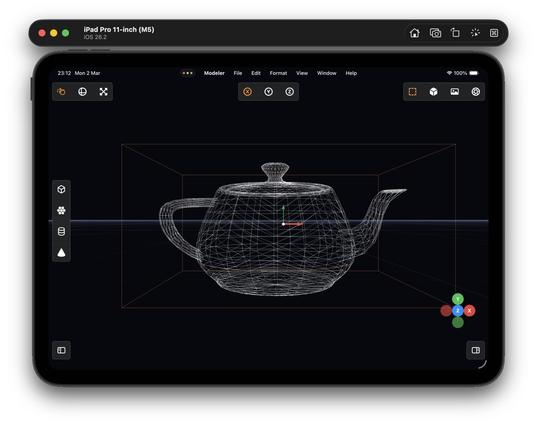

(Ignore the UV issues)

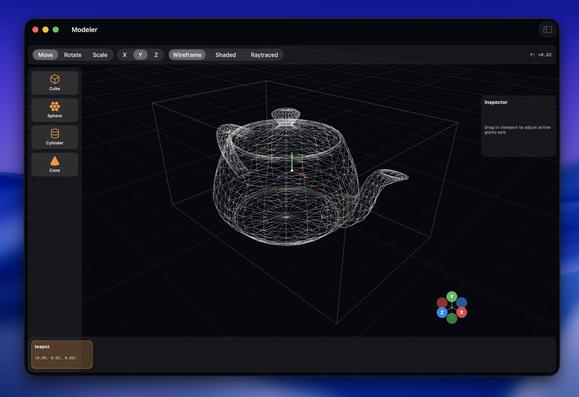

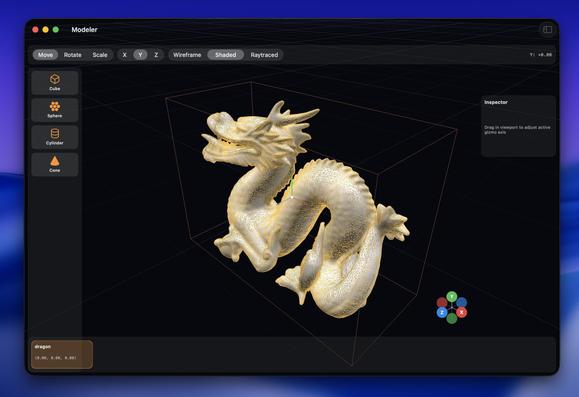

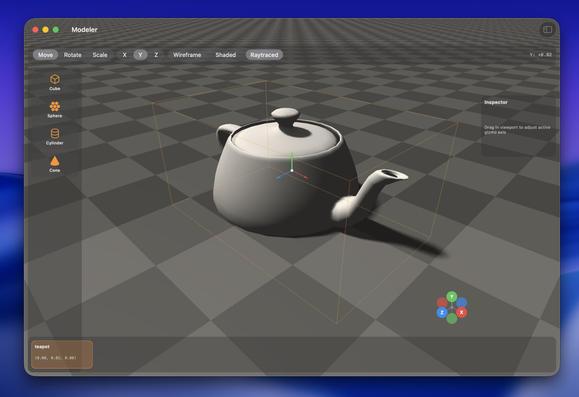

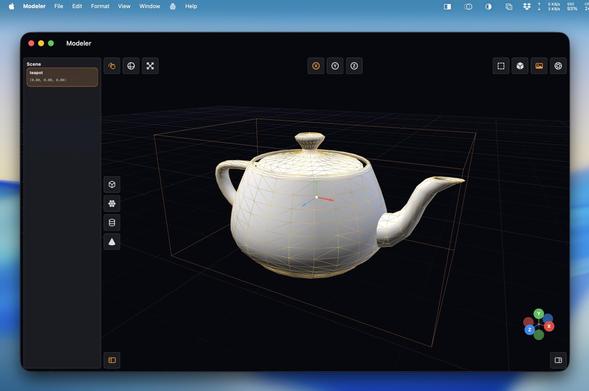

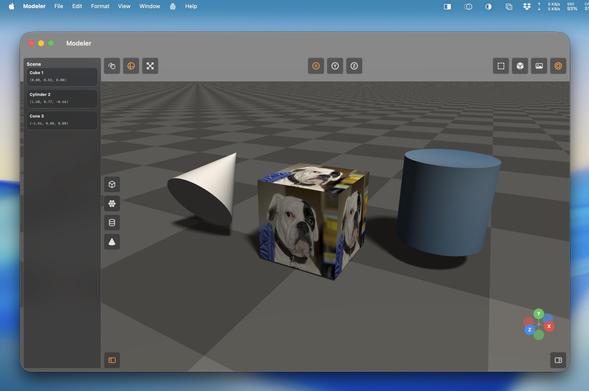

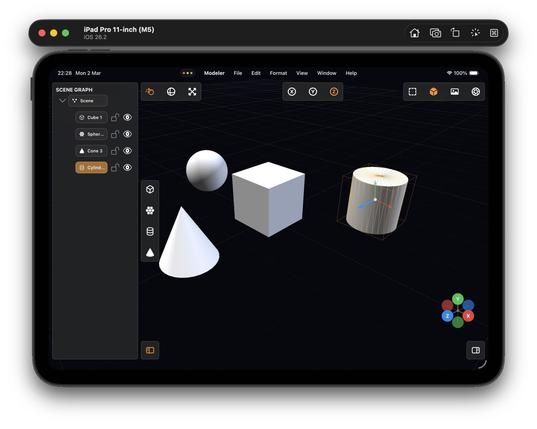

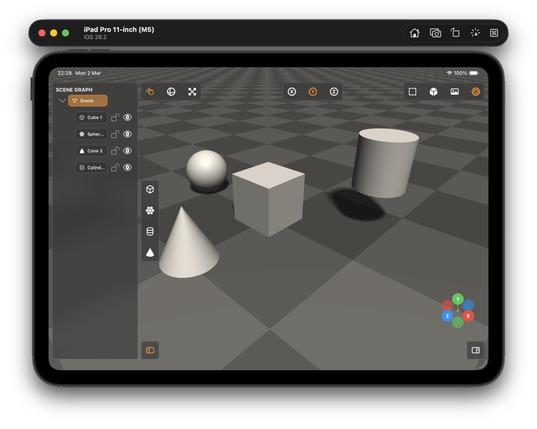

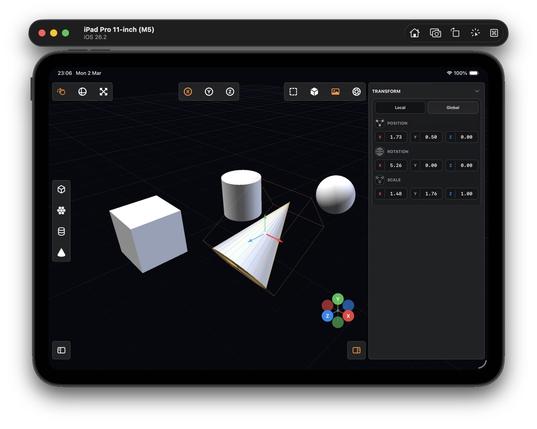

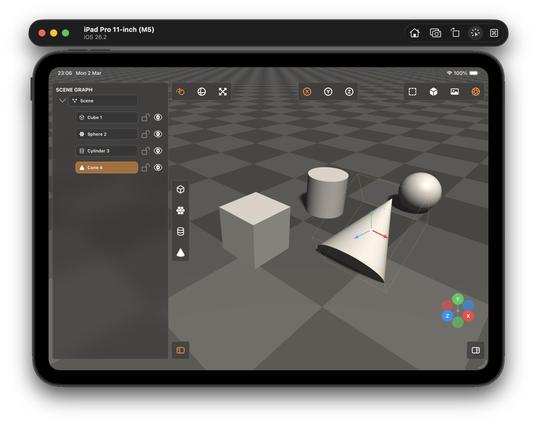

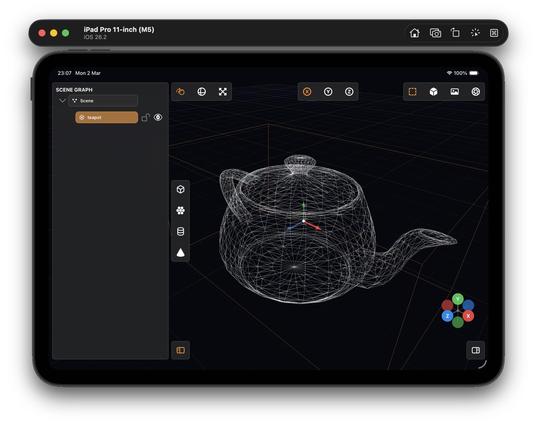

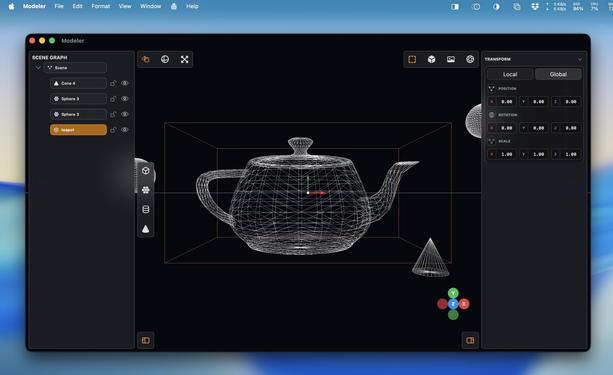

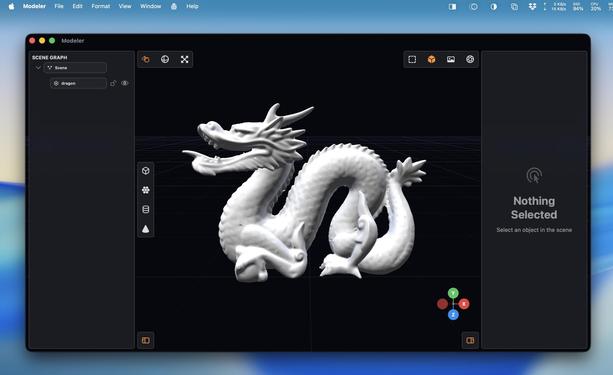

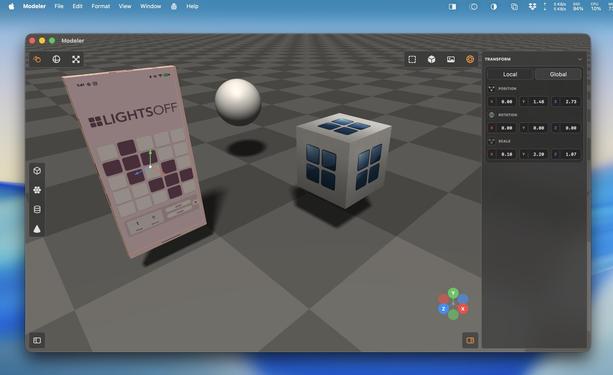

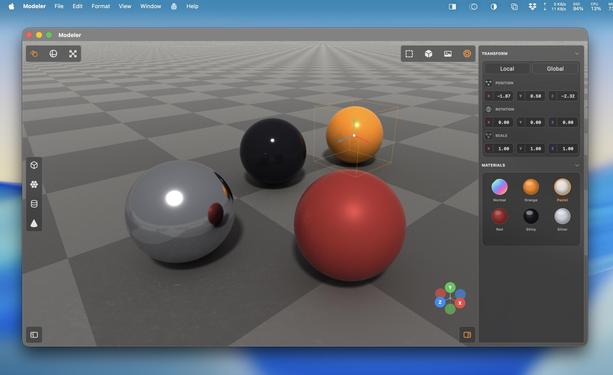

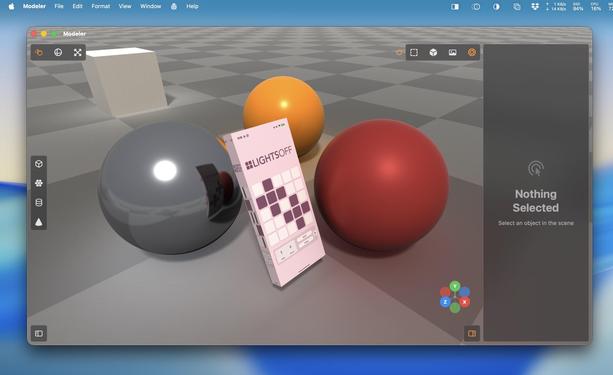

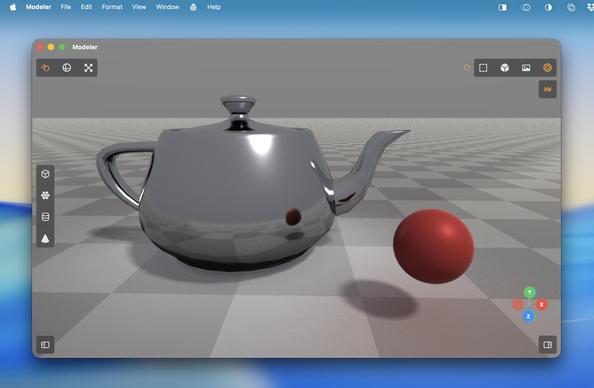

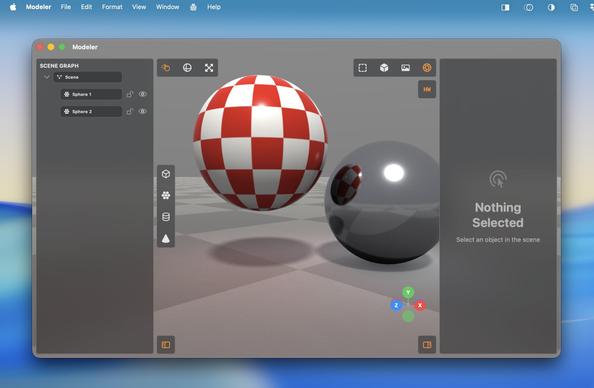

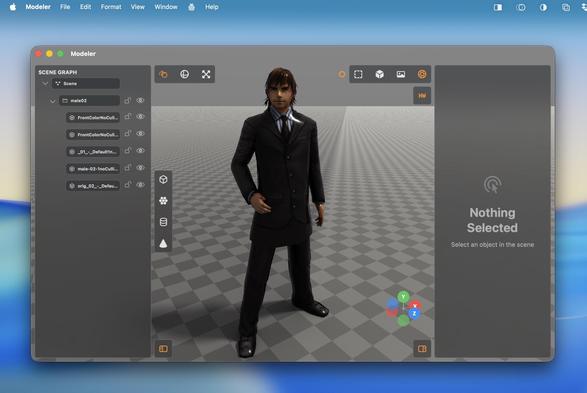

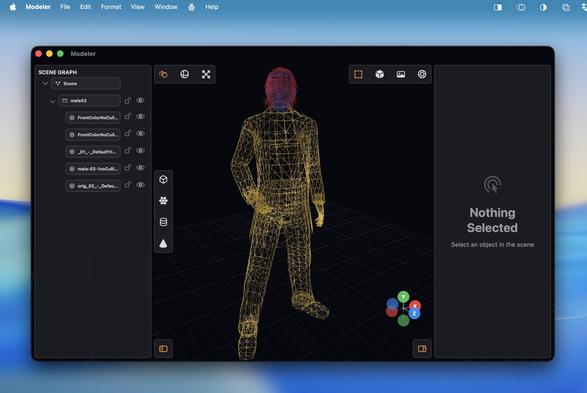

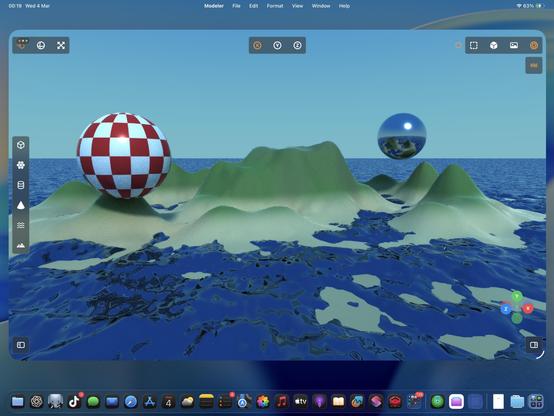

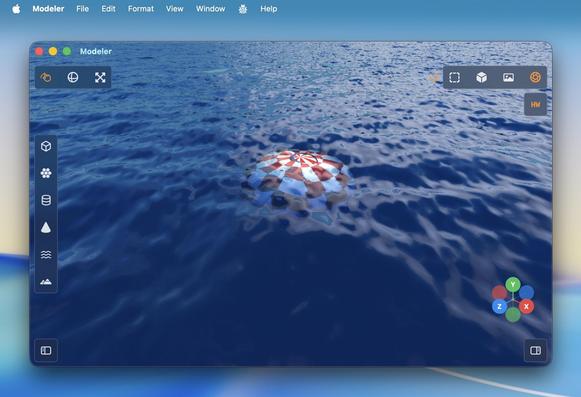

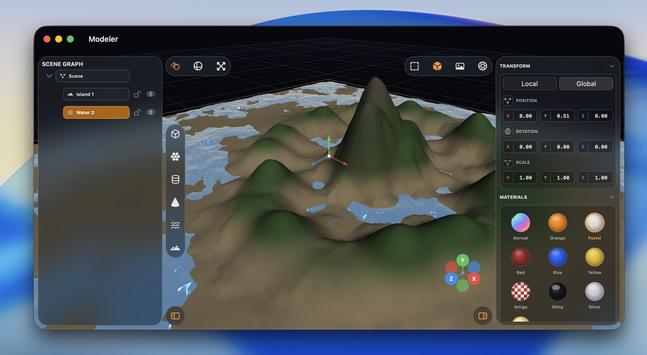

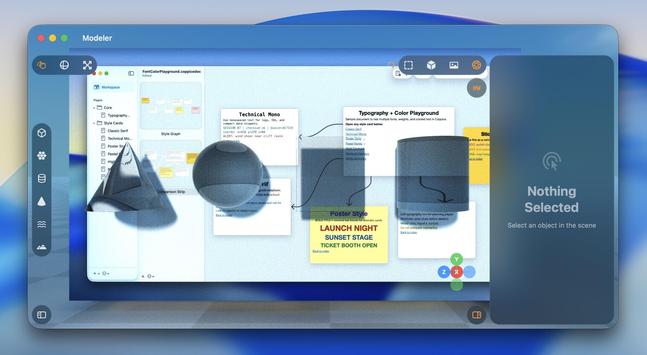

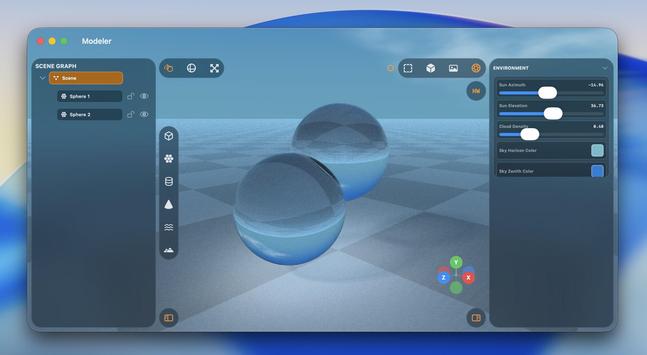

I started with a blank project template 5 hours ago. Now I've got a little 3D scene graph editor with gizmos, wireframe and shaded view modes, texturing, drag-and-drop OBJ file importing, and tile-based raytraced rendering, and it runs on Mac and iPad.
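For anyone wondering what "tile-based" means in practice, here is a minimal sketch of the general idea, not the code Codex actually wrote (I haven't shown that). It assumes the renderer splits the output image into fixed-size rectangles and renders them one at a time, so the image fills in progressively. The `Tile` type, the `makeTiles` function, and the 32-pixel tile size are all hypothetical names and values chosen for illustration.

```swift
// Hypothetical tile description: a rectangle of pixels within the output image.
struct Tile: Equatable {
    let x: Int
    let y: Int
    let width: Int
    let height: Int
}

// Splits an image into tiles of at most tileSize x tileSize pixels,
// row by row. Tiles on the right and bottom edges are clipped to the image.
func makeTiles(imageWidth: Int, imageHeight: Int, tileSize: Int) -> [Tile] {
    precondition(imageWidth > 0 && imageHeight > 0 && tileSize > 0,
                 "image dimensions and tile size must be positive")
    var tiles: [Tile] = []
    for y in stride(from: 0, to: imageHeight, by: tileSize) {
        for x in stride(from: 0, to: imageWidth, by: tileSize) {
            tiles.append(Tile(x: x,
                              y: y,
                              width: min(tileSize, imageWidth - x),
                              height: min(tileSize, imageHeight - y)))
        }
    }
    return tiles
}

// Example: a 100 x 70 image with 32-pixel tiles gives 4 columns x 3 rows.
let tiles = makeTiles(imageWidth: 100, imageHeight: 70, tileSize: 32)
print(tiles.count)
```

Each tile can then be handed to the renderer independently, which is what lets the UI stay responsive and show partial results while the rest of the frame is still being traced.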

Thanks Codex!

You know what I really could use at WWDC?

Teach a generative model to build high-quality vector SF Symbols so we can make custom ones, like say a full set for 3D modeling suites or PencilKit drawing apps, on demand 👀

…asking for a friend…

'Could somebody with no programming experience recreate Photoshop with an LLM?'

I have absolutely zero Metal and near-zero 3D modeling experience. I know the basics of how to use a scene editor, and the names of rendering terms.

And I effectively vibecoded all of this in less than half a day with Codex 5.3 Medium, based on screenshots of a cool-looking app I've never used (Valence3D) and just a general sense of what a 3D app should do.

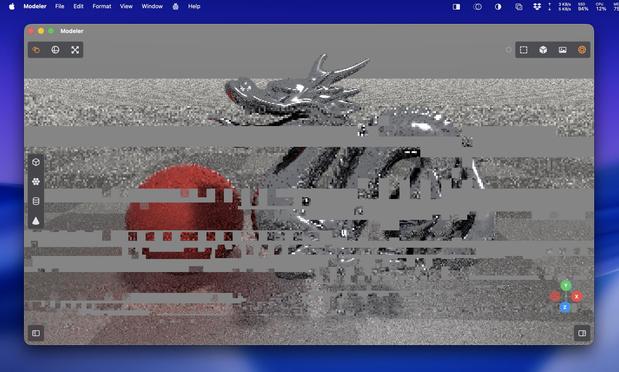

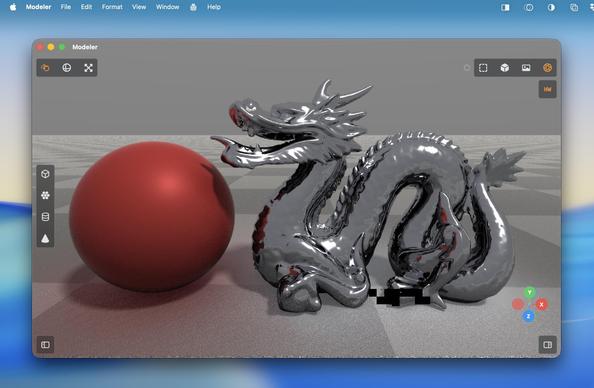

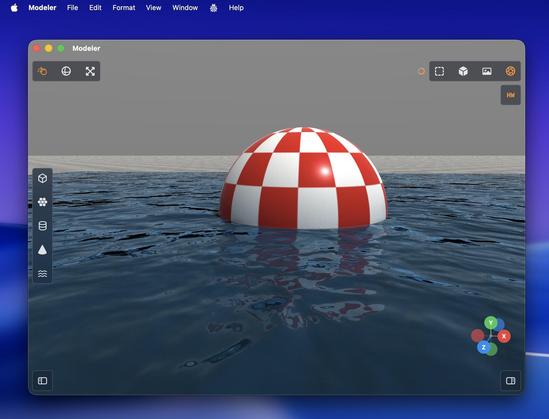

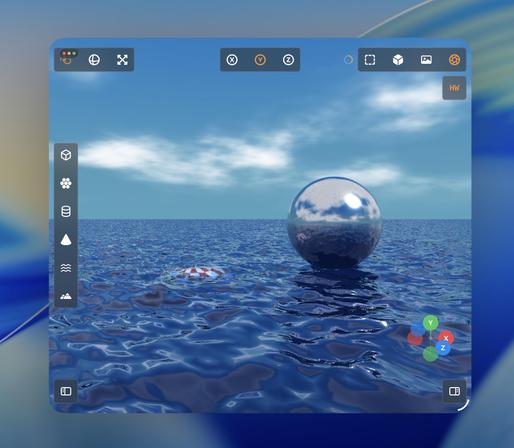

Turns out the M1 supports enough of the hardware-accelerated raytracing APIs that I get a massive speedup here, too?

Trying to parse Apple's Metal feature support tables is hurting my brain, so I'll just accept it and move on
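An alternative to reading the feature tables is to ask the device at runtime. The sketch below uses two real `MTLDevice` members, `supportsRaytracing` and `supportsFamily(_:)`. The `raytracingSummary` helper is a hypothetical name for illustration. As far as I understand it, `supportsRaytracing` reports whether the Metal ray tracing API is available on the device; it does not by itself say how that work is accelerated. I'm also assuming the M1 reports the Apple7 GPU family.

```swift
import Metal

// Hypothetical helper: summarizes what the default GPU reports about ray tracing.
func raytracingSummary() -> String {
    // MTLCreateSystemDefaultDevice() returns nil when no Metal device exists.
    guard let device = MTLCreateSystemDefaultDevice() else {
        return "No Metal device available"
    }
    // Whether the Metal ray tracing API can be used on this device.
    let api = device.supportsRaytracing ? "available" : "unavailable"
    // Whether the device belongs to (at least) the Apple7 GPU family.
    let family = device.supportsFamily(.apple7) ? "yes" : "no"
    return "\(device.name): ray tracing API \(api), Apple7 family: \(family)"
}

print(raytracingSummary())
```

Running this on the Mac and the iPad gives a quick answer about which code path each device can take, without having to cross-reference the tables by hand.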

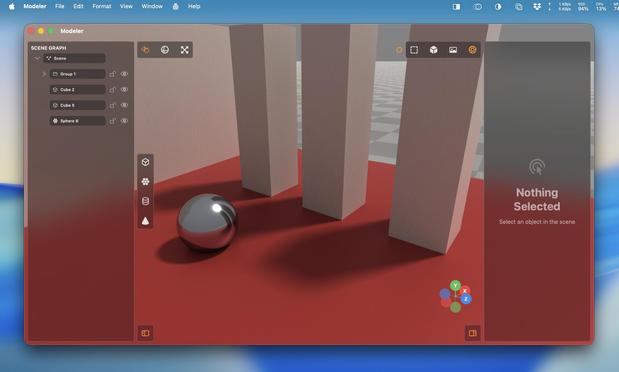

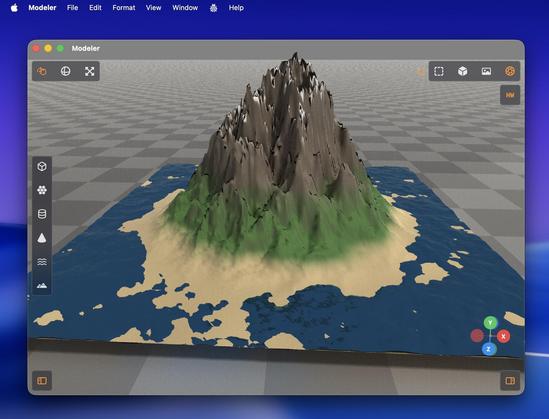

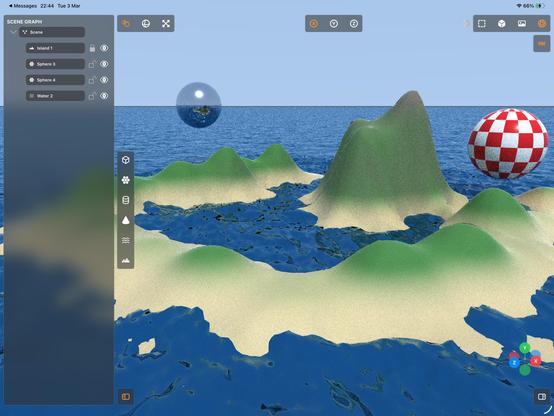

Recap: I vibecoded (code unseen, no plan) a 3D editor/renderer that has a scene graph, editing controls, primitives and gizmos, materials, procedural terrain and water, and hardware-accelerated Metal raytracing with soft shadows, clouds and bounce lighting, that runs on Mac and iPad.

Tool: Codex 5.3 Medium

Time: About a day's worth of work has gone into it

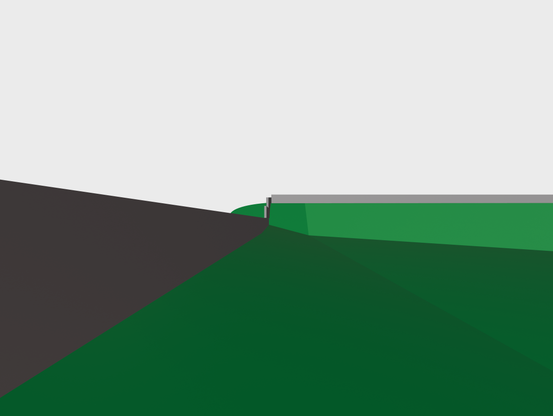

'Attenuated shadows'

A little better

@stroughtonsmith is this ever coming to TestFlight? It's making me miss Bryce so much

Sunsets next?

@stroughtonsmith is this going to be broadly available? Any chance for an iPhone version?

Seeing these updates has made me incredibly nostalgic for iTracer, which was a basic 3D rendering app for the original iPhone, but also the first app that made me think, “woah, this is literally just a small computer.”