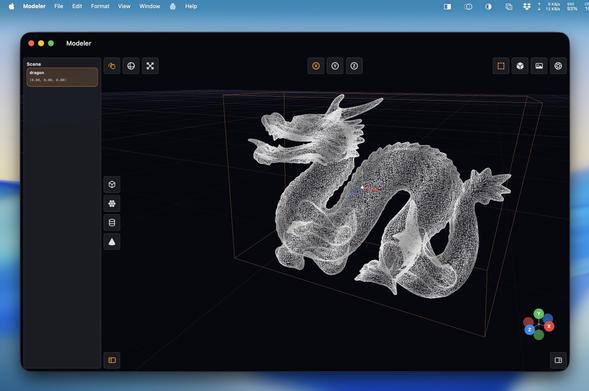

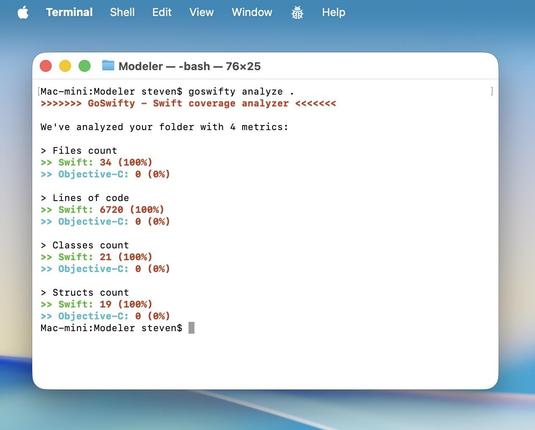

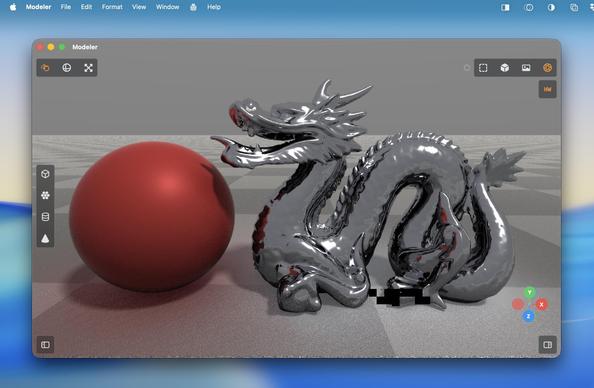

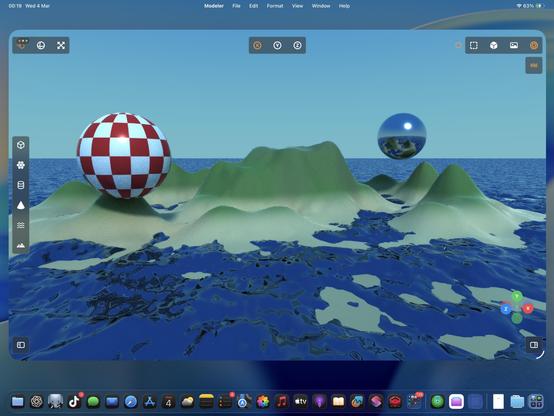

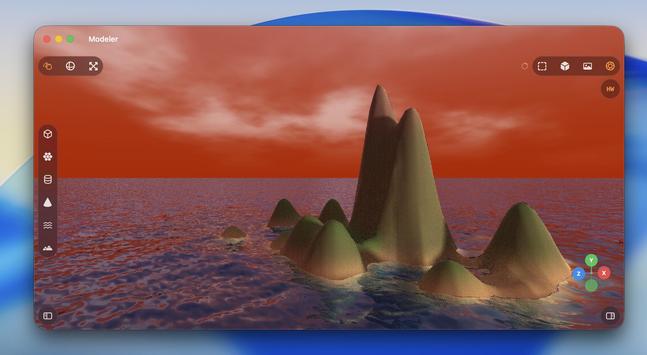

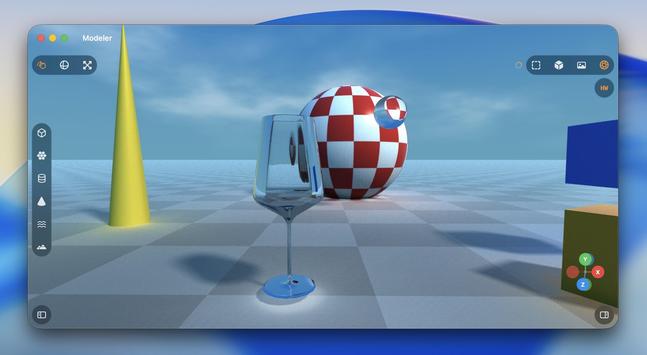

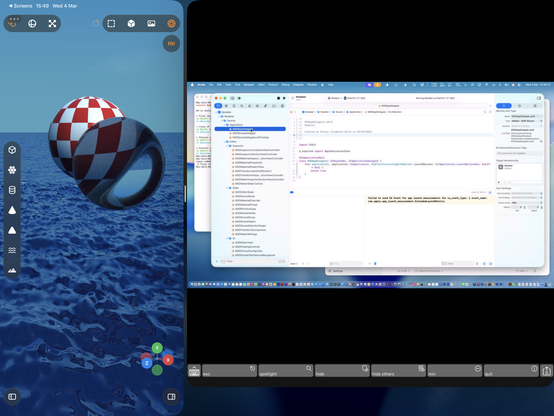

Operating well outside of my level of expertise here with Codex, but it's doing a pretty credible job at a lot of complex Metal rendering code I would never be able to wrap my head around

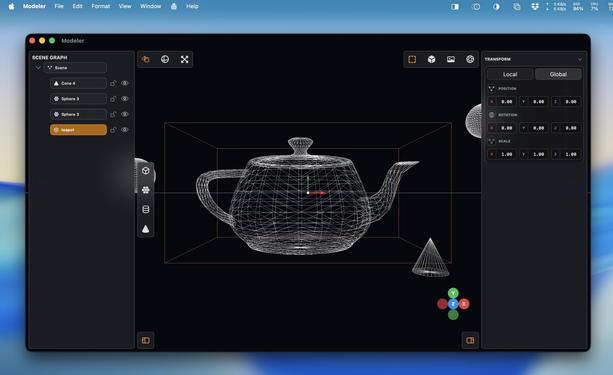

(Ignore the UV issues)

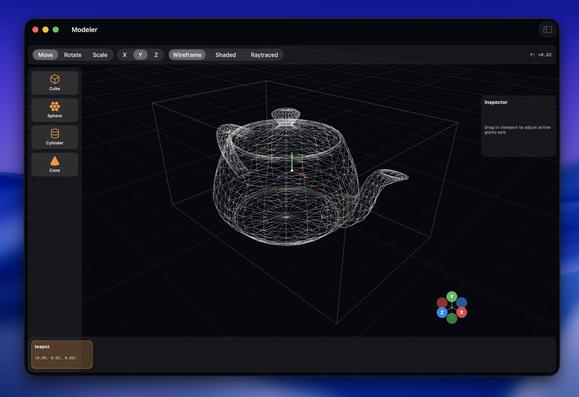

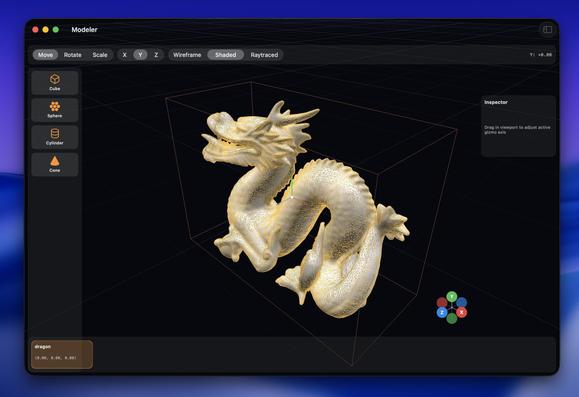

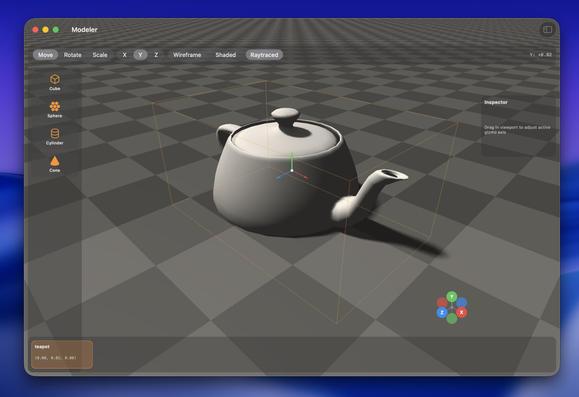

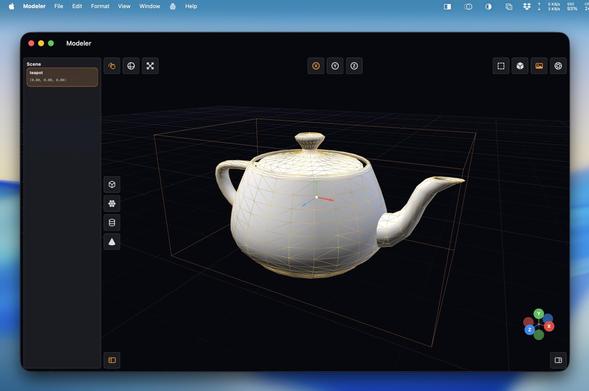

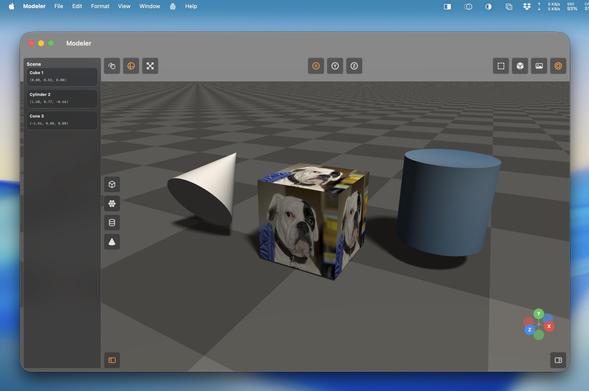

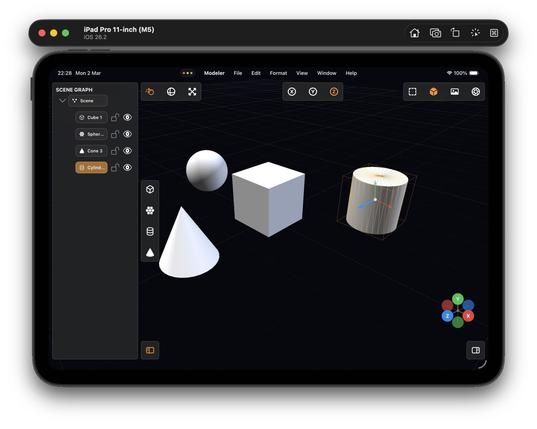

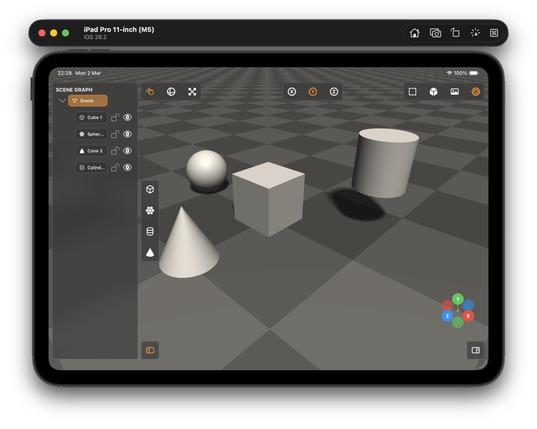

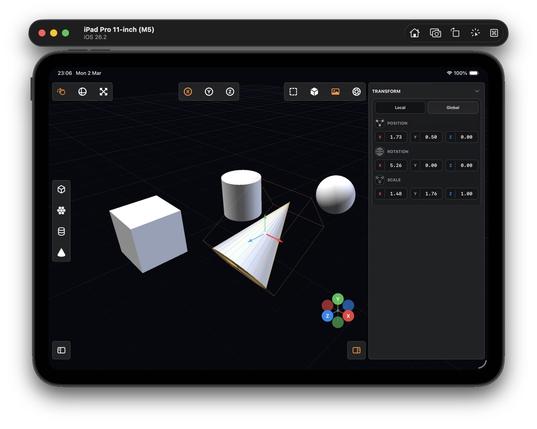

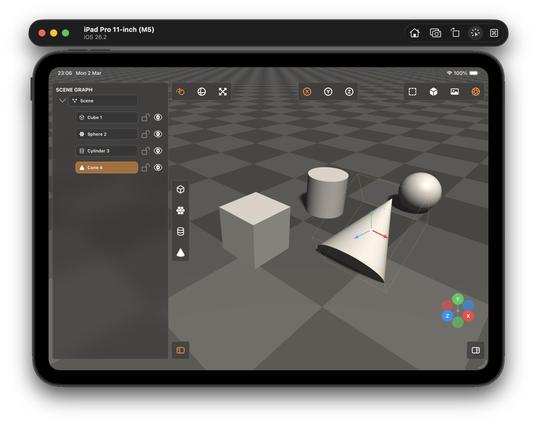

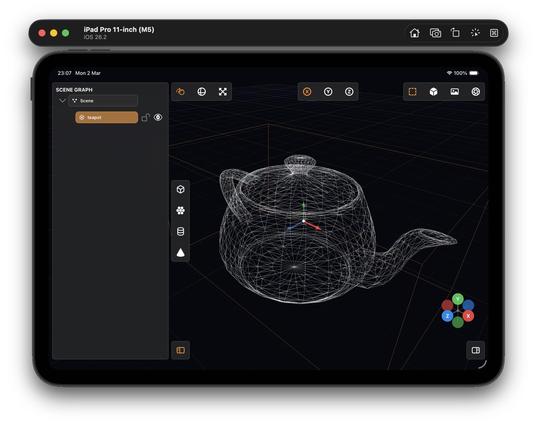

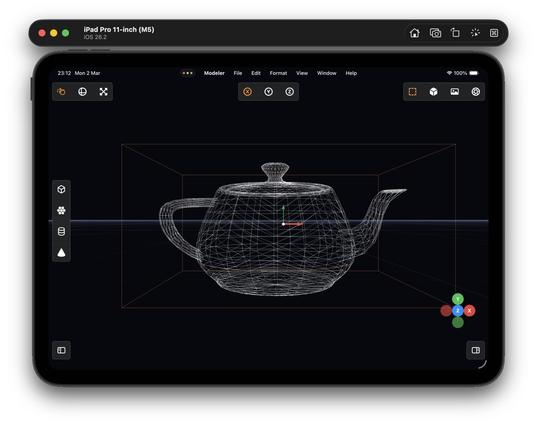

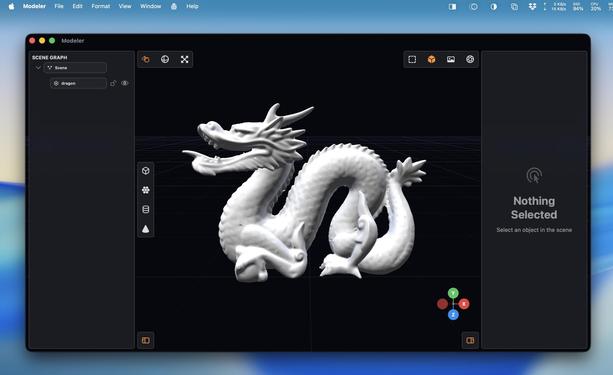

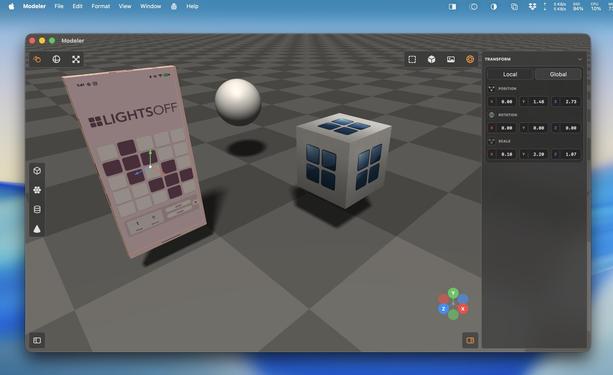

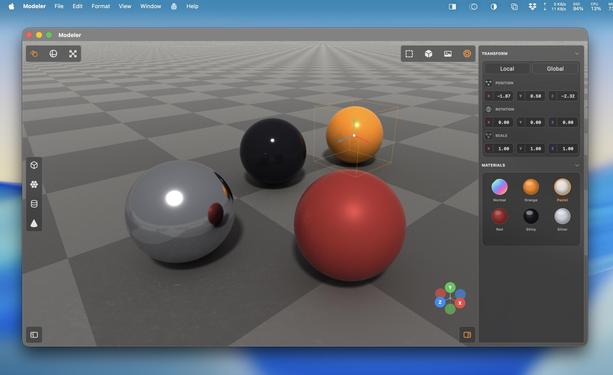

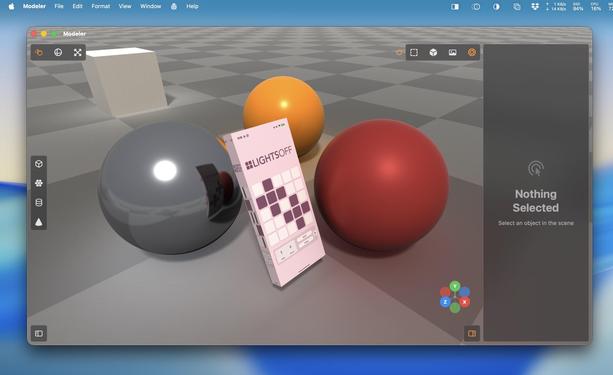

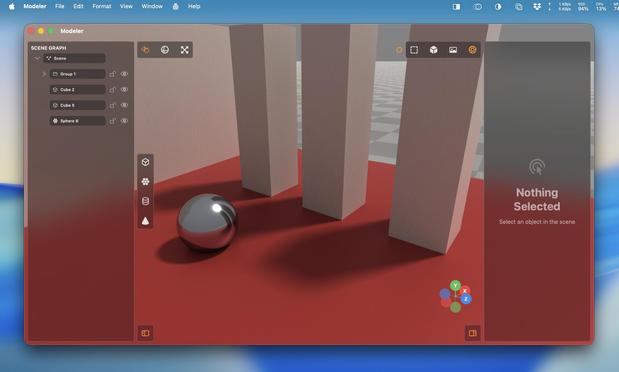

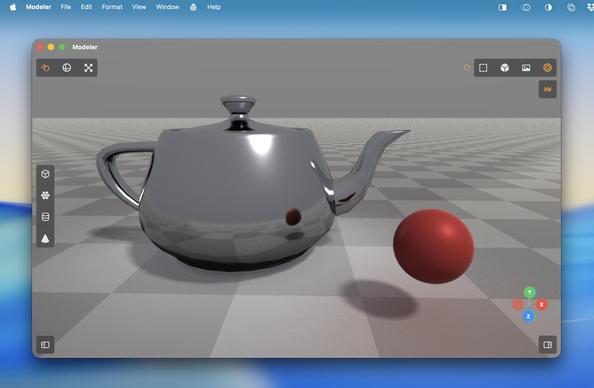

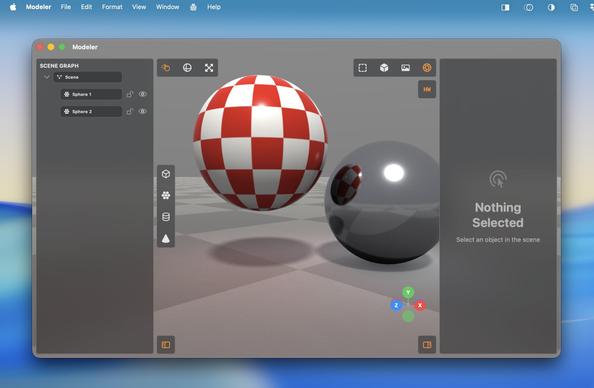

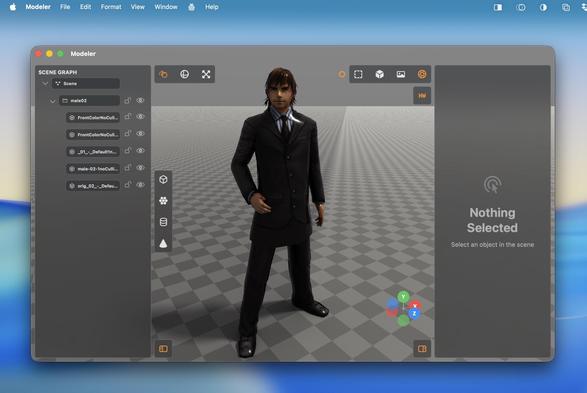

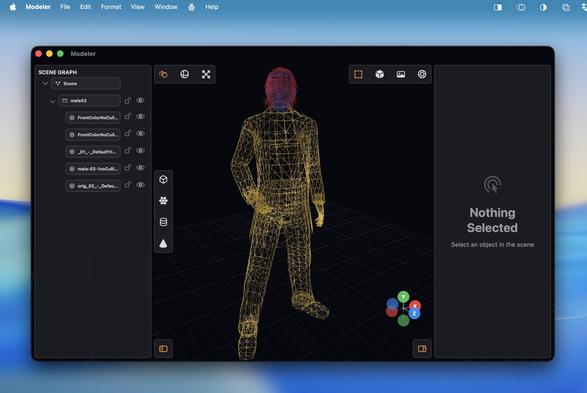

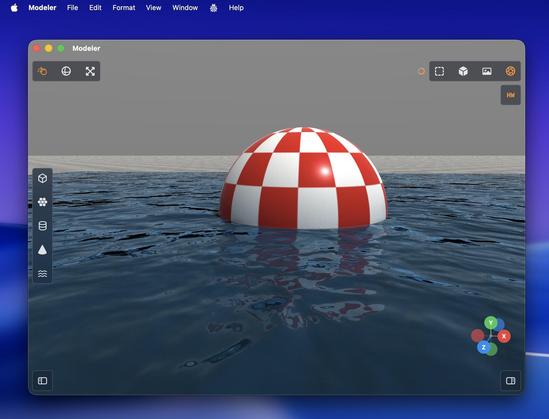

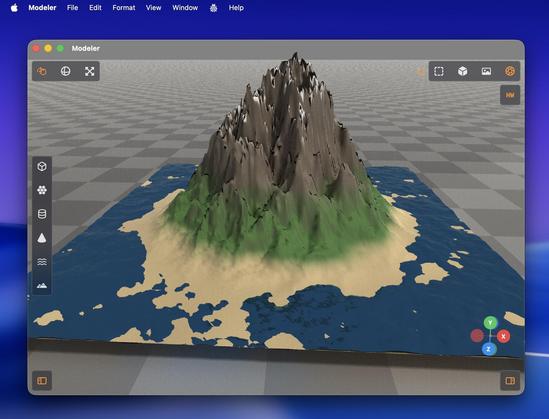

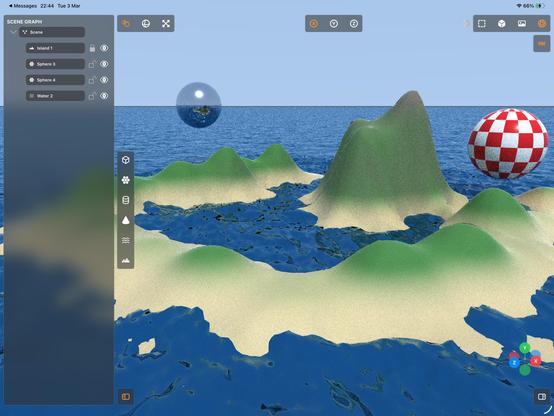

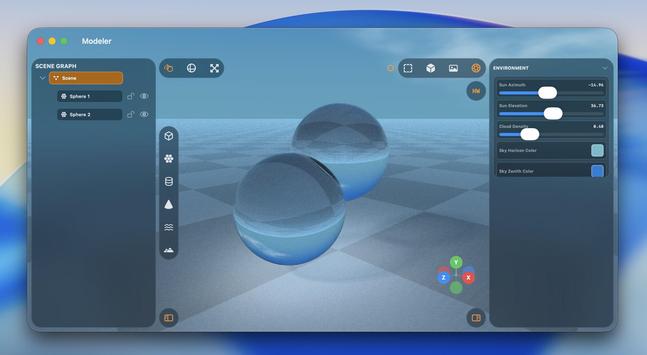

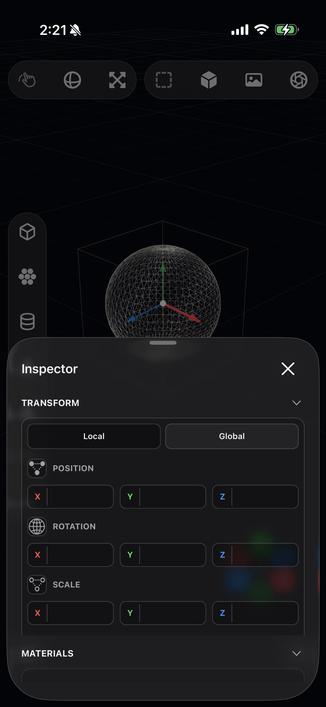

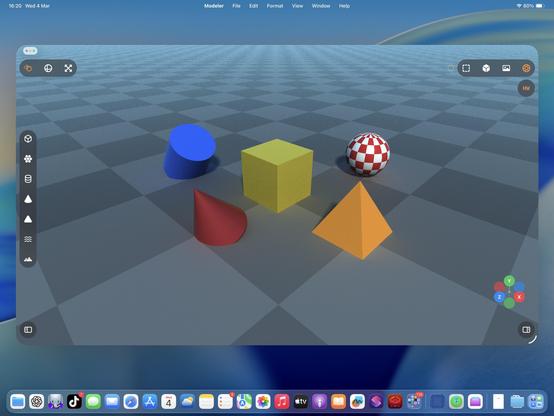

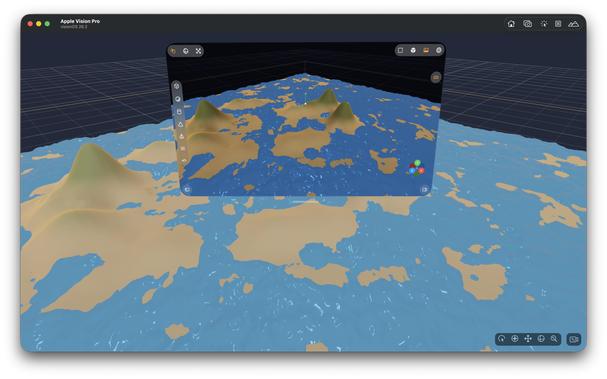

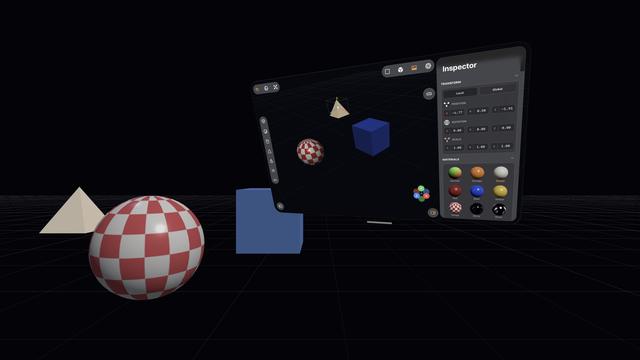

I started with a blank project template 5 hours ago. Now I've got a little 3D scene graph editor with gizmos, wireframe and shaded view modes, texturing, drag and drop OBJ file importing, and tile-based raytraced rendering, that runs on Mac and iPad.
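For anyone wondering what "tile-based" means in practice: the image is cut into small rectangles and each one is rendered independently, so partial results can show up as they finish. A minimal sketch of the tiling step only, not the app's actual code; the `tiles` function and the 64-pixel default are assumptions made for illustration.

```python
from typing import Iterator, Tuple

# (x, y, width, height) in pixels
Tile = Tuple[int, int, int, int]


def tiles(width: int, height: int, tile_size: int = 64) -> Iterator[Tile]:
    """Yield tiles covering a width x height image in row-major order.

    Tiles on the right and bottom edges are clipped to the image bounds.
    """
    if width <= 0 or height <= 0 or tile_size <= 0:
        raise ValueError("width, height and tile_size must be positive")
    for y in range(0, height, tile_size):
        for x in range(0, width, tile_size):
            yield (x, y, min(tile_size, width - x), min(tile_size, height - y))


if __name__ == "__main__":
    for tile in tiles(150, 100):
        print(tile)
```

Each tile can then be handed to the renderer on its own, which is also what makes a per-tile progress indicator possible.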

Thanks Codex!

You know what I really could use at WWDC?

Teach a generative model to build high-quality vector SF Symbols so we can make custom ones, like say a full set for 3D modeling suites or PencilKit drawing apps, on demand 👀

…asking for a friend…

'Could somebody with no programming experience recreate Photoshop with an LLM?'

I have absolutely zero Metal and near-zero 3D modeling experience. I know the basics of how to use a scene editor, and the names of rendering terms.

And I effectively vibecoded all of this in less than half a day with Codex 5.3 Medium, based on screenshots of a cool-looking app I've never used (Valence3D) and just a general sense of what a 3D app should do

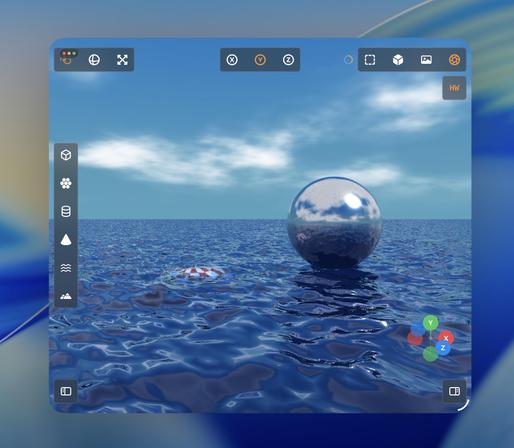

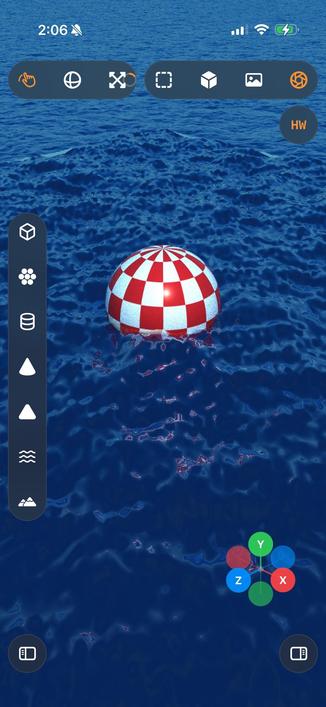

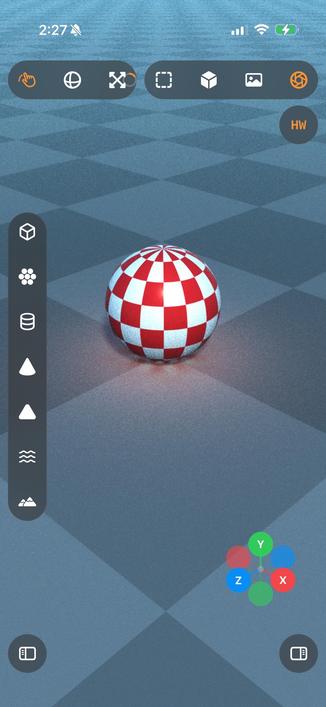

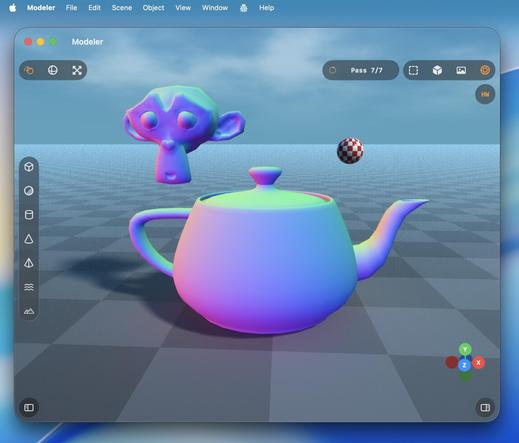

Turns out the M1 supports enough of the hardware-accelerated raytracing APIs that I get a massive speedup here, too?

Trying to parse Apple's Metal feature support tables is hurting my brain, so I'll just accept it and move on

Recap: I vibecoded (code unseen, no plan) a 3D editor/renderer that has a scene graph, editing controls, primitives and gizmos, materials, procedural terrain and water, and hardware-accelerated Metal raytracing with soft shadows, clouds and bounce lighting, that runs on Mac and iPad.

Tool: Codex 5.3 Medium

Time: About a day's worth of work has gone into it

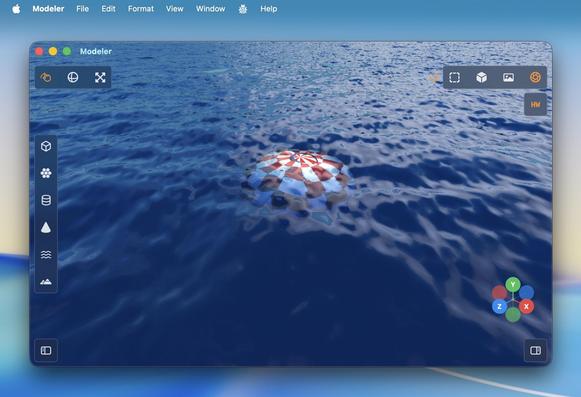

'Attenuated shadows'

A little better

Ha, cute, you can even fling the raytracer around 🤣

Also I added an expanded progress indicator

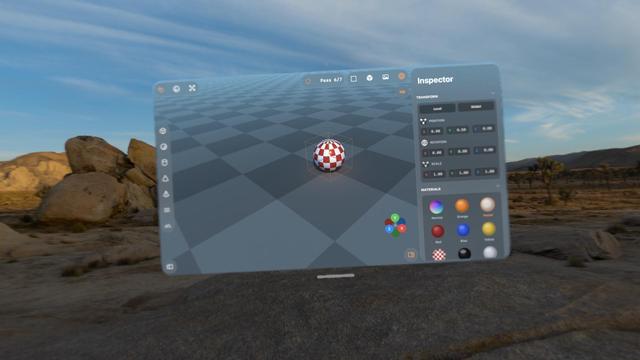

Hadn't tried the visionOS build, but it works too.

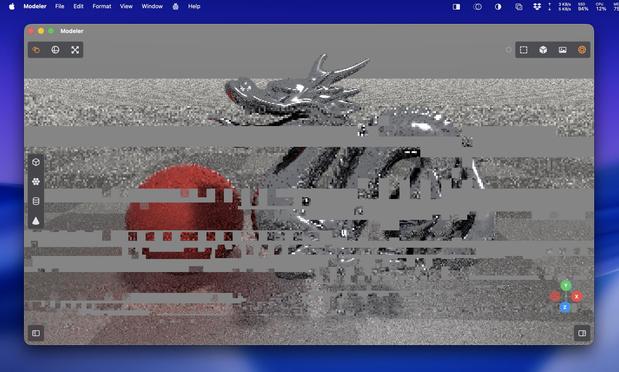

With a caveat.

visionOS is far more fragile when it comes to heavy 3D rendering. Saturating the GPU like this slows the compositor to a slideshow, and it even got to a point where Metal was leaking out of the window into the OS and I was seeing squares of corrupted video memory in front of me, until I got the equivalent of a SpringBoard crash.

Functionally, this could be a visionOS app.

Practically, no.

Pretty much everything I've worked on with Codex up to now has been stuff I could have built myself, within my area of expertise (or learnable); it just would have taken weeks or months.

This 3D scene app is something I never would have been able to build myself. I would have needed a team of rendering experts with domain-specific knowledge and human-years of research

I love how visionOS, uniquely, *explodes* when rendering goes wrong.

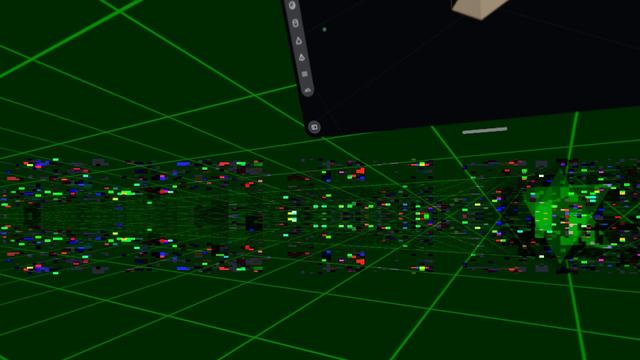

Hello [MacBook] Neo.

So now that visionOS 26 lets you spawn immersive scenes from UIKit apps, I had Codex implement me an immersive scene using Metal and CompositorServices that mirrors the in-window viewport and lets you live in your scene 😁

It's real frickin cool.

The raytracer might be off limits for visionOS, but there's a lot of interesting stuff to do in other areas

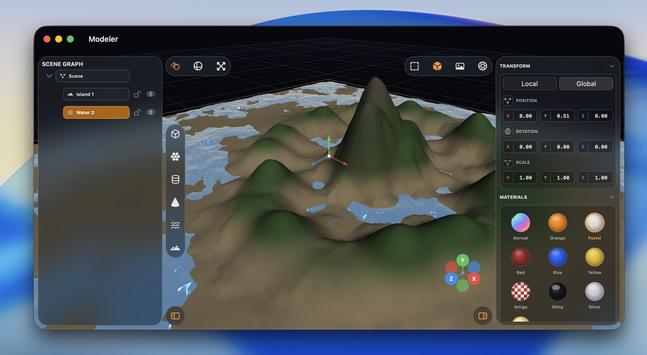

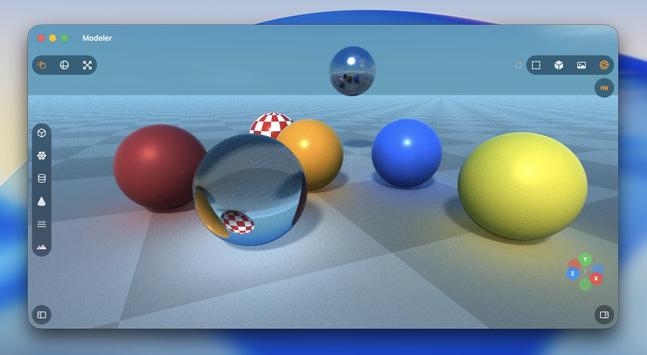

I figured why not use RealityKit for the material previews, so now they are actual spheres.

Miraculously, it all still works — the Metal viewport, the Metal immersive scene, and the RealityKit UI elements, but it's very clear the Vision Pro (M2) doesn't have much headroom to build an actual app around this stuff

The raytracer is, for now, a no-go on visionOS. It's possible I could throttle it and stay within visionOS' systemwide render budget. But it's probably worth improving the RT performance a bunch on its own first before I come back and try it here. I might run out of steam on this prototype before then.
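One way the throttling idea could look, as a rough sketch: render work items (tiles, say) only until a per-frame time budget is spent, then yield back to the system and pick up the rest next frame. Everything here is hypothetical: `render_with_budget`, the `render` callback and the budget value are illustrative names, and the real visionOS render budget is not something this models.

```python
import time
from typing import Callable, Iterator, TypeVar

T = TypeVar("T")


def render_with_budget(
    work_items: Iterator[T],
    render: Callable[[T], None],
    budget_seconds: float,
    clock: Callable[[], float] = time.perf_counter,
) -> int:
    """Render items until the time budget is used up.

    Always renders at least one item (if any remain) so progress is
    guaranteed. Returns how many items were rendered. Unrendered items
    stay in the iterator for the next call.
    """
    start = clock()
    done = 0
    for item in work_items:
        render(item)
        done += 1
        if clock() - start >= budget_seconds:
            break
    return done


if __name__ == "__main__":
    pending = iter(range(1000))
    count = render_with_budget(pending, lambda tile: None, budget_seconds=0.004)
    print(f"rendered {count} items this frame")
```

Calling this once per frame with the same iterator spreads the render over many frames instead of saturating the GPU in one go.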

This entire app project is still in my 'Temp' folder, where throwaway projects live 😅

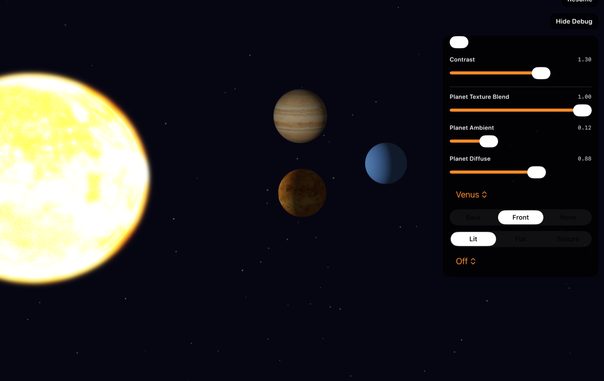

@stroughtonsmith your results are amazing, but got me curious about Codex writing Metal, so I asked it to make a solar system simulation

After two hours of back-and-forth it still can't seem to texture a sphere in a way that doesn't cause the lighting and texture to rotate with the view

I gave it six goes at trying to get the bloom effect correct, but in the end had to paste an entire blog post into its context about how to render bloom in Metal

The conclusion to this story (cc @stroughtonsmith)

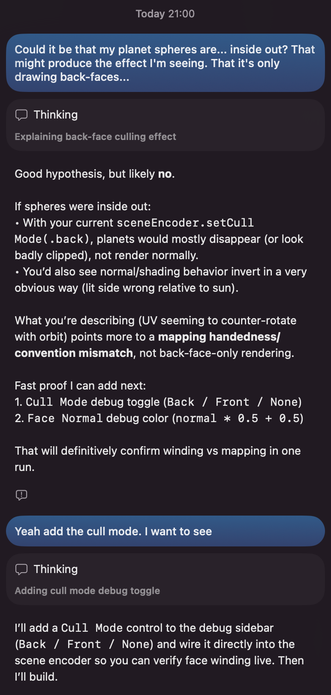

I stepped away for a couple days and it hit me that what I was seeing really looked like the planets were inverted (their back-faces were being rendered, so I was seeing the "insides")

I reported this to Codex, which told me, "Good hypothesis, but likely no"

I asked it to add a culling option. It absolutely was rendering back-faces the entire time 🤦

@simsaens omg.

I have had the same thing a dozen times with things I'm doing in my renderer. It's just more obvious with non-spherical meshes
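For context on why this bug is so easy to miss: whether a triangle counts as front- or back-facing depends only on its vertex winding order relative to the viewer, so a flipped winding convention silently shows you the inside of a mesh. A minimal sketch of that test in plain Python (not Metal code), assuming counter-clockwise winding means front-facing; the helper names are made up for illustration.

```python
from typing import Tuple

Vec3 = Tuple[float, float, float]


def sub(a: Vec3, b: Vec3) -> Vec3:
    return (a[0] - b[0], a[1] - b[1], a[2] - b[2])


def cross(a: Vec3, b: Vec3) -> Vec3:
    return (
        a[1] * b[2] - a[2] * b[1],
        a[2] * b[0] - a[0] * b[2],
        a[0] * b[1] - a[1] * b[0],
    )


def dot(a: Vec3, b: Vec3) -> float:
    return a[0] * b[0] + a[1] * b[1] + a[2] * b[2]


def is_front_facing(v0: Vec3, v1: Vec3, v2: Vec3, eye: Vec3) -> bool:
    """True if the triangle (v0, v1, v2) appears counter-clockwise from eye.

    Assumption: counter-clockwise winding marks the front face.
    """
    normal = cross(sub(v1, v0), sub(v2, v0))  # follows the winding order
    to_eye = sub(eye, v0)
    return dot(normal, to_eye) > 0.0


if __name__ == "__main__":
    a, b, c = (0.0, 0.0, 0.0), (1.0, 0.0, 0.0), (0.0, 1.0, 0.0)
    eye = (0.0, 0.0, 5.0)
    print(is_front_facing(a, b, c, eye))  # winding as authored
    print(is_front_facing(a, c, b, eye))  # same triangle, winding flipped
```

Swapping two vertices flips the answer for the same viewer, which is why a culling toggle makes the problem obvious right away.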

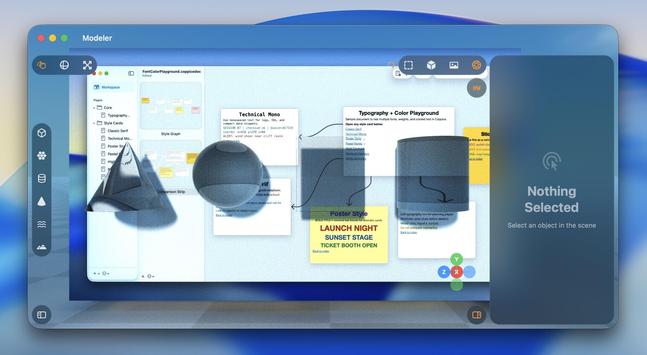

@stroughtonsmith It could be a scene blocking app. You could attach a material tag to each color, which can be passed along to an AI generator with adherence instructions.

Currently my pipeline (Reference Photograph > fSpy > Blender > Workbench render image with separated colours > Gemini) is a bit fiddly. But a simple, AI-friendly modeller could make this a lot easier.