@firepoet @the_other_jon

Thank you for this answer 😊

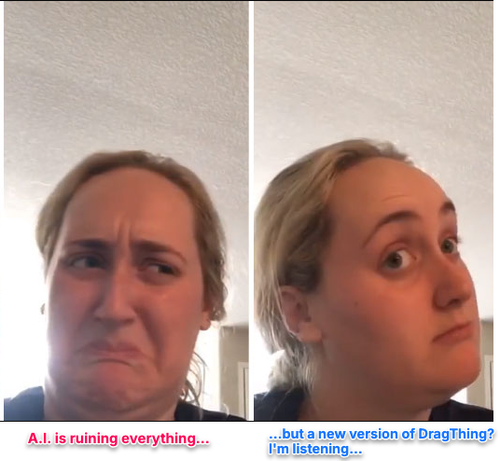

I respect that position, and you're right that ethical stances often require sacrifice. But I think we're drawing different lines here. I don't see AI as something we can just starve by not using it. It's already everywhere, being used by corporations, governments, everyone. So for me, the question is: do I abstain entirely while others use it uncritically, or do I use it thoughtfully and keep pushing for better regulation?

I am trying to navigate the reality I am living in.

As I said in other posts, it helps me manage my ADHD, and I'm also kind of forced to use it at work.

What I'm doing is advocating for using different models at work and talking with people about the problems with AI, all while being really excited about how we can use it to make lives better, because it has cool use cases.

Also, I'm pragmatic: individual boycotts have never achieved much. We need regulations in place; that's the most important thing.